Vanilla RNNs suffer from:

- Vanishing gradients (small weights → information fades)

- Exploding gradients (large weights → instability)

- Inability to learn long-term dependencies

LSTM was designed specifically to preserve information over long time horizons while remaining trainable with gradient descent.

Key design principle:

Separate memory from computation and control information flow explicitly.

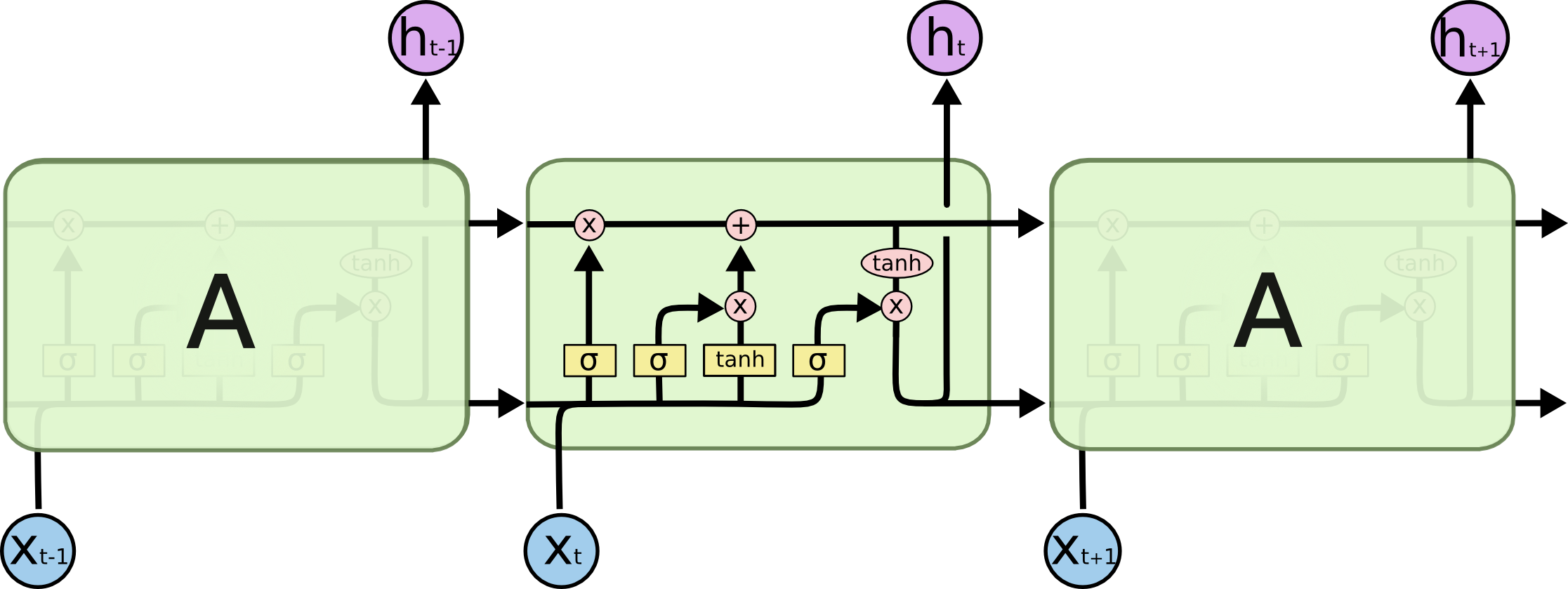

An LSTM cell is not just a neuron. It is a memory container with learnable gates that decide:

- What to forget

- What to store

- What to output

The memory is called the cell state $c_t$, which flows across time with minimal modification.

This is the critical difference from vanilla RNNs.

An LSTM cell consists of:

- Cell state $c_t$

  - Long-term memory

  - Additive updates → prevents vanishing gradients

- Hidden state $h_t$

  - Short-term output

  - Used for predictions and passed to next layer

- Three gates (sigmoid-controlled):

  - Forget gate

  - Input gate

  - Output gate

📌 Gates output values in $[0, 1]$, acting like soft switches.

Let:

- $x_t$: input at time $t$

- $h_{t-1}$: previous hidden state

- $c_{t-1}$: previous cell state

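The forget gate decides what fraction of previous memory to keep:

$$ f_t = \sigma(W_f [h_{t-1}, x_t] + b_f) $$

Here $\sigma$ is the sigmoid function and $[h_{t-1}, x_t]$ is the concatenation of the previous hidden state and the current input.

- $f_t = 1$: keep everything

- $f_t = 0$: forget everything

This lets the cell discard long-term memory that is no longer relevant.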

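The input gate controls how much new information enters memory:

$$ i_t = \sigma(W_i [h_{t-1}, x_t] + b_i) $$

Candidate memory:

$$ \tilde{c}_t = \tanh(W_c [h_{t-1}, x_t] + b_c) $$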

The most important equation in LSTM is the cell state update:

$$ c_t = f_t \odot c_{t-1} + i_t \odot \tilde{c}_t $$

📌 Key Insight:

- Additive update → gradients flow cleanly

- No repeated multiplication by the same weight matrix → vanishing gradients are largely avoided on the cell-state path

The output gate controls what part of memory is exposed:

$$ o_t = \sigma(W_o [h_{t-1}, x_t] + b_o) $$

Hidden state:

$$ h_t = o_t \odot \tanh(c_t) $$

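In vanilla RNN:

$$ h_t = \tanh(W_{hh} h_{t-1} + W_{xh} x_t + b_h) $$

Repeated multiplication:

$$ \frac{\partial h_T}{\partial h_k} = \prod_{t=k+1}^{T} \mathrm{diag}(1 - h_t^2) \, W_{hh} $$

In LSTM:

$$ \frac{\partial c_t}{\partial c_{t-1}} = \mathrm{diag}(f_t) \quad \text{(direct cell-state path)} $$

If $f_t \approx 1$, the gradient flows along this path almost unchanged.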
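The forget, input and output gates described above can be traced through a single time step in code. The following is a minimal sketch, assuming a scalar LSTM (one input feature, one hidden unit) so that every weight is a plain float; the function name `lstm_step`, the parameter layout and the example weights are illustrative choices, not part of the original notes.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def lstm_step(x_t, h_prev, c_prev, p):
    """One step of a scalar LSTM.

    p maps a block name ("f", "i", "c", "o") to (w_h, w_x, b):
    the weight on h_prev, the weight on x_t, and the bias.
    """
    def pre(name):
        w_h, w_x, b = p[name]
        return w_h * h_prev + w_x * x_t + b

    f_t = sigmoid(pre("f"))             # forget gate: fraction of c_prev to keep
    i_t = sigmoid(pre("i"))             # input gate: fraction of candidate to admit
    c_tilde = math.tanh(pre("c"))       # candidate memory
    o_t = sigmoid(pre("o"))             # output gate: fraction of memory to expose
    c_t = f_t * c_prev + i_t * c_tilde  # additive cell state update
    h_t = o_t * math.tanh(c_t)          # hidden state
    return h_t, c_t

# Hypothetical weights: forget gate saturated open, input gate saturated shut.
params = {
    "f": (0.0, 0.0, 10.0),
    "i": (0.0, 0.0, -10.0),
    "c": (0.5, 0.5, 0.0),
    "o": (0.0, 0.0, 0.0),
}

h, c = 0.0, 1.0
for x in [0.3, -0.7, 0.9]:
    h, c = lstm_step(x, h, c, params)
print(h, c)
```

With the forget gate held near 1 and the input gate near 0, the stored value stays close to its initial 1.0 regardless of the inputs, which is the "memory container" behaviour in miniature.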

A standard LSTM processes sequences left-to-right, using only past context. However, many tasks benefit from both past and future context.

Examples:

- Named Entity Recognition (NER): "Bank (institution) decided to open a new branch (location)"

- Part-of-Speech tagging: A word's tag depends on the surrounding words

- Machine translation: Aligning words requires bidirectional understanding

BiLSTM runs two independent LSTM layers:

- Forward LSTM ($\overrightarrow{LSTM}$): processes left-to-right

  $$ \overrightarrow{h}_t = \overrightarrow{LSTM}(x_t, \overrightarrow{h}_{t-1}) $$

- Backward LSTM ($\overleftarrow{LSTM}$): processes right-to-left

  $$ \overleftarrow{h}_t = \overleftarrow{LSTM}(x_t, \overleftarrow{h}_{t+1}) $$

Final representation concatenates both:

$$ h_t = [\overrightarrow{h}_t ; \overleftarrow{h}_t] $$

Output dimension: 2 × hidden_size

- Parameters: 2× that of unidirectional LSTM

- Computation: 2× forward passes required

- Memory: 2× hidden states stored

BiLSTM requires the entire sequence at once (no streaming):

- ✅ Suitable for batch processing, transcription

- ❌ Not suitable for real-time applications (speech recognition during speaking)

Forward LSTM: x₁ → x₂ → x₃ → x₄

↓ ↓ ↓ ↓

h₁→ h₂→ h₃→ h₄→

Backward LSTM: x₁ ← x₂ ← x₃ ← x₄

↓ ↓ ↓ ↓

h₁← h₂← h₃← h₄←

Output: [h₁→ h₁←] [h₂→ h₂←] [h₃→ h₃←] [h₄→ h₄←]
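The wiring in the diagram can be sketched in a few lines. To keep the example short it uses a toy tanh recurrence as a stand-in for a full LSTM cell; `toy_cell`, `run`, `bidirectional` and the weights 0.5 and -0.3 are hypothetical names and values chosen for illustration. The point is only the data flow: one pass left-to-right, one pass right-to-left with separate parameters, then a per-position pairing.

```python
import math

def toy_cell(x_t, h_prev, w):
    """Stand-in recurrent cell (a tanh RNN step), NOT a full LSTM."""
    return math.tanh(w * h_prev + x_t)

def run(cell, xs):
    """Run a cell over xs in the given order, returning one state per step."""
    h, out = 0.0, []
    for x in xs:
        h = cell(x, h)
        out.append(h)
    return out

def bidirectional(cell_fwd, cell_bwd, xs):
    fwd = run(cell_fwd, xs)
    # Process the reversed sequence, then flip back so index t lines up with x_t.
    bwd = run(cell_bwd, xs[::-1])[::-1]
    # "Concatenation": each position carries both directions' states.
    return list(zip(fwd, bwd))

# The two directions have independent parameters (here: different w).
fwd_cell = lambda x, h: toy_cell(x, h, w=0.5)
bwd_cell = lambda x, h: toy_cell(x, h, w=-0.3)

xs = [1.0, 0.0, 0.0, -1.0]
outputs = bidirectional(fwd_cell, bwd_cell, xs)
for t, (h_f, h_b) in enumerate(outputs, start=1):
    print(t, h_f, h_b)
```

The backward state at position 1 already depends on the last input, which is why the whole sequence must be available before any output can be produced.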

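A single LSTM layer learns one level of abstraction. Stacking LSTM layers lets each layer build on the features of the one below; an idealized picture of the resulting hierarchy is: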

- Layer 1: Low-level patterns (phonemes, character combinations)

- Layer 2: Medium-level patterns (words, sub-phrases)

- Layer 3: High-level semantics (meaning, context)

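For layers $l = 1, \dots, L$:

$$ h_t^{(l)}, c_t^{(l)} = \mathrm{LSTM}^{(l)}\left(h_t^{(l-1)},\, h_{t-1}^{(l)},\, c_{t-1}^{(l)}\right) $$

Where:

- $h_t^{(0)} = x_t$ (input)

- $h_t^{(L)}$ is the top-layer output used for the final prediction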

| Aspect | Shallow (1-2 layers) | Deep (4+ layers) |
|---|---|---|
| Capacity | Low | High |
| Computational cost | Low | High |
| Gradient flow | Better | Risk of vanishing gradients |
| Overfitting risk | Low | High (needs regularization) |
| Training time | Fast | Slow |
| Representation power | Limited | Rich hierarchical features |

- 2-3 layers: Standard for most tasks (translation, tagging)

- 4-6 layers: Deep models (large datasets, complex tasks)

- Beyond 6 layers: Rarely beneficial without residual connections or layer normalization

Input Sequence (batch_size × seq_len × embedding_dim)

↓

LSTM Layer 1 (hidden_size=512, bidirectional=True)

↓ [output: batch × seq_len × 1024]

Dropout (p=0.5)

↓

LSTM Layer 2 (hidden_size=512, bidirectional=True)

↓ [output: batch × seq_len × 1024]

Dropout (p=0.5)

↓

LSTM Layer 3 (hidden_size=256, bidirectional=True)

↓ [output: batch × seq_len × 512]

Output layer
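The shape annotations in the stack above follow from one rule: a bidirectional layer emits `2 × hidden_size` features per time step, and that becomes the input size of the next layer. A small sketch of this bookkeeping, where the helper names and the embedding size of 300 are assumptions made for the example:

```python
def stack_output_dims(layers):
    """layers: list of (hidden_size, bidirectional) tuples.

    Returns the per-time-step feature size each layer emits.
    """
    return [hidden * (2 if bidirectional else 1) for hidden, bidirectional in layers]

def stack_input_dims(embedding_dim, layers):
    """Each layer consumes the embedding (layer 1) or the previous layer's output."""
    return [embedding_dim] + stack_output_dims(layers)[:-1]

layers = [(512, True), (512, True), (256, True)]
print(stack_output_dims(layers))      # [1024, 1024, 512]
print(stack_input_dims(300, layers))  # [300, 1024, 1024]
```

Dropout between layers does not change these sizes, so it is omitted from the calculation.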

LSTM has 3 gates (forget, input, output) and 2 states (cell, hidden). This is complex.

GRU simplifies by:

- Merging cell and hidden state into single state $h_t$

- Reducing to 2 gates (reset, update)

- Maintaining gradient flow benefits

- About 25% fewer parameters than LSTM for the same sizes (three weight blocks instead of four)
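The parameter saving can be checked by counting weights. The sketch below assumes the common formulation in which every gate or candidate block has an input-to-hidden matrix, a hidden-to-hidden matrix and one bias vector (some libraries store two bias vectors per block, which shifts the totals slightly but not the ratio). The function names and the sizes `m = 128`, `n = 256` are illustrative.

```python
def block_params(m, n):
    """Weights in one gate/candidate block: n x (n + m) matrix plus n biases."""
    return n * (n + m) + n

def lstm_params(m, n):
    # Four blocks: forget, input, candidate, output.
    return 4 * block_params(m, n)

def gru_params(m, n):
    # Three blocks: reset, update, candidate.
    return 3 * block_params(m, n)

m, n = 128, 256  # hypothetical input size and hidden size
ratio = gru_params(m, n) / lstm_params(m, n)
print(lstm_params(m, n), gru_params(m, n), ratio)
```

Under these assumptions the ratio is 3/4 for any `m` and `n`, so a GRU layer carries 25% fewer parameters than an LSTM layer of the same size.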

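Reset gate (what to forget):

$$ r_t = \sigma(W_r [h_{t-1}, x_t] + b_r) $$

Update gate (how much to update):

$$ z_t = \sigma(W_z [h_{t-1}, x_t] + b_z) $$

Candidate activation:

$$ \tilde{h}_t = \tanh(W_h [r_t \odot h_{t-1}, x_t] + b_h) $$

Hidden state update:

$$ h_t = (1 - z_t) \odot h_{t-1} + z_t \odot \tilde{h}_t $$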

| Feature | LSTM | GRU |
|---|---|---|
| Gates | 3 (forget, input, output) | 2 (reset, update) |
| States | 2 (cell, hidden) | 1 (hidden) |
| Parameters | $4(n^2 + nm + n)$ | $3(n^2 + nm + n)$ |
| Computation | Slower | Faster |
| Memory | Higher | Lower |
| Long-term memory | Excellent | Good |
| Empirical performance | Slightly better | Similar on most tasks |

Where $n$ is the hidden size and $m$ is the input size.

✅ Use GRU when:

- Limited computational resources

- Hardware constraints (mobile, IoT)

- Speed is critical

- Dataset is small-to-medium

✅ Use LSTM when:

- Maximum model capacity needed

- Very long sequences

- Computational resources available

- Need interpretability of cell state

Despite theoretical differences, GRU and LSTM perform comparably on most benchmarks. Choice often depends on engineering constraints rather than accuracy.
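For reference, the GRU update (reset gate, update gate, candidate, interpolation) fits in a few lines. This is a minimal sketch assuming a scalar GRU and the convention that an update gate of 1 means "replace the state with the candidate"; some references and libraries swap the roles of the update gate and its complement. `gru_step` and the example weights are hypothetical.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def gru_step(x_t, h_prev, p):
    """One step of a scalar GRU. p maps "r", "z", "h" to (w_h, w_x, b)."""
    def pre(name, h):
        w_h, w_x, b = p[name]
        return w_h * h + w_x * x_t + b

    r_t = sigmoid(pre("r", h_prev))               # reset gate
    z_t = sigmoid(pre("z", h_prev))               # update gate
    h_tilde = math.tanh(pre("h", r_t * h_prev))   # candidate uses the reset state
    return (1.0 - z_t) * h_prev + z_t * h_tilde   # interpolate old state and candidate

# Hypothetical weights; only the update-gate bias differs between the two runs.
keep = {"r": (0.0, 0.0, 0.0), "z": (0.0, 0.0, -20.0), "h": (1.0, 1.0, 0.0)}
overwrite = {"r": (0.0, 0.0, 0.0), "z": (0.0, 0.0, 20.0), "h": (1.0, 1.0, 0.0)}

h_keep = gru_step(0.5, 0.8, keep)            # update gate ~0: state is carried over
h_overwrite = gru_step(0.5, 0.8, overwrite)  # update gate ~1: state becomes the candidate
print(h_keep, h_overwrite)
```

The single interpolation line plays the role of both the forget and input gates of the LSTM, which is where the reduction to one state and two gates comes from.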

Standard LSTM gates depend only on hidden state and input:

$$ g_t = \sigma(W_g [h_{t-1}, x_t] + b_g), \quad g \in \{f, i, o\} $$

They ignore the cell state they're supposed to regulate. This is suboptimal because:

- Forget gate doesn't "see" what it's forgetting

- Input gate doesn't see how full the cell is

- Output gate can't check cell magnitude

Peephole connections let gates directly observe the cell state.

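Forget gate (with peephole):

$$ f_t = \sigma(W_f [h_{t-1}, x_t] + U_f \odot c_{t-1} + b_f) $$

Input gate (with peephole):

$$ i_t = \sigma(W_i [h_{t-1}, x_t] + U_i \odot c_{t-1} + b_i) $$

Output gate (with peephole):

$$ o_t = \sigma(W_o [h_{t-1}, x_t] + U_o \odot c_t + b_o) $$

Where:

- $\odot$ is element-wise multiplication

- $U_f, U_i, U_o$ are peephole weight vectors (learned)

- Note: Output gate uses $c_t$ (current), not $c_{t-1}$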
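The effect of the peephole terms can be seen in a scalar sketch. It assumes one input feature and one hidden unit, so each peephole weight is a single float; `peephole_lstm_step` and all numeric values are illustrative. Note the ordering: the output gate is computed after the cell update because it looks at the new cell state.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def peephole_lstm_step(x_t, h_prev, c_prev, p, u):
    """Scalar LSTM step with peepholes.

    p maps "f", "i", "c", "o" to (w_h, w_x, b); u maps "f", "i", "o"
    to the peephole weight on the cell state.
    """
    def pre(name):
        w_h, w_x, b = p[name]
        return w_h * h_prev + w_x * x_t + b

    f_t = sigmoid(pre("f") + u["f"] * c_prev)  # forget gate sees the old cell state
    i_t = sigmoid(pre("i") + u["i"] * c_prev)  # input gate sees the old cell state
    c_tilde = math.tanh(pre("c"))
    c_t = f_t * c_prev + i_t * c_tilde
    o_t = sigmoid(pre("o") + u["o"] * c_t)     # output gate sees the updated cell state
    h_t = o_t * math.tanh(c_t)
    return h_t, c_t

zero = {name: (0.0, 0.0, 0.0) for name in ("f", "i", "c", "o")}
no_peep = {"f": 0.0, "i": 0.0, "o": 0.0}
# Hypothetical peephole: a large cell value pushes the forget gate shut.
flush_when_full = {"f": -5.0, "i": 0.0, "o": 0.0}

_, c_plain = peephole_lstm_step(0.0, 0.0, 4.0, zero, no_peep)
_, c_peep = peephole_lstm_step(0.0, 0.0, 4.0, zero, flush_when_full)
print(c_plain, c_peep)
```

With identical inputs and hidden state, only the peephole version reacts to how large the stored value is, which is the capability the standard gates lack.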

Peephole connections provide:

- Modest improvement on fine-grained timing tasks

- Better learning dynamics on some sequential problems

- Minimal computational overhead (only element-wise operations)

✅ Effective for:

- Music generation (precise timing matters)

- Digit recognition in images

- Speech recognition

- Tasks requiring precise temporal alignment

❌ Less critical for:

- Language modeling

- Machine translation

- Most NLP tasks

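In a vanilla RNN, the gradient across time is a product of per-step Jacobians:

$$ \frac{\partial h_T}{\partial h_k} = \prod_{t=k+1}^{T} \frac{\partial h_t}{\partial h_{t-1}} = \prod_{t=k+1}^{T} \mathrm{diag}(1 - h_t^2) \, W_{hh} $$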

Repeated matrix multiplication causes:

- Vanishing gradients: $(W_{hh})^T$ has spectral radius $< 1$

- Exploding gradients: $(W_{hh})^T$ has spectral radius $> 1$

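In LSTM, the cell state has additive updates:

$$ c_t = f_t \odot c_{t-1} + i_t \odot \tilde{c}_t $$

Gradient w.r.t. cell state (along the direct cell-state path):

$$ \frac{\partial c_t}{\partial c_{t-1}} = \mathrm{diag}(f_t) $$

Key insight:

If $f_t \approx 1$: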

- Gradients flow unchanged through time

- No exponential decay or explosion

- Information preserved over hundreds of steps
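A quick numerical check makes the contrast concrete. The sketch below multiplies per-step derivative factors along a 100-step path; the factor values 0.9, 1.1 and 0.999 are hypothetical stand-ins for a contracting RNN Jacobian, an expanding one, and a forget gate close to 1.

```python
def path_gradient(factors):
    """Multiply the per-step derivative factors along one backprop path."""
    grad = 1.0
    for factor in factors:
        grad *= factor
    return grad

steps = 100
rnn_vanishing = path_gradient([0.9] * steps)   # shrinks geometrically
rnn_exploding = path_gradient([1.1] * steps)   # grows geometrically
lstm_cell = path_gradient([0.999] * steps)     # forget gate near 1 barely attenuates

print(rnn_vanishing, rnn_exploding, lstm_cell)
```

The first value is on the order of 1e-5, the second on the order of 1e4, and the third stays around 0.9. The LSTM path still involves a product, but its factors are gate activations the network can learn to hold near 1.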

Vanilla RNN:

┌─────────────┐

│ h₀ │

└────┬────────┘

│ × W

↓

┌─────────────┐

│ h₁ │ ← Gradient is multiplied by W at each step

└────┬────────┘

│ × W

↓

┌─────────────┐

│ h₂ │ ← W^t → 0 or ∞

└──────────────┘

LSTM (cell state path):

┌─────────────┐

│ c₀ │

└────┬────────┘

│ × f₁ (forget gate)

↓

┌─────────────┐

│ c₁ │ ← If f ≈ 1, gradient passes through unchanged

└────┬────────┘

│ × f₂ (forget gate)

↓

┌─────────────┐

│ c₂ │ ← Gradient accumulates additively, not exponentially

└──────────────┘

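For LSTM, the gradient from the loss to an early cell state $c_k$, following only the direct cell-state path, is:

$$ \frac{\partial \mathcal{L}}{\partial c_k} = \sum_{t=k}^{T} \left( \prod_{j=k+1}^{t} f_j \right) \odot \frac{\partial \mathcal{L}}{\partial h_t} \odot o_t \odot \left(1 - \tanh^2(c_t)\right) $$

where $\partial \mathcal{L} / \partial h_t$ is the loss gradient arriving at $h_t$ from the output at step $t$. Contributions from different timesteps add up, and each is scaled by a product of forget gates rather than by powers of a weight matrix, so the gradient does not vanish as long as the relevant $f_j$ stay close to 1.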

✅ LSTM can learn dependencies 200+ timesteps apart

✅ Gradient norm along the cell-state path stays far more stable than in a vanilla RNN

✅ Forget gate can learn to set $f_t \approx 1$ for information that must be kept

Although LSTM prevents vanishing gradients on the cell state path:

- Hidden state gradients can still vanish (hidden state is not purely additive)

- Deep stacked LSTMs benefit from layer normalization or residual connections

Many problems involve:

- Variable-length input

- Variable-length output

- No one-to-one alignment

Examples:

- Machine translation

- Speech-to-text

- Text summarization

- Question answering

- Video captioning

Plain feedforward networks, and RNNs that emit exactly one output per input step, cannot handle this flexibly.

Encode the input sequence into a fixed-size representation, then decode it into another sequence.

The encoder reads the entire input sequence and compresses it into:

- A context vector

- Often the final hidden state $h_T$ (and cell state $c_T$ in LSTM)

Given input sequence:

$$ (x_1, x_2, \dots, x_T) $$

The encoder LSTM computes:

$$ (h_1, c_1), (h_2, c_2), \dots, (h_T, c_T) $$

Final states:

- $h_T$: summary of sequence

- $c_T$: long-term memory

These are passed to the decoder.
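A minimal encoder sketch shows the key property: whatever the input length, the result handed to the decoder has the same size. It assumes a scalar LSTM with hypothetical weights; `encode` and `lstm_step` are illustrative names, and a real encoder would use vector states and learned parameters.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def lstm_step(x_t, h_prev, c_prev, w):
    """Scalar LSTM step; w maps "f", "i", "c", "o" to (w_h, w_x, b)."""
    pre = {g: w_h * h_prev + w_x * x_t + b for g, (w_h, w_x, b) in w.items()}
    c_t = sigmoid(pre["f"]) * c_prev + sigmoid(pre["i"]) * math.tanh(pre["c"])
    h_t = sigmoid(pre["o"]) * math.tanh(c_t)
    return h_t, c_t

def encode(xs, w):
    """Read the whole sequence; return every (h_t, c_t) and the final pair."""
    h, c, states = 0.0, 0.0, []
    for x in xs:
        h, c = lstm_step(x, h, c, w)
        states.append((h, c))
    return states, (h, c)

weights = {g: (0.5, 1.0, 0.0) for g in ("f", "i", "c", "o")}  # hypothetical values

states_short, ctx_short = encode([0.2, -0.1], weights)
states_long, ctx_long = encode([0.2, -0.1, 0.4, 0.9, -0.3], weights)
print(len(states_short), len(states_long))  # one state per input step
print(ctx_short, ctx_long)                  # each context is a single (h_T, c_T) pair
```

The per-step states are kept here as well because attention-based decoders use all of them, not just the final pair.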

❌ Encoder does not output predictions

✅ Encoder outputs a latent representation

The decoder:

- Generates output one step at a time

- Uses encoder’s final state as initialization

- Predicts next token conditioned on previous outputs

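Initial states:

$$ h_0^{dec} = h_T, \quad c_0^{dec} = c_T $$

At time $t$:

$$ h_t^{dec}, c_t^{dec} = \mathrm{LSTM}(y_{t-1}, h_{t-1}^{dec}, c_{t-1}^{dec}) $$

Output probability:

$$ P(y_t \mid y_{<t}, x) = \mathrm{softmax}(W_y h_t^{dec} + b_y) $$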

Training (Teacher Forcing):

- Ground truth previous token is used

- Faster convergence

Inference:

- Model’s own prediction is fed back

- Error accumulation possible
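The difference between the two modes is only which token is fed back at each step. The sketch below uses a lookup table as a stand-in for a trained decoder (it ignores the encoder state entirely) and plants one deliberate mistake in it; `TOY_DECODER`, `decode` and the token strings are hypothetical.

```python
# Stand-in for a trained decoder step: previous token -> predicted next token.
# The entry for "suis" is deliberately wrong ("une" instead of "un").
TOY_DECODER = {
    "<sos>": "Je",
    "Je": "suis",
    "suis": "une",
    "un": "étudiant",
    "une": "étudiante",
    "étudiant": "<eos>",
    "étudiante": "<eos>",
}

def decode(step_fn, target=None, max_len=10, sos="<sos>", eos="<eos>"):
    """Teacher forcing when `target` is given, free-running decoding otherwise."""
    prev, outputs = sos, []
    steps = len(target) if target is not None else max_len
    for t in range(steps):
        pred = step_fn(prev)
        outputs.append(pred)
        if target is not None:
            prev = target[t]   # training: feed the ground-truth token
        else:
            if pred == eos:
                break
            prev = pred        # inference: feed the model's own prediction
    return outputs

target = ["Je", "suis", "un", "étudiant", "<eos>"]
teacher_forced = decode(TOY_DECODER.__getitem__, target=target)
free_running = decode(TOY_DECODER.__getitem__)
print(teacher_forced)
print(free_running)
```

Under teacher forcing the planted mistake affects one position only, because the next step is fed the correct token. In free-running mode the wrong token is fed back and the following prediction goes wrong as well, a small instance of error accumulation.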

Putting the pieces together, the end-to-end flow is:

- Encoder reads input sequence

- Final encoder states summarize input

- Decoder initializes from encoder

- Decoder generates output sequentially

Input:

"I am a student"

Encoder produces:

Context vector

Decoder generates:

"Je suis un étudiant"

One token at a time.

❌ Fixed-size context vector

❌ Information bottleneck for long sequences

This led to Attention Mechanisms, which allow the decoder to look back at all encoder states.

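Instead of using only $h_T$, the decoder builds a separate context vector at every output step $t$ as a weighted sum of all encoder states:

$$ \mathrm{context}_t = \sum_{i=1}^{T} \alpha_{ti} h_i $$

Where:

- $\alpha_{ti}$ are attention weights (non-negative, summing to 1 over $i$)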
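A weighted sum over encoder states can be sketched as follows. The notes do not say how the weights are scored, so this example assumes dot-product scoring between a decoder query and each encoder state, followed by a softmax; `attend`, the query and the encoder states are made-up illustrations.

```python
import math

def softmax(scores):
    peak = max(scores)                        # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attend(query, encoder_states):
    """Return attention weights and the context vector for one decoder step."""
    # Assumed scoring function: dot product of the query with each encoder state.
    scores = [sum(q * h for q, h in zip(query, state)) for state in encoder_states]
    alphas = softmax(scores)
    dim = len(encoder_states[0])
    context = [
        sum(a * state[d] for a, state in zip(alphas, encoder_states))
        for d in range(dim)
    ]
    return alphas, context

encoder_states = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]  # hypothetical h_1..h_3
query = [0.0, 5.0]                                      # hypothetical decoder state

alphas, context = attend(query, encoder_states)
print(alphas)
print(context)
```

Here the query aligns with the second feature, so nearly all weight goes to the second and third encoder states and the first is almost ignored. The context vector changes with the query, which removes the single fixed-size bottleneck.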

This is the foundation of Transformers.

| Misconception | Correction |
|---|---|
| LSTM remembers everything | It learns what to remember |
| Gates are hard switches | Gates are differentiable |
| Encoder outputs prediction | Encoder outputs representation |
| LSTM fully solves long memory | Attention does better |
| LSTM is obsolete | Still used in low-latency systems |

- Neural machine translation

- Speech recognition

- Time-series forecasting

- Video captioning

- Text summarization

- Anomaly detection

| Component | Purpose |
|---|---|
| LSTM Cell | Controlled memory |
| Gates | Regulate information |
| Encoder | Compress sequence |
| Decoder | Generate sequence |
| Teacher forcing | Stable training |
| Attention | Remove bottleneck |

LSTM introduced learnable memory control, and Encoder–Decoder architectures enabled sequence-to-sequence learning, forming the conceptual bridge from RNNs to modern Transformer-based models.