We are currently using Determined AI to manage our GPU Cluster.

You can open the dashboard (a.k.a. the WebUI) at the following URL and log in:

Determined is a successful (acquired by Hewlett Packard Enterprise in 2021) open-source deep learning training platform that helps researchers train models more quickly, easily share GPU resources, and collaborate more effectively. Its architecture is shown below:

You need to ask the system admin to get your user account.

The WebUI will automatically redirect users to a login page if there is no valid Determined session established on that browser. After logging in, the user will be redirected to the URL they initially attempted to access.

Users can also interact with Determined using a command-line interface (CLI). The CLI is distributed as a Python wheel package; once the wheel has been installed, the CLI can be used via the det command.

You can use the CLI either on the login node or on your local development machine.

- Installation

The CLI can be installed via pip:

pip install determined

- Configure environment variable

If you are using your own PC, you need to add the environment variable DET_MASTER=10.0.1.66.

P.S. For historical reasons, if you are using the VPN, you should set this to DET_MASTER=10.0.1.68.

For Linux and other *nix systems, including macOS: if you are using bash, append this line to the end of ~/.bashrc (most systems) or ~/.bash_profile (some macOS); if you are using zsh, append it to the end of ~/.zshrc:

export DET_MASTER=10.0.1.66

For Windows, you can follow this tutorial: tutorial

- Log in

In the CLI, the user login subcommand can be used to authenticate a user:

det user login <username>

Note: If you did not configure the environment variable, you need to specify the master's IP:

det -m 10.0.1.66 user login <username>

P.S. If you are using the VPN, you should use

det -m 10.0.1.68 user login <username>
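The choice between the two master addresses can be captured in a small helper. This is only a sketch: the function name login_command, the on_vpn flag, and the username alice are hypothetical, and the two IP addresses are the ones given in this guide. It builds the argument list for det user login and adds -m only when DET_MASTER is not already set.

```python
import os

MASTER_DIRECT = "10.0.1.66"  # master address used in this guide
MASTER_VPN = "10.0.1.68"     # master address used in this guide when on the VPN


def login_command(username, on_vpn=False, environ=None):
    """Build the argv for `det user login`; add -m only if DET_MASTER is unset."""
    env = os.environ if environ is None else environ
    cmd = ["det"]
    if not env.get("DET_MASTER"):
        # No environment variable configured, so pass the master explicitly.
        cmd += ["-m", MASTER_VPN if on_vpn else MASTER_DIRECT]
    cmd += ["user", "login", username]
    return cmd
```

For example, " ".join(login_command("alice", on_vpn=True, environ={})) gives the VPN form of the command shown above; the list can also be passed to subprocess.run to execute it.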

Users have blank passwords by default. If desired, a user can change their own password using the user change-password subcommand:

det user change-password

Here is a template of a task configuration file, in YAML format:

description: <task_name>
resources:
  slots: 1
  resource_pool: 32c64t_256_3090
  shm_size: 4G
bind_mounts:
  - host_path: /workspace/<user_name>/
    container_path: /run/determined/workdir/home/
  - host_path: /workspace/<user_name>/.cache/
    container_path: /run/determined/workdir/.cache/
  - host_path: /datasets/
    container_path: /run/determined/workdir/data/
  - host_path: /SSD/
    container_path: /run/determined/workdir/ssd_data/
environment:
  image: determinedai/environments:cuda-11.3-pytorch-1.10-lightning-1.5-tf-2.8-deepspeed-0.5.10-gpu-0.18.2

Notes:

- You need to change task_name and user_name to your own.
- resources.slots is the number of GPUs you are requesting, which is set to 1 here.
- resources.resource_pool is the resource pool you are requesting to use. Currently we have three resource pools: 32c64t_256_3090, 48c96t_512_3090 and 64c128t_512_4090.
- resources.shm_size is set to 4G by default. You may need a larger size if you use multiple dataloader workers in PyTorch, etc.
- In bind_mounts, each host_path/container_path pair maps a host directory into the container: the first maps your workspace directory (/workspace/<user_name>/), the second maps its .cache directory, the third maps the dataset directory (/datasets/), and the fourth maps /SSD/.
- In environment.image, an official image by Determined AI is used. Determined AI provides Docker images that include common deep-learning libraries and frameworks. You can also build a custom image based on your project's dependencies, which is discussed in this tutorial: Custom Containerized Environment.
- How bind_mounts works:

Click "Launch JupyterLab" in the upper left corner of the Determined Web UI:

When the sidebar is collapsed the button becomes like this:

In the dialog window that pops up, select show full config:

Then enter your YAML configuration and hit Launch!

Save the YAML configuration to, let's say, test_task.yaml. You can start a Jupyter Notebook (Lab) environment or a simple shell environment. A notebook is a web interface and thus more user-friendly. However, you can use Visual Studio Code or PyCharm and connect to a shell environment[3], which brings more flexibility and productivity if you are familiar with these editors.

For notebook:

det notebook start --config-file test_task.yamlFor shell:

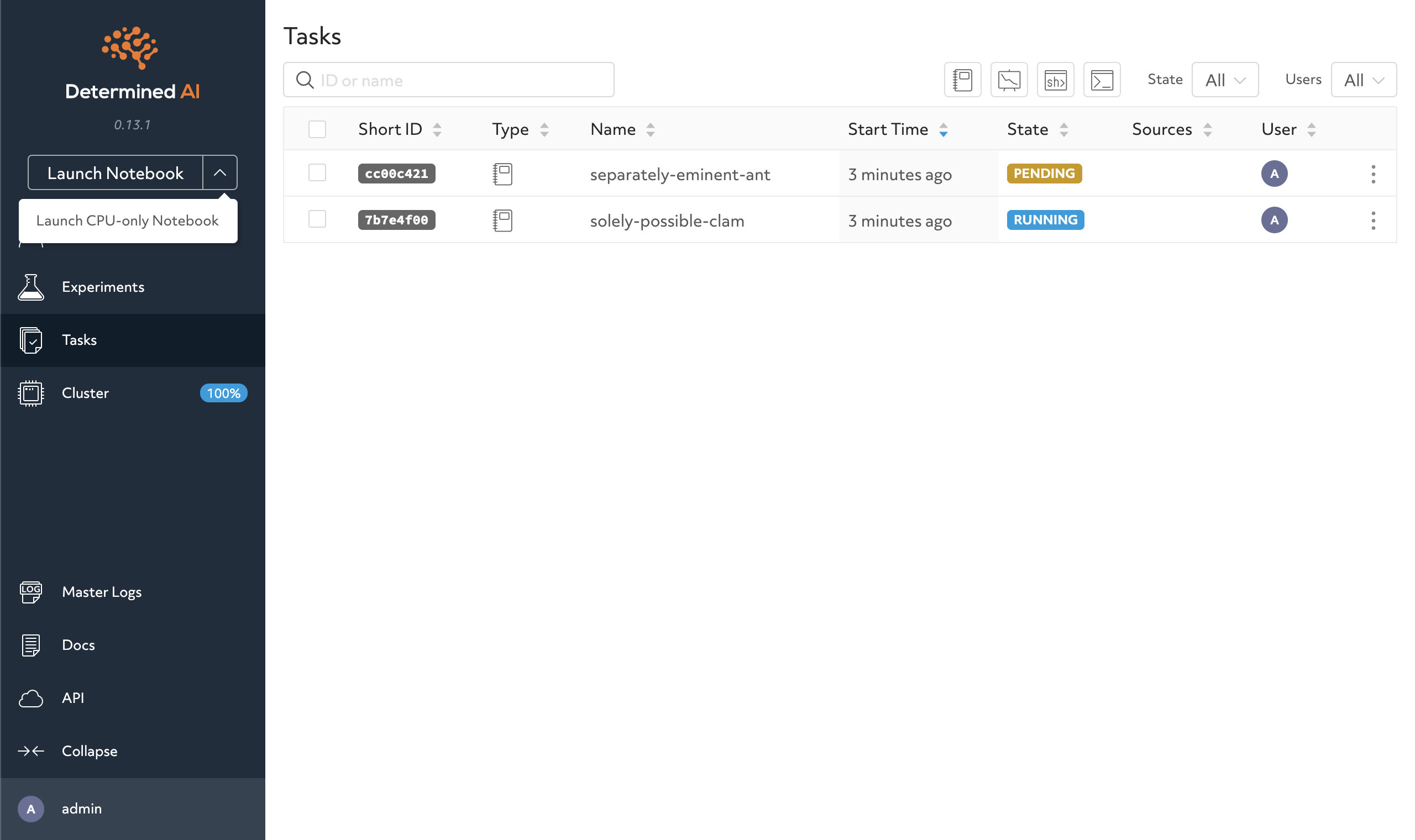

det shell start --config-file test_task.yamlNow you can see your task pending/running on the WebUI dashboard.
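If you launch tasks often, filling in the template and building the launch command can be scripted. The following is a minimal sketch using only the Python standard library; the names render_task_config and launch_command are hypothetical helpers, not part of the determined package, and the defaults are copied from the template in this guide.

```python
from string import Template

# Resource pools and default image as listed in this guide.
RESOURCE_POOLS = ("32c64t_256_3090", "48c96t_512_3090", "64c128t_512_4090")
DEFAULT_IMAGE = (
    "determinedai/environments:cuda-11.3-pytorch-1.10-lightning-1.5-"
    "tf-2.8-deepspeed-0.5.10-gpu-0.18.2"
)

TASK_TEMPLATE = Template("""\
description: $task_name
resources:
  slots: $slots
  resource_pool: $resource_pool
  shm_size: 4G
bind_mounts:
  - host_path: /workspace/$user_name/
    container_path: /run/determined/workdir/home/
  - host_path: /workspace/$user_name/.cache/
    container_path: /run/determined/workdir/.cache/
  - host_path: /datasets/
    container_path: /run/determined/workdir/data/
  - host_path: /SSD/
    container_path: /run/determined/workdir/ssd_data/
environment:
  image: $image
""")


def render_task_config(task_name, user_name, slots=1,
                       resource_pool=RESOURCE_POOLS[0], image=DEFAULT_IMAGE):
    """Return the task configuration as YAML text."""
    if resource_pool not in RESOURCE_POOLS:
        raise ValueError(f"unknown resource pool: {resource_pool}")
    return TASK_TEMPLATE.substitute(
        task_name=task_name, user_name=user_name, slots=slots,
        resource_pool=resource_pool, image=image,
    )


def launch_command(kind, config_file="test_task.yaml"):
    """Build the argv for `det notebook start` or `det shell start`."""
    if kind not in ("notebook", "shell"):
        raise ValueError("kind must be 'notebook' or 'shell'")
    return ["det", kind, "start", "--config-file", config_file]
```

To use it, write the rendered text to test_task.yaml (for example with pathlib.Path("test_task.yaml").write_text(...)) and run the command returned by launch_command, either by hand or through subprocess.run.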

You can manage the tasks on the WebUI.

You are encouraged to check out more operations of Determined AI in the API docs, e.g.,

- det task
- det shell open [task id]
- det shell kill [task id]

You can use Visual Studio Code or PyCharm to connect to a shell task.

You also need to install and use the determined CLI on your local computer in order to get the SSH IdentityFile.

- First, you need to install the Remote-SSH plugin.

- Check the UUID of your tasks:

det shell list

- Get the ssh command for the task with the UUID above (it also generates an SSH IdentityFile on your PC):

det shell show_ssh_command <UUID>

The results should follow this pattern:

ssh -o "ProxyCommand=<YOUR PROXY COMMAND>" \
    -o StrictHostKeyChecking=no \
    -tt \
    -o IdentitiesOnly=yes \
    -i <YOUR KEY PATH> \
    -p <YOUR PORT NUMBER> \
    <YOUR USERNAME>@<YOUR SHELL HOST NAME (UUID)>

- Add the shell task as a new SSH host:

Click the SSH button in the bottom-left corner:

Select Connect to Host -> + Add New SSH Host:

Paste the SSH command generated by det shell show_ssh_command above into the dialog window:

Then choose your ssh configuration file to update:

You can continue to edit your ssh configuration file, e.g. add a custom name:
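The mapping from the ssh command to an entry in your ssh configuration file can also be sketched in code. This is an illustrative approximation, not what VS Code or Determined actually runs: the function name ssh_command_to_config and its alias parameter are hypothetical, and only the options that appear in the pattern above (-o, -i, -p, -tt) are handled.

```python
import shlex


def ssh_command_to_config(ssh_command, alias=None):
    """Convert an `ssh ...` command line into an ssh_config Host entry."""
    # Join lines that were split with a trailing backslash before tokenizing.
    args = shlex.split(ssh_command.replace("\\\n", " "))
    if not args or args[0] != "ssh":
        raise ValueError("expected a command starting with 'ssh'")

    options = []   # (keyword, value) pairs for the Host block
    target = None  # the user@host argument
    i = 1
    while i < len(args):
        arg = args[i]
        if arg == "-o":                      # -o Key=Value
            key, _, value = args[i + 1].partition("=")
            options.append((key, value))
            i += 2
        elif arg == "-i":                    # identity file
            options.append(("IdentityFile", args[i + 1]))
            i += 2
        elif arg == "-p":                    # port
            options.append(("Port", args[i + 1]))
            i += 2
        elif arg == "-tt":                   # force TTY allocation
            options.append(("RequestTTY", "force"))
            i += 1
        else:
            target = arg
            i += 1

    if target is None or "@" not in target:
        raise ValueError("expected a user@host argument")
    user, _, host = target.partition("@")

    # The alias becomes the Host name; HostName keeps the task UUID.
    lines = [f"Host {alias or host}", f"    HostName {host}", f"    User {user}"]
    lines += [f"    {key} {value}" for key, value in options]
    return "\n".join(lines) + "\n"
```

Passing alias="TestEnv" produces an entry whose first line is Host TestEnv while HostName stays set to the task UUID, which is one way to give the host a custom name.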

- Check the UUID of your tasks:

det shell list

- Get the new ssh command:

det shell show_ssh_command <UUID>

- Replace the old UUID with the new one (with Ctrl + H):
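The same find-and-replace can be done outside the editor. Below is a minimal sketch, assuming the old UUID appears verbatim in your ssh configuration file; the function names replace_task_uuid and update_ssh_config are hypothetical, and a .bak copy is written before the file is changed.

```python
from pathlib import Path


def replace_task_uuid(config_text, old_uuid, new_uuid):
    """Return config_text with every occurrence of old_uuid replaced."""
    if old_uuid not in config_text:
        raise ValueError("old UUID not found in the SSH configuration")
    return config_text.replace(old_uuid, new_uuid)


def update_ssh_config(path, old_uuid, new_uuid):
    """Rewrite the file at path in place, keeping a backup next to it."""
    path = Path(path)
    original = path.read_text()
    updated = replace_task_uuid(original, old_uuid, new_uuid)
    path.with_name(path.name + ".bak").write_text(original)  # backup copy
    path.write_text(updated)
```

A typical call would be update_ssh_config(Path.home() / ".ssh" / "config", old_uuid, new_uuid), with both UUIDs taken from det shell list.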

- As of the current version, PyCharm lacks support for custom options in SSH commands via the UI. Therefore, you must provide them via an entry in your ssh_config file. You can generate this entry by following the steps in First-time setup of connecting VS Code to a shell task.

- In PyCharm, open Settings/Preferences > Tools > SSH Configurations.

- Select the plus icon to add a new configuration.

- Enter YOUR SHELL HOST NAME (UUID), YOUR PORT NUMBER (fill in 22 here), and YOUR USERNAME in the corresponding fields. (P.S. you can change YOUR SHELL HOST NAME (UUID) to the custom name configured in your SSH config entry, e.g. TestEnv, as shown above.)

- Switch the Authentication type dropdown to OpenSSH config and authentication agent.

- You can hit Test Connection to test it.

- Save the new configuration by clicking OK. Now you can continue to add Python Interpreters with this SSH configuration.

You will need to forward ports from the task container to your personal computer through the SSH tunnel (managed by Determined) when you want to set up services such as TensorBoard in your task container.

Here is an example. First launch a notebook or shell task with the proxy_ports configurations:

description: nerfstudio-test
resources:
  slots: 1
  resource_pool: 32c64t_256_3090
  shm_size: 4G
bind_mounts:
  - host_path: /workspace/<user_name>/
    container_path: /run/determined/workdir/home/
  - host_path: /workspace/<user_name>/.cache/
    container_path: /run/determined/workdir/.cache/
  - host_path: /datasets/
    container_path: /run/determined/workdir/data/
  - host_path: /SSD/
    container_path: /run/determined/workdir/ssd_data/
environment:
  image: harbor.cvgl.lab/library/zlz-nerfstudio:v1.1.3-gsplat_880425c-cu118-ubuntu2204-torch2.1.2_cxx11abi-240725
  proxy_ports:
    - proxy_port: 7007
      proxy_tcp: true

where 7007 is the websocket port used by nerfstudio's visualization, and the bind mount with .cache is used to store PyTorch cache files so that the pretrained weights are not downloaded again and again.

P.S. You can also try out sdfstudio by using harbor.cvgl.lab/library/zlz-sdfstudio:nightly-cuda-11.8-devel-ubuntu22.04-torch-1.14.0a0_44dac51-230720 in environment.image.

Then run a nerfstudio training in the terminal of your notebook or shell task:

ns-train nerfacto --data ~/data/nerfstudio/nerfstudio/poster/

Then launch port forwarding on your personal computer with this command:

python -m determined.cli.tunnel --listener 7007 --auth 10.0.1.66 YOUR_TASK_UUID:7007

P.S. If you are using the VPN, you should use 10.0.1.68 instead of 10.0.1.66.

Remember to change YOUR_TASK_UUID to your task's UUID.

Finally, open the URL printed in the nerfstudio terminal in your browser; in my case it is https://viewer.nerf.studio/versions/23-05-01-0/?websocket_url=ws://localhost:7007

P.S. For TensorBoard, just change the port 7007 to 6006.
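Since the tunnel command differs only in the port, the master address, and the task UUID, it can be assembled by a small helper. This sketch simply reproduces the argument order of the command shown above; the function name tunnel_command and the on_vpn flag are hypothetical, and the same port is used on both the local and the container side, as in the example.

```python
def tunnel_command(task_uuid, port=7007, on_vpn=False):
    """Build the argv for the determined.cli.tunnel port-forwarding command."""
    # Master addresses as given in this guide.
    master = "10.0.1.68" if on_vpn else "10.0.1.66"
    return [
        "python", "-m", "determined.cli.tunnel",
        "--listener", str(port),   # local port to listen on
        "--auth",
        master,
        f"{task_uuid}:{port}",     # task UUID and the port inside the container
    ]
```

For TensorBoard, tunnel_command(task_uuid, port=6006) yields the 6006 variant; join the list with spaces to paste it into a terminal, or pass it to subprocess.run.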

Reference: Exposing custom ports - Determined AI docs

(TODO)

You can check the real-time utilization of the cluster in the Grafana dashboard.