diff --git a/LICENSE b/LICENSE

index 5798ff9..22d8e6f 100644

--- a/LICENSE

+++ b/LICENSE

@@ -1,6 +1,6 @@

MIT License

-Copyright (c) 2025 饰乐

+Copyright (c) 2024 Les Freire

Permission is hereby granted, free of charge, to any person obtaining a copy

of this software and associated documentation files (the "Software"), to deal

diff --git a/README.md b/README.md

new file mode 100644

index 0000000..6d97efe

--- /dev/null

+++ b/README.md

@@ -0,0 +1,71 @@

+

+

+

+

+

+# astrbot_plugin_parser

+

+_✨ Link Parser ✨_

+

+[MIT License](https://opensource.org/licenses/MIT)

+[Python](https://www.python.org/)

+[AstrBot](https://github.com/Soulter/AstrBot)

+[Zhalslar](https://github.com/Zhalslar)

+

+

+

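+## 📖 Introduction

+

+| Platform | Supported message forms | Video | Image set | Audio |

+| ----------- | ------------------------------------------------------- | ----- | --------- | ----- |

+| Bilibili | av ID / BV ID / link / short link / card / mini program | ✅ | ✅ | ✅ |

+| Douyin | Link (share links; desktop links also supported) | ✅ | ✅ | ❌️ |

+| Weibo | Link (posts, videos, show, articles) | ✅ | ✅ | ❌️ |

+| Xiaohongshu | Link (including short links) / card | ✅ | ✅ | ❌️ |

+| Kuaishou | Link (standard links and short links) | ✅ | ✅ | ❌️ |

+| acfun | Link | ✅ | ❌️ | ❌️ |

+| youtube | Link (including short links) | ✅ | ❌️ | ✅ |

+| tiktok | Link | ✅ | ❌️ | ❌️ |

+| twitter | Link | ✅ | ✅ | ❌️ |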

+

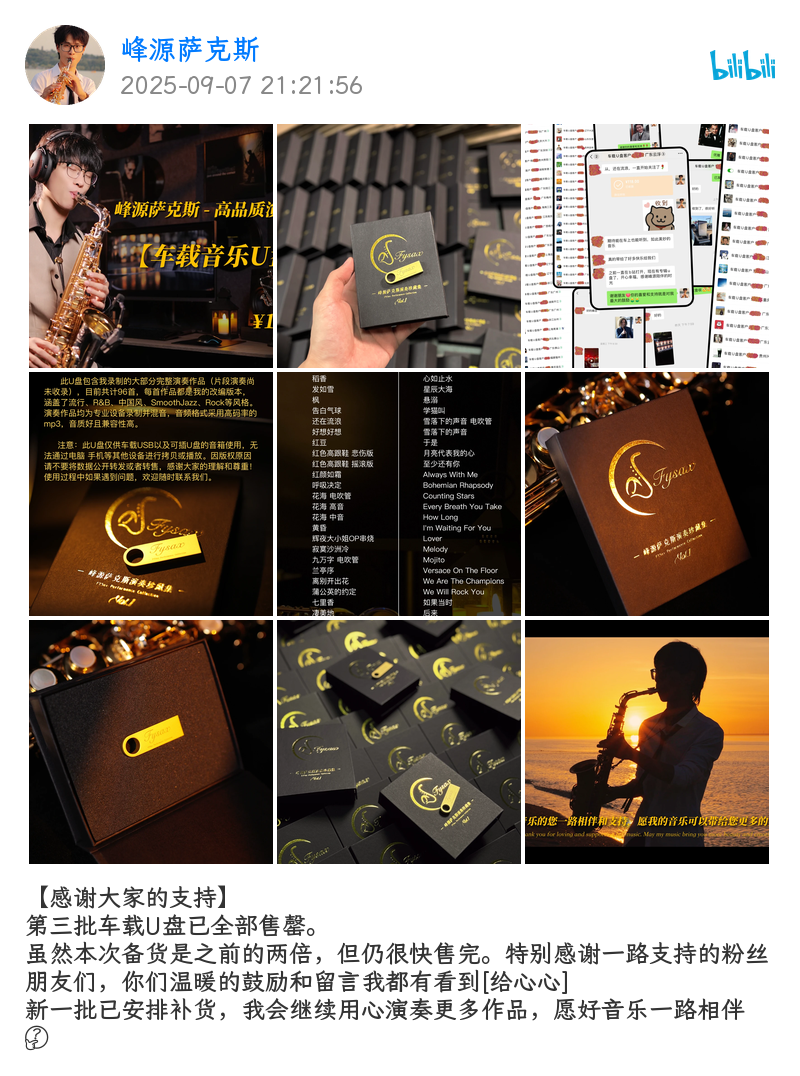

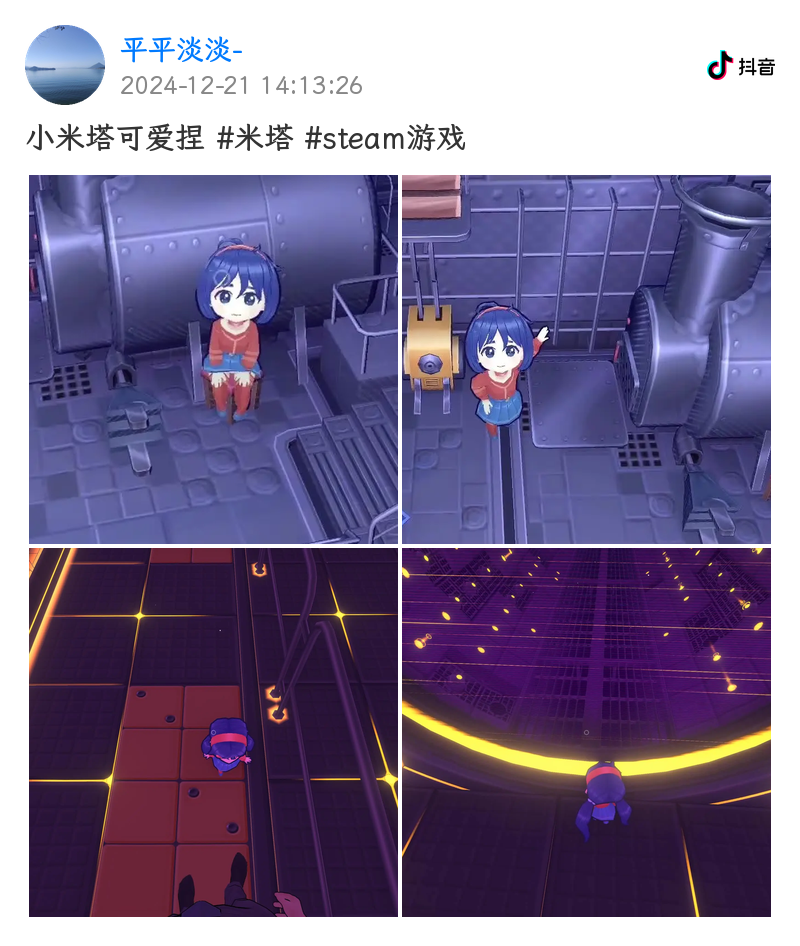

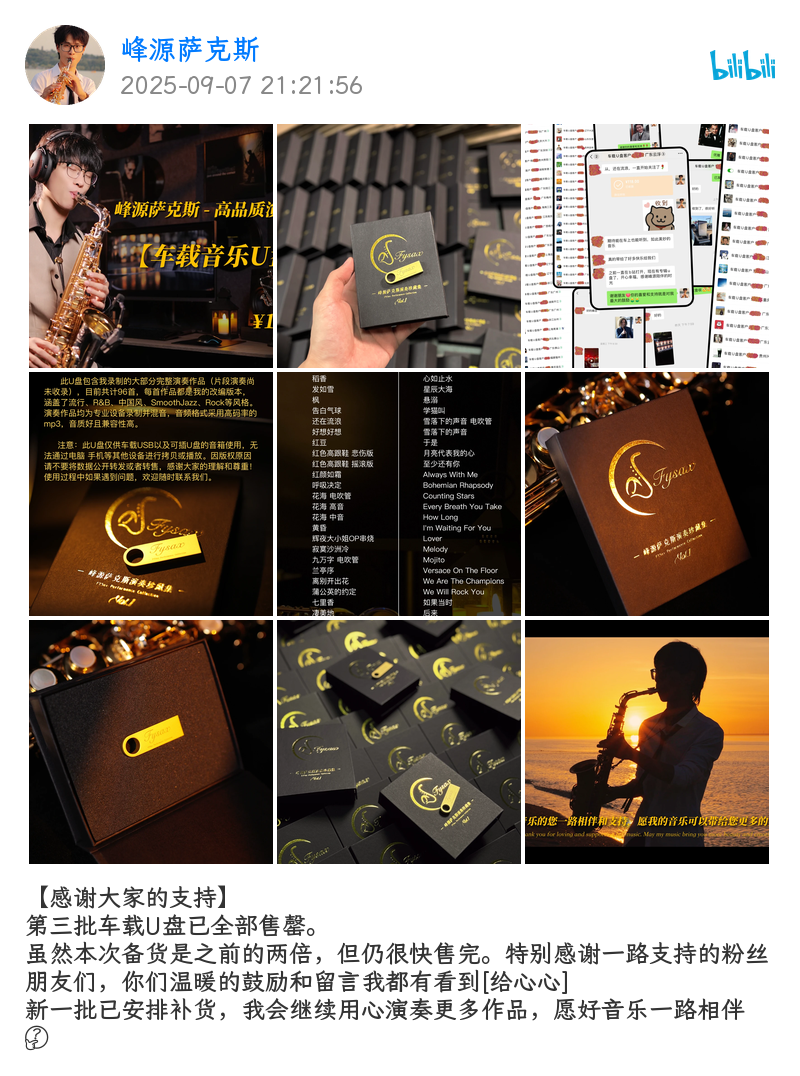

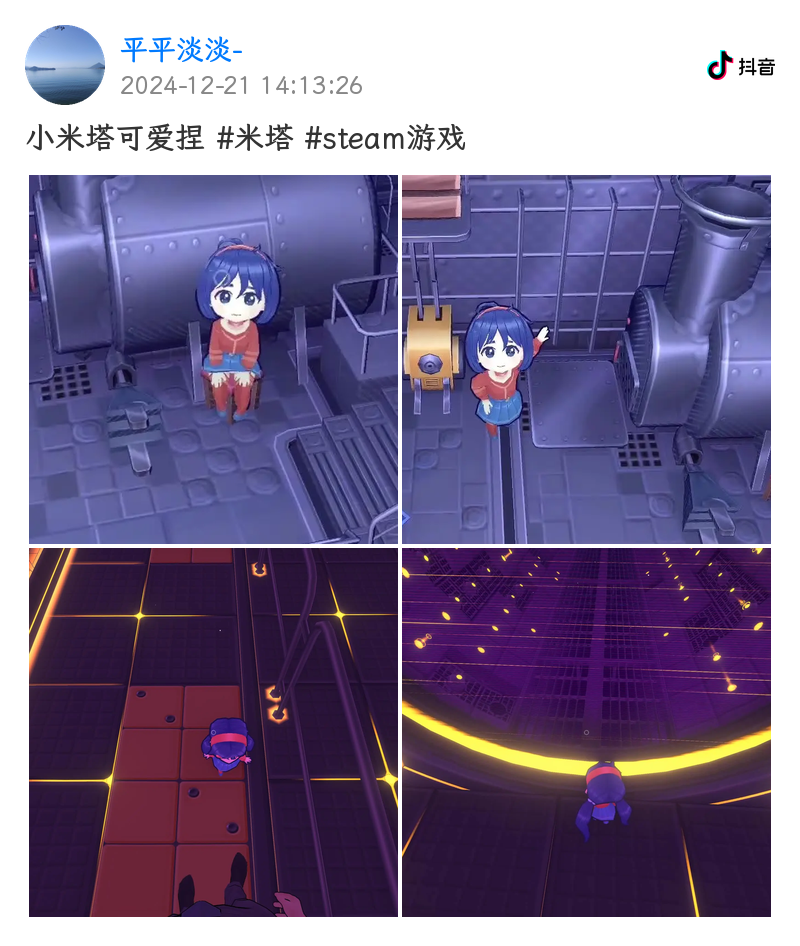

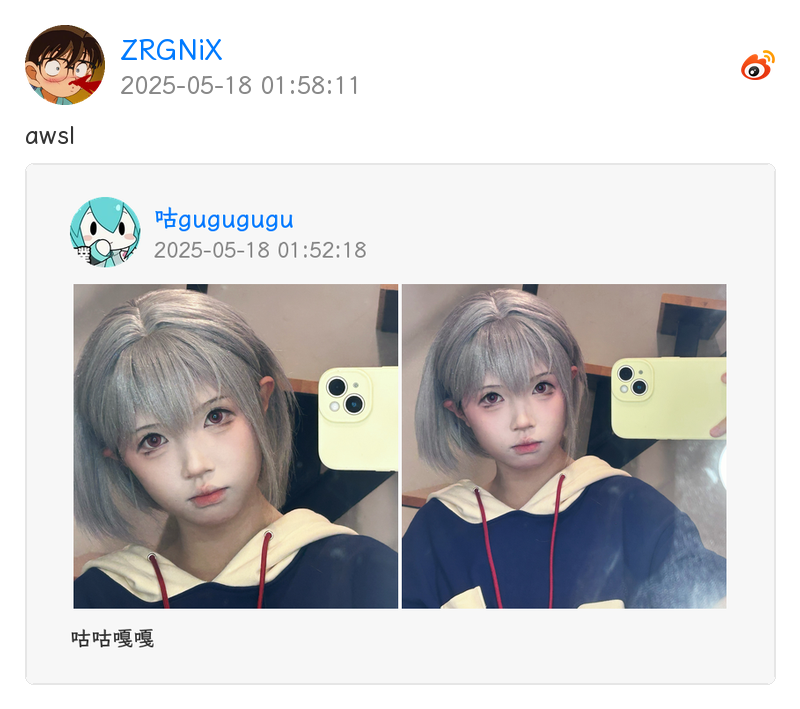

+## 🎨 Screenshots

+

+By default the plugin renders parsed media as a generic card using PIL; the screenshots in this README show the result.

+

+

+

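+## 💿 Installation

+

+Search for astrbot_plugin_parser in the AstrBot plugin marketplace, click Install, and wait for the installation to finish.

+

+## ⚙️ Configuration

+

+View and edit the settings in the AstrBot plugin configuration panel.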

+

+## 🎉 Usage

+

+| Command | Permission | Description |

+| :------: | :-------------------: | :---------------: |

+| 开启解析 | ADMIN | Enable parsing |

+| 关闭解析 | ADMIN | Disable parsing |

+| bm | - | Download Bilibili audio |

+| ym | - | Download YouTube audio |

+| blogin | ADMIN | Scan a QR code to obtain Bilibili credentials |

+

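+## 🧩 Extension

+

+The plugin supports custom parsers: subclass `BaseParser`, set `platform`, and decorate a handler method with `handle`.

+

+For a sample parser, see the [example parser](https://github.com/Zhalslar/astrbot_plugin_parser/blob/main/core/parsers/example.py).

+

+## 🎉 Acknowledgements

+

+The core code of this project comes from [nonebot-plugin-parser](https://github.com/fllesser/nonebot-plugin-parser). Please visit the original repository and give the author a star!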

diff --git a/_conf_schema.json b/_conf_schema.json

new file mode 100644

index 0000000..db148ea

--- /dev/null

+++ b/_conf_schema.json

@@ -0,0 +1,160 @@

+{

+ "disabled_sessions": {

+ "description": "Sessions with parsing disabled",

+ "type": "list",

+ "hint": "Use the commands “开启解析” and “关闭解析” in a session to set that session's parsing state",

+ "default": []

+ },

+ "enable_platforms": {

+ "description": "Platforms with parsing enabled",

+ "type": "list",

+ "hint": "",

+ "options": [

+ "A站",

+ "B站",

+ "微博",

+ "小红书",

+ "抖音",

+ "快手",

+ "NGA",

+ "TikTok",

+ "推特",

+ "油管"

+ ],

+ "default": [

+ "A站",

+ "B站",

+ "微博",

+ "小红书",

+ "抖音",

+ "快手",

+ "NGA",

+ "TikTok",

+ "推特",

+ "油管"

+ ]

+ },

+ "forward_contents": {

+ "description": "Forward media content",

+ "type": "bool",

+ "hint": "Whether to send parsed images/videos/audio as a merged forward message",

+ "default": true

+ },

+ "upload_audio": {

+ "description": "Upload audio files",

+ "type": "bool",

+ "hint": "Whether to upload parsed audio files to the group files",

+ "default": false

+ },

+ "max_size": {

+ "description": "Maximum resource size",

+ "type": "int",

+ "hint": "Maximum size of audio/video allowed for download, in MB",

+ "default": 90

+ },

+ "max_duration": {

+ "description": "Maximum resource duration",

+ "type": "int",

+ "hint": "Maximum duration of audio/video allowed for download, in seconds",

+ "default": 480

+ },

+ "download_timeout": {

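+ "description": "Download request timeout",

+ "type": "int",

+ "hint": "Request timeout for downloading larger files such as video and audio, in seconds. A larger value is recommended because large video and audio files take longer to download",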

+ "default": 280

+ },

+ "common_timeout": {

+ "description": "Common request timeout",

+ "type": "int",

+ "hint": "Timeout for ordinary requests, in seconds",

+ "default": 15

+ },

+ "bili_ck": {

+ "description": "Bilibili Cookies",

+ "type": "text",

+ "hint": "Login cookies used for Bilibili parsing; leave empty to parse without logging in",

+ "default": ""

+ },

+ "bili_video_codecs": {

+ "description": "Bilibili video codec",

+ "type": "string",

+ "hint": "Preferred codec to download. Options: AVC, AV1, HEV",

+ "options": [

+ "AVC",

+ "AV1",

+ "HEV"

+ ],

+ "default": "AVC"

+ },

+ "bili_video_quality": {

+ "description": "Bilibili video resolution",

+ "type": "string",

+ "hint": "Resolution used when downloading Bilibili videos",

+ "options": [

+ "_360P",

+ "_480P",

+ "_720P",

+ "_1080P",

+ "_1080P_PLUS",

+ "_1080P_60",

+ "_4K",

+ "HDR",

+ "DOLBY",

+ "_8K",

+ "AI_REPAIR"

+ ],

+ "default": "_720P"

+ },

+ "ytb_ck": {

+ "description": "YouTube Cookies",

+ "type": "text",

+ "hint": "Login cookies used for YouTube parsing; leave empty to parse without logging in",

+ "default": ""

+ },

+ "proxy": {

+ "description": "Proxy address",

+ "type": "string",

+ "hint": "For example http://127.0.0.1:7890; leave empty for a direct connection. Applies only to youtube and tiktok parsing",

+ "default": ""

+ },

+ "emoji_cdn": {

+ "description": "Pilmoji emoji CDN",

+ "type": "string",

+ "hint": "CDN address used to render emoji; usually no need to change",

+ "default": "https://cdn.jsdelivr.net/npm/emoji-datasource-facebook@14.0.0/img/facebook/64/"

+ },

+ "emoji_style": {

+ "description": "Pilmoji emoji style",

+ "type": "string",

+ "hint": "Options: APPLE, FACEBOOK, GOOGLE, TWITTER",

+ "options": [

+ "APPLE",

+ "FACEBOOK",

+ "GOOGLE",

+ "TWITTER"

+ ],

+ "default": "FACEBOOK"

+ },

+ "clean_cron": {

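+ "description": "Schedule for automatic cache cleanup",

+ "type": "string",

+ "hint": "Defined with a cron expression (minute hour day month weekday). For example, “30 2 * * *” means 2:30 every day. Leave empty to disable automatic cleanup",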

+ "default": "30 2 * * *"

+ },

+ "data_dir": {

+ "description": "Data directory",

+ "type": "string",

+ "invisible": true

+ },

+ "cache_dir": {

+ "description": "Cache directory",

+ "type": "string",

+ "invisible": true

+ },

+ "ytb_cookies_file": {

+ "description": "YouTube cookies file",

+ "type": "string",

+ "invisible": true

+ }

+}

\ No newline at end of file

diff --git a/core/__init__.py b/core/__init__.py

new file mode 100644

index 0000000..e69de29

diff --git a/core/clean.py b/core/clean.py

new file mode 100644

index 0000000..b44627b

--- /dev/null

+++ b/core/clean.py

@@ -0,0 +1,62 @@

+import asyncio

+import zoneinfo

+from pathlib import Path

+

+from apscheduler.schedulers.asyncio import AsyncIOScheduler

+from apscheduler.triggers.cron import CronTrigger

+

+from astrbot.api import logger

+from astrbot.core.config.astrbot_config import AstrBotConfig

+from astrbot.core.star.context import Context

+

+from .utils import safe_unlink

+

+

+class CacheCleaner:

+ """

+ Scheduler wrapper that cleans the plugin cache directory on the configured cron schedule (daily by default).

+ """

+ JOBNAME = "CacheCleaner"

+ def __init__(self, context: Context, config: AstrBotConfig):

+ self.clean_cron = config["clean_cron"]

+ self.cache_dir = Path(config["cache_dir"])

+

+ tz = context.get_config().get("timezone")

+ self.timezone = (

+ zoneinfo.ZoneInfo(tz) if tz else zoneinfo.ZoneInfo("Asia/Shanghai")

+ )

+ self.scheduler = AsyncIOScheduler(timezone=self.timezone)

+ self.scheduler.start()

+

+ self.register_task()

+

+ logger.info(f"{self.JOBNAME} started, schedule: {self.clean_cron}")

+

+ def register_task(self):

+ try:

+ self.trigger = CronTrigger.from_crontab(self.clean_cron)

+ self.scheduler.add_job(

+ func=self._clean_plugin_cache,

+ trigger=self.trigger,

+ name=f"{self.JOBNAME}_scheduler",

+ max_instances=1,

+ )

+ except Exception as e:

+ logger.error(f"[{self.JOBNAME}] Invalid cron expression: {e}")

+

+ async def _clean_plugin_cache(self) -> None:

+ """The actual cleanup logic."""

+ try:

+ files = [f for f in self.cache_dir.iterdir() if f.is_file()]

+ if not files:

+ logger.info("No cache files to clean.")

+ return

+

+ await asyncio.gather(*(safe_unlink(f) for f in files))

+ logger.info(f"Successfully cleaned {len(files)} cache files.")

+ except Exception:

+ logger.exception("Error while cleaning cache files.")

+

+ async def stop(self):

+ self.scheduler.remove_all_jobs()

+ logger.info(f"[{self.JOBNAME}] stopped")
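Review note on `core/clean.py`: the cleanup step can be tried in isolation with a sketch like the one below. It is a minimal illustration, not the plugin's code: `safe_unlink` here is a hypothetical stand-in for the helper imported from `.utils` (whose body is not part of this diff), and `clean_cache` and `demo` are names made up for the example.

```python
import asyncio
import tempfile
from pathlib import Path


async def safe_unlink(path: Path) -> None:
    """Hypothetical stand-in for core.utils.safe_unlink: delete a file, ignoring OS errors."""
    try:
        # Run the blocking unlink in a worker thread so the event loop stays free.
        await asyncio.to_thread(path.unlink, missing_ok=True)
    except OSError:
        pass


async def clean_cache(cache_dir: Path) -> int:
    """Delete every regular file directly inside cache_dir; return how many were targeted."""
    files = [f for f in cache_dir.iterdir() if f.is_file()]
    await asyncio.gather(*(safe_unlink(f) for f in files))
    return len(files)


def demo() -> tuple[int, list[str]]:
    """Build a throwaway cache dir with two files and one subdirectory, then clean it."""
    with tempfile.TemporaryDirectory() as tmp:
        cache = Path(tmp)
        (cache / "a.mp4").write_bytes(b"x")
        (cache / "b.jpg").write_bytes(b"y")
        (cache / "nested").mkdir()
        removed = asyncio.run(clean_cache(cache))
        remaining = sorted(p.name for p in cache.iterdir())
    return removed, remaining
```

As in `_clean_plugin_cache`, only top-level files are removed; subdirectories of the cache directory are left in place.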

diff --git a/core/constants.py b/core/constants.py

new file mode 100644

index 0000000..13574bb

--- /dev/null

+++ b/core/constants.py

@@ -0,0 +1,39 @@

+from enum import Enum

+from typing import Final

+

+COMMON_HEADER: Final[dict[str, str]] = {

+ "User-Agent": (

+ "Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) "

+ "Chrome/55.0.2883.87 UBrowser/6.2.4098.3 Safari/537.36"

+ )

+}

+

+IOS_HEADER: Final[dict[str, str]] = {

+ "User-Agent": (

+ "Mozilla/5.0 (iPhone; CPU iPhone OS 16_6 like Mac OS X) AppleWebKit/605.1.15 (KHTML, like Gecko) "

+ "Version/16.6 Mobile/15E148 Safari/604.1 Edg/132.0.0.0"

+ )

+}

+

+ANDROID_HEADER: Final[dict[str, str]] = {

+ "User-Agent": (

+ "Mozilla/5.0 (Linux; Android 15; SM-G998B) AppleWebKit/537.36 (KHTML, like Gecko) "

+ "Chrome/132.0.0.0 Mobile Safari/537.36 Edg/132.0.0.0"

+ )

+}

+

+

+class PlatformEnum(str, Enum):

+ ACFUN = "acfun"

+ BILIBILI = "bilibili"

+ DOUYIN = "douyin"

+ KUAISHOU = "kuaishou"

+ NGA = "nga"

+ TIKTOK = "tiktok"

+ TWITTER = "twitter"

+ WEIBO = "weibo"

+ XIAOHONGSHU = "xiaohongshu"

+ YOUTUBE = "youtube"

+

+ def __str__(self) -> str:

+ return self.value

diff --git a/core/download.py b/core/download.py

new file mode 100644

index 0000000..269821d

--- /dev/null

+++ b/core/download.py

@@ -0,0 +1,375 @@

+import asyncio

+from asyncio import Task, create_task

+from collections.abc import Callable, Coroutine

+from functools import wraps

+from pathlib import Path

+from typing import Any, ParamSpec, TypeVar

+

+import aiofiles

+import yt_dlp

+from aiohttp import ClientError, ClientSession, ClientTimeout

+from msgspec import Struct, convert

+from tqdm.asyncio import tqdm

+

+from astrbot.api import logger

+from astrbot.core.config.astrbot_config import AstrBotConfig

+

+from .constants import COMMON_HEADER

+from .exception import (

+ DownloadException,

+ DurationLimitException,

+ ParseException,

+ SizeLimitException,

+ ZeroSizeException,

+)

+from .utils import LimitedSizeDict, generate_file_name, merge_av, safe_unlink

+

+P = ParamSpec("P")

+T = TypeVar("T")

+

+

+def auto_task(func: Callable[P, Coroutine[Any, Any, T]]) -> Callable[P, Task[T]]:

+ """Decorator: wrap each call to an async function in a Task while fully preserving type hints"""

+

+ @wraps(func)

+ def wrapper(*args: P.args, **kwargs: P.kwargs) -> Task[T]:

+ coro = func(*args, **kwargs)

+ name = " | ".join(str(arg) for arg in args if isinstance(arg, str))

+ return create_task(coro, name=func.__name__ + " | " + name)

+

+ return wrapper

+

+

+class VideoInfo(Struct):

+ title: str

+ """Title"""

+ channel: str

+ """Channel name"""

+ uploader: str

+ """Uploader ID"""

+ duration: int

+ """Duration in seconds"""

+ timestamp: int

+ """Publish timestamp"""

+ thumbnail: str

+ """Cover image"""

+ description: str

+ """Description"""

+ channel_id: str

+ """Channel ID"""

+

+ @property

+ def author_name(self) -> str:

+ return f"{self.channel}@{self.uploader}"

+

+

+class Downloader:

+ """Downloader supporting yt-dlp and aiohttp streaming downloads"""

+

+ def __init__(self, config: AstrBotConfig):

+ self.config = config

+ self.cache_dir = Path(config["cache_dir"])

+ self.proxy: str | None = self.config["proxy"] or None

+ self.max_duration: int = config["max_duration"]

+ self.max_size = self.config["max_size"]

+ self.headers: dict[str, str] = COMMON_HEADER.copy()

+ # Video info cache

+ self.info_cache: LimitedSizeDict[str, VideoInfo] = LimitedSizeDict()

+ # Client used for streaming downloads

+ self.client = ClientSession(

+ timeout=ClientTimeout(total=config["download_timeout"])

+ )

+ @auto_task

+ async def streamd(

+ self,

+ url: str,

+ *,

+ file_name: str | None = None,

+ ext_headers: dict[str, str] | None = None,

+ ) -> Path:

+ """download file by url with stream

+

+ Args:

+ url (str): url address

+ file_name (str | None): file name. Defaults to generate_file_name.

+ ext_headers (dict[str, str] | None): ext headers. Defaults to None.

+

+ Returns:

+ Path: file path

+

+ Raises:

+ DownloadException: When the download fails or the size check rejects the file

+ """

+

+ if not file_name:

+ file_name = generate_file_name(url)

+ file_path = self.cache_dir / file_name

+ # Return immediately if the file already exists

+ if file_path.exists():

+ return file_path

+

+ headers = {**self.headers, **(ext_headers or {})}

+

+ try:

+ async with self.client.get(

+ url, headers=headers, allow_redirects=True

+ ) as response:

+ if response.status >= 400:

+ raise ClientError(

+ f"HTTP {response.status} {response.reason}"

+ )

+ content_length = response.headers.get("Content-Length")

+ content_length = int(content_length) if content_length else 0

+

+ if content_length == 0:

+ logger.warning(f"Media url: {url} has size 0, download cancelled")

+ raise ZeroSizeException

+ if (file_size := content_length / 1024 / 1024) > self.max_size:

+ logger.warning(

+ f"Media url: {url} size {file_size:.2f} MB exceeds {self.max_size} MB, download cancelled"

+ )

+ raise SizeLimitException

+

+ with self.get_progress_bar(file_name, content_length) as bar:

+ async with aiofiles.open(file_path, "wb") as file:

+ async for chunk in response.content.iter_chunked(1024 * 1024):

+ await file.write(chunk)

+ bar.update(len(chunk))

+

+ except ClientError:

+ await safe_unlink(file_path)

+ logger.exception(f"Download failed | url: {url}, file_path: {file_path}")

+ raise DownloadException("Media download failed")

+ return file_path

+

+ @staticmethod

+ def get_progress_bar(desc: str, total: int | None = None) -> tqdm:

+ """Get a progress bar

+

+ Args:

+ desc (str): description

+ total (int | None): total size. Defaults to None.

+

+ Returns:

+ tqdm: progress bar

+ """

+ return tqdm(

+ total=total,

+ unit="B",

+ unit_scale=True,

+ unit_divisor=1024,

+ dynamic_ncols=True,

+ colour="green",

+ desc=desc,

+ )

+

+ @auto_task

+ async def download_video(

+ self,

+ url: str,

+ *,

+ video_name: str | None = None,

+ ext_headers: dict[str, str] | None = None,

+ use_ytdlp: bool = False,

+ cookiefile: Path | None = None,

+ ) -> Path:

+ """download video file by url with stream

+

+ Args:

+ url (str): url address

+ video_name (str | None): video name. Defaults to get name by parse url.

+ ext_headers (dict[str, str] | None): ext headers. Defaults to None.

+ use_ytdlp (bool): use ytdlp to download video. Defaults to False.

+ cookiefile (Path | None): cookie file path. Defaults to None.

+

+ Returns:

+ Path: video file path

+

+ Raises:

+ DownloadException: When the download fails

+ """

+ if use_ytdlp:

+ return await self._ytdlp_download_video(url, cookiefile)

+

+ if video_name is None:

+ video_name = generate_file_name(url, ".mp4")

+ return await self.streamd(url, file_name=video_name, ext_headers=ext_headers)

+

+ @auto_task

+ async def download_audio(

+ self,

+ url: str,

+ *,

+ audio_name: str | None = None,

+ ext_headers: dict[str, str] | None = None,

+ use_ytdlp: bool = False,

+ cookiefile: Path | None = None,

+ ) -> Path:

+ """download audio file by url with stream

+

+ Args:

+ url (str): url address

+ audio_name (str | None): audio name. Defaults to generate from url.

+ ext_headers (dict[str, str] | None): ext headers. Defaults to None.

+

+ Returns:

+ Path: audio file path

+

+ Raises:

+ DownloadException: When the download fails

+ """

+ if use_ytdlp:

+ return await self._ytdlp_download_audio(url, cookiefile)

+

+ if audio_name is None:

+ audio_name = generate_file_name(url, ".mp3")

+ return await self.streamd(url, file_name=audio_name, ext_headers=ext_headers)

+

+ @auto_task

+ async def download_img(

+ self,

+ url: str,

+ *,

+ img_name: str | None = None,

+ ext_headers: dict[str, str] | None = None,

+ ) -> Path:

+ """download image file by url with stream

+

+ Args:

+ url (str): url

+ img_name (str | None): image name. Defaults to generate from url.

+ ext_headers (dict[str, str] | None): ext headers. Defaults to None.

+

+ Returns:

+ Path: image file path

+

+ Raises:

+ DownloadException: When the download fails

+ """

+ if img_name is None:

+ img_name = generate_file_name(url, ".jpg")

+ return await self.streamd(url, file_name=img_name, ext_headers=ext_headers)

+

+ async def download_imgs_without_raise(

+ self,

+ urls: list[str],

+ *,

+ ext_headers: dict[str, str] | None = None,

+ ) -> list[Path]:

+ """download images without raise

+

+ Args:

+ urls (list[str]): urls

+ ext_headers (dict[str, str] | None): ext headers. Defaults to None.

+

+ Returns:

+ list[Path]: image file paths

+ """

+ paths_or_errs = await asyncio.gather(

+ *[self.download_img(url, ext_headers=ext_headers) for url in urls],

+ return_exceptions=True,

+ )

+ return [p for p in paths_or_errs if isinstance(p, Path)]

+

+ @auto_task

+ async def download_av_and_merge(

+ self,

+ v_url: str,

+ a_url: str,

+ *,

+ output_path: Path,

+ ext_headers: dict[str, str] | None = None,

+ ) -> Path:

+ """download video and audio file by url with stream and merge"""

+ v_path, a_path = await asyncio.gather(

+ self.download_video(v_url, ext_headers=ext_headers),

+ self.download_audio(a_url, ext_headers=ext_headers),

+ )

+ await merge_av(v_path=v_path, a_path=a_path, output_path=output_path)

+ return output_path

+

+ # region -------------------- private: yt-dlp --------------------

+

+ async def ytdlp_extract_info(

+ self, url: str, cookiefile: Path | None = None

+ ) -> VideoInfo:

+ if (info := self.info_cache.get(url)) is not None:

+ return info

+ opts = {

+ "quiet": True,

+ "skip_download": True,

+ "force_generic_extractor": True,

+ "cookiefile": None,

+ }

+ if self.proxy:

+ opts["proxy"] = self.proxy

+ if cookiefile and cookiefile.is_file():

+ opts["cookiefile"] = str(cookiefile)

+ with yt_dlp.YoutubeDL(opts) as ydl:

+ raw = await asyncio.to_thread(ydl.extract_info, url, download=False)

+ if not raw:

+ raise ParseException("Failed to fetch video info")

+ info = convert(raw, VideoInfo)

+ self.info_cache[url] = info

+ return info

+

+ async def _ytdlp_download_video(

+ self, url: str, cookiefile: Path | None = None

+ ) -> Path:

+ info = await self.ytdlp_extract_info(url, cookiefile)

+ if info.duration > self.max_duration:

+ raise DurationLimitException

+

+ video_path = self.cache_dir / generate_file_name(url, ".mp4")

+ if video_path.exists():

+ return video_path

+

+ opts = {

+ "outtmpl": str(video_path),

+ "merge_output_format": "mp4",

+ # "format": f"bv[filesize<={info.duration // 10 + 10}M]+ba/b[filesize<={info.duration // 8 + 10}M]",

+ "format": "best[height<=720]/bestvideo[height<=720]+bestaudio/best",

+ "postprocessors": [

+ {"key": "FFmpegVideoConvertor", "preferedformat": "mp4"}

+ ],

+ "cookiefile": None,

+ }

+ if self.proxy:

+ opts["proxy"] = self.proxy

+ if cookiefile and cookiefile.is_file():

+ opts["cookiefile"] = str(cookiefile)

+

+ with yt_dlp.YoutubeDL(opts) as ydl:

+ await asyncio.to_thread(ydl.download, [url])

+ return video_path

+

+ async def _ytdlp_download_audio(self, url: str, cookiefile: Path | None) -> Path:

+ file_name = generate_file_name(url)

+ audio_path = self.cache_dir / f"{file_name}.flac"

+ if audio_path.exists():

+ return audio_path

+

+ opts = {

+ "outtmpl": str(self.cache_dir / file_name) + ".%(ext)s",

+ "format": "bestaudio/best",

+ "postprocessors": [

+ {

+ "key": "FFmpegExtractAudio",

+ "preferredcodec": "flac",

+ "preferredquality": "0",

+ }

+ ],

+ "cookiefile": None,

+ }

+ if self.proxy:

+ opts["proxy"] = self.proxy

+ if cookiefile and cookiefile.is_file():

+ opts["cookiefile"] = str(cookiefile)

+

+ with yt_dlp.YoutubeDL(opts) as ydl:

+ await asyncio.to_thread(ydl.download, [url])

+ return audio_path

+

+ async def close(self):

+ """Close the network client"""

+ await self.client.close()

diff --git a/core/exception.py b/core/exception.py

new file mode 100644

index 0000000..31a9549

--- /dev/null

+++ b/core/exception.py

@@ -0,0 +1,46 @@

+class ParseException(Exception):

+ """Base exception class"""

+

+ def __init__(self, message: str):

+ super().__init__(message)

+ self.message = message

+

+

+class TipException(ParseException):

+ """Tip exception"""

+

+ pass

+

+

+class DownloadException(ParseException):

+ """Download exception"""

+

+ def __init__(self, message: str | None = None):

+ super().__init__(message or "Media download failed")

+

+

+class DownloadLimitException(DownloadException):

+ """Download limit exceeded exception"""

+

+ pass

+

+

+class SizeLimitException(DownloadLimitException):

+ """Download size limit exceeded exception"""

+

+ def __init__(self):

+ super().__init__("Media size exceeds the configured limit, download cancelled")

+

+

+class DurationLimitException(DownloadLimitException):

+ """Download duration limit exceeded exception"""

+

+ def __init__(self):

+ super().__init__("Media duration exceeds the configured limit, download cancelled")

+

+

+class ZeroSizeException(DownloadException):

+ """Zero-size download exception"""

+

+ def __init__(self):

+ super().__init__("Media size is 0, download cancelled")

diff --git a/core/parsers/__init__.py b/core/parsers/__init__.py

new file mode 100644

index 0000000..cdb3ceb

--- /dev/null

+++ b/core/parsers/__init__.py

@@ -0,0 +1,48 @@

+

+from .acfun import AcfunParser

+from .base import BaseParser, handle

+from .bilibili import BilibiliParser

+from .data import (

+ AudioContent,

+ Author,

+ DynamicContent,

+ GraphicsContent,

+ ImageContent,

+ ParseResult,

+ Platform,

+ VideoContent,

+)

+from .douyin import DouyinParser

+from .kuaishou import KuaiShouParser

+from .nga import NGAParser

+from .tiktok import TikTokParser

+from .twitter import TwitterParser

+from .weibo import WeiBoParser

+from .xiaohongshu import XiaoHongShuParser

+from .youtube import YouTubeParser

+

+__all__ = [

+ # Data models

+ "AudioContent",

+ "Author",

+ "DynamicContent",

+ "GraphicsContent",

+ "ImageContent",

+ "ParseResult",

+ "Platform",

+ "VideoContent",

+ # Core components

+ "BaseParser",

+ "handle",

+ # Platform parsers

+ "AcfunParser",

+ "BilibiliParser",

+ "DouyinParser",

+ "KuaiShouParser",

+ "NGAParser",

+ "TikTokParser",

+ "TwitterParser",

+ "WeiBoParser",

+ "XiaoHongShuParser",

+ "YouTubeParser",

+]

diff --git a/core/parsers/acfun.py b/core/parsers/acfun.py

new file mode 100644

index 0000000..fb0ff82

--- /dev/null

+++ b/core/parsers/acfun.py

@@ -0,0 +1,161 @@

+import asyncio

+import json

+import re

+import time

+from pathlib import Path

+from typing import ClassVar

+

+import aiofiles

+from aiohttp import ClientError

+

+from astrbot.api import logger

+from astrbot.core.config.astrbot_config import AstrBotConfig

+

+from ..download import Downloader

+from ..exception import DownloadException, ParseException

+from ..utils import safe_unlink

+from .base import BaseParser, Platform, PlatformEnum, handle

+

+

+class AcfunParser(BaseParser):

+ # Platform info

+ platform: ClassVar[Platform] = Platform(name=PlatformEnum.ACFUN, display_name="A站")

+

+ def __init__(self, config: AstrBotConfig, downloader: Downloader):

+ super().__init__(config, downloader)

+ self.headers["referer"] = "https://www.acfun.cn/"

+ self.cache_dir = Path(config["cache_dir"])

+ self.max_size = self.config["max_size"]

+

+ @handle("acfun.cn", r"(?:ac=|/ac)(?P<acid>\d+)")

+ async def _parse(self, searched: re.Match[str]):

+ acid = int(searched.group("acid"))

+ url = f"https://www.acfun.cn/v/ac{acid}"

+

+ m3u8_url, title, description, author, upload_time = await self.parse_video_info(url)

+ author = self.create_author(author) if author else None

+

+ # 2024-12-1 -> timestamp

+ try:

+ timestamp = int(time.mktime(time.strptime(upload_time, "%Y-%m-%d")))

+ except ValueError:

+ timestamp = None

+ text = f"Description: {description}"

+

+ # Download the video

+ video_task = asyncio.create_task(self.download_video(m3u8_url, acid))

+

+ return self.result(

+ title=title,

+ text=text,

+ author=author,

+ timestamp=timestamp,

+ contents=[self.create_video_content(video_task)],

+ )

+

+ async def parse_video_info(self, url: str) -> tuple[str, str, str, str, str]:

+ """Parse an acfun link and fetch its details

+

+ Args:

+ url (str): link

+

+ Returns:

+ tuple: (m3u8_url, title, description, author, upload_time)

+ """

+

+ # Append the query parameters

+ url = f"{url}?quickViewId=videoInfo_new&ajaxpipe=1"

+

+ async with self.client.get(url, headers=self.headers) as resp:

+ if resp.status >= 400:

+ raise ClientError(f"HTTP {resp.status}")

+ raw = await resp.text()

+

+ matched = re.search(r"window\.videoInfo =(.*?)</script>", raw)

+ if not matched:

+ raise ParseException("Failed to parse acfun video info")

+ json_str = str(matched.group(1))

+ json_str = json_str.replace('\\\\"', '\\"').replace('\\"', '"')

+ video_info = json.loads(json_str)

+

+ title = video_info.get("title", "")

+ description = video_info.get("description", "")

+ author = video_info.get("user", {}).get("name", "")

+ upload_time = video_info.get("createTime", "")

+

+ ks_play_json = video_info["currentVideoInfo"]["ksPlayJson"]

+ ks_play = json.loads(ks_play_json)

+ representations = ks_play["adaptationSet"][0]["representation"]

+ # [d['url'] for d in representations] is ordered from 4K down to 360p; index 3 selects 720p by default

+ m3u8_url = [d["url"] for d in representations][3]

+

+ return m3u8_url, title, description, author, upload_time

+

+ async def download_video(self, m3u8s_url: str, acid: int) -> Path:

+ """Download an acfun video

+

+ Args:

+ m3u8s_url (str): m3u8 link

+ acid (int): acid

+

+ Returns:

+ Path: the downloaded mp4 file

+ """

+

+ m3u8_full_urls = await self._parse_m3u8(m3u8s_url)

+ video_file = self.cache_dir / f"acfun_{acid}.mp4"

+ if video_file.exists():

+ return video_file

+

+ max_size = self.max_size * 1024 * 1024

+

+ try:

+ async with aiofiles.open(video_file, "wb") as f:

+ with self.downloader.get_progress_bar(video_file.name) as bar:

+ total = 0

+ for url in m3u8_full_urls:

+ async with self.client.get(url, headers=self.headers) as resp:

+ if resp.status >= 400:

+ raise ClientError(f"{resp.status} {resp.reason}")

+ async for chunk in resp.content.iter_chunked(1024 * 1024):

+ await f.write(chunk)

+ total += len(chunk)

+ bar.update(len(chunk))

+ if total > max_size: # truncate at the size limit

+ break

+ if total > max_size:

+ break

+

+ except ClientError:

+ await safe_unlink(video_file)

+ logger.exception("Video download failed")

+ raise DownloadException("Video download failed")

+ return video_file

+

+ async def _parse_m3u8(self, m3u8_url: str):

+ """Parse an m3u8 link

+

+ Args:

+ m3u8_url (str): m3u8 link

+

+ Returns:

+ list[str]: video segment links

+ """

+ async with self.client.get(m3u8_url, headers=self.headers) as resp:

+ if resp.status >= 400:

+ raise ClientError(f"{resp.status} {resp.reason}")

+ m3u8_file = await resp.text()

+ # Split out the .ts segment links

+ raw_pieces = re.split(r"\n#EXTINF:.{8},\n", m3u8_file)

+ # Drop the playlist header

+ m3u8_relative_links = raw_pieces[1:]

+

+ # Fix the tail: strip the trailing end marker

+ patched_tail = m3u8_relative_links[-1].split("\n")[0]

+ m3u8_relative_links[-1] = patched_tail

+

+ # Build full links by prepending the common prefix of the m3u8 URL

+ m3u8_prefix = "/".join(m3u8_url.split("/")[0:-1])

+ m3u8_full_urls = [f"{m3u8_prefix}/{d}" for d in m3u8_relative_links]

+

+ return m3u8_full_urls
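Review note on `core/parsers/acfun.py`: the segment extraction in `_parse_m3u8` can be checked offline with a sketch like the following. The playlist text, the CDN host, and the function name `segment_urls` are made up for the example; only the regex and the prefix logic mirror the diff.

```python
import re

# Hypothetical playlist; every EXTINF duration is exactly 8 characters wide.
SAMPLE_M3U8 = (
    "#EXTM3U\n"
    "#EXT-X-VERSION:3\n"
    "#EXT-X-TARGETDURATION:4\n"
    "#EXTINF:4.000000,\n"
    "seg-00001.ts?sign=abc\n"
    "#EXTINF:4.000000,\n"
    "seg-00002.ts?sign=abc\n"
    "#EXTINF:2.500000,\n"
    "seg-00003.ts?sign=abc\n"
    "#EXT-X-ENDLIST\n"
)


def segment_urls(m3u8_url: str, playlist: str) -> list[str]:
    """Return absolute segment URLs using the same steps as _parse_m3u8."""
    # Split on the "#EXTINF:<8 chars>," lines; piece 0 is the playlist header.
    pieces = re.split(r"\n#EXTINF:.{8},\n", playlist)[1:]
    # The last piece still carries "#EXT-X-ENDLIST"; keep only its first line.
    pieces[-1] = pieces[-1].split("\n")[0]
    # Everything before the final "/" of the playlist URL is the common prefix.
    prefix = "/".join(m3u8_url.split("/")[:-1])
    return [f"{prefix}/{piece}" for piece in pieces]


urls = segment_urls("https://cdn.example.com/video/hls/index.m3u8", SAMPLE_M3U8)
```

The pattern relies on each duration being exactly eight characters wide (for example `4.000000`); a playlist with a duration such as `10.000000` would not be split at that entry.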

diff --git a/core/parsers/base.py b/core/parsers/base.py

new file mode 100644

index 0000000..3a6d9a6

--- /dev/null

+++ b/core/parsers/base.py

@@ -0,0 +1,287 @@

+"""Parser base class definitions"""

+

+from abc import ABC

+from asyncio import Task

+from collections.abc import Callable, Coroutine

+from pathlib import Path

+from re import Match, Pattern, compile

+from typing import TYPE_CHECKING, Any, ClassVar, TypeVar, cast

+

+from aiohttp import ClientError, ClientSession, ClientTimeout, TCPConnector

+from typing_extensions import Unpack

+

+from astrbot.core.config.astrbot_config import AstrBotConfig

+

+from ..constants import ANDROID_HEADER, COMMON_HEADER, IOS_HEADER

+from ..constants import PlatformEnum as PlatformEnum

+from ..download import Downloader

+from ..exception import DownloadException as DownloadException

+from ..exception import DurationLimitException as DurationLimitException

+from ..exception import ParseException as ParseException

+from ..exception import SizeLimitException as SizeLimitException

+from ..exception import TipException as TipException

+from ..exception import ZeroSizeException as ZeroSizeException

+from .data import ParseResult, ParseResultKwargs, Platform

+

+T = TypeVar("T", bound="BaseParser")

+HandlerFunc = Callable[[T, Match[str]], Coroutine[Any, Any, ParseResult]]

+KeyPatterns = list[tuple[str, Pattern[str]]]

+

+_KEY_PATTERNS = "_key_patterns"

+

+

+# Decorator that registers a handler

+def handle(keyword: str, pattern: str):

+ """Decorator that registers a handler"""

+

+ def decorator(func: HandlerFunc[T]) -> HandlerFunc[T]:

+ if not hasattr(func, _KEY_PATTERNS):

+ setattr(func, _KEY_PATTERNS, [])

+

+ key_patterns: KeyPatterns = getattr(func, _KEY_PATTERNS)

+ key_patterns.append((keyword, compile(pattern)))

+

+ return func

+

+ return decorator

+

+

+class BaseParser:

+ """Abstract base class for all platform parsers

+

+ Subclasses must provide:

+ - platform: platform info (name and display name)

+ """

+

+ _registry: ClassVar[list[type["BaseParser"]]] = []

+ """ Stores all registered Parser classes """

+

+ platform: ClassVar[Platform]

+ """ Platform info (name and display name) """

+

+ _session: ClassVar[ClientSession | None] = None

+ """ Global ClientSession object """

+

+ if TYPE_CHECKING:

+ _key_patterns: ClassVar[KeyPatterns]

+ _handlers: ClassVar[dict[str, HandlerFunc]]

+

+ def __init__(

+ self,

+ config: AstrBotConfig,

+ downloader: Downloader,

+ ):

+ self.headers = COMMON_HEADER.copy()

+ self.ios_headers = IOS_HEADER.copy()

+ self.android_headers = ANDROID_HEADER.copy()

+ self.config = config

+ self.downloader = downloader

+ self.client = self.get_session(config["common_timeout"])

+

+ def __init_subclass__(cls, **kwargs):

+ """Automatically register subclasses in _registry"""

+ super().__init_subclass__(**kwargs)

+ if ABC not in cls.__bases__: # skip abstract classes

+ BaseParser._registry.append(cls)

+

+ cls._handlers = {}

+ cls._key_patterns = []

+

+ # Collect every method decorated with handle

+ for attr_name in dir(cls):

+ attr = getattr(cls, attr_name)

+ if callable(attr) and hasattr(attr, _KEY_PATTERNS):

+ key_patterns: KeyPatterns = getattr(attr, _KEY_PATTERNS)

+ handler = cast(HandlerFunc, attr)

+ for keyword, pattern in key_patterns:

+ cls._handlers[keyword] = handler

+ cls._key_patterns.append((keyword, pattern))

+

+ # Sort by keyword length, longest first

+ cls._key_patterns.sort(key=lambda x: -len(x[0]))

+

+ @classmethod

+ def get_all_subclass(cls) -> list[type["BaseParser"]]:

+ """Return all registered Parser classes"""

+ return cls._registry

+

+ @classmethod

+ def get_session(cls, timeout: float = 30) -> ClientSession:

+ """Return the global singleton session, creating it on first use"""

+ if cls._session is None or cls._session.closed:

+ cls._session = ClientSession(

+ connector=TCPConnector(ssl=False),

+ timeout=ClientTimeout(total=timeout),

+ )

+ return cls._session

+

+ @classmethod

+ async def close_session(cls) -> None:

+ """Close the global singleton; call once when the plugin is unloaded"""

+ if cls._session and not cls._session.closed:

+ await cls._session.close()

+ cls._session = None

+

+ async def parse(self, keyword: str, searched: Match[str]) -> ParseResult:

+ """Parse a URL and extract its information

+

+ Args:

+ keyword: keyword

+ searched: regex match object produced by the platform's pattern

+

+ Returns:

+ ParseResult: the parse result

+

+ Raises:

+ ParseException: raised when parsing fails

+ """

+ return await self._handlers[keyword](self, searched)

+

+ async def parse_with_redirect(

+ self,

+ url: str,

+ headers: dict[str, str] | None = None,

+ ) -> ParseResult:

+ """Follow the redirect first, then parse"""

+ redirect_url = await self.get_redirect_url(url, headers=headers or self.headers)

+

+ if redirect_url == url:

+ raise ParseException(f"Cannot redirect URL: {url}")

+

+ keyword, searched = self.search_url(redirect_url)

+ return await self.parse(keyword, searched)

+

+ @classmethod

+ def search_url(cls, url: str) -> tuple[str, Match[str]]:

+ """Search the URL against the registered patterns"""

+ for keyword, pattern in cls._key_patterns:

+ if keyword not in url:

+ continue

+ if searched := pattern.search(url):

+ return keyword, searched

+ raise ParseException(f"No pattern matches {url}")

+

+ @classmethod

+ def result(cls, **kwargs: Unpack[ParseResultKwargs]) -> ParseResult:

+ """Build the parse result"""

+ return ParseResult(platform=cls.platform, **kwargs)

+

+

+ async def get_redirect_url(

+ self,

+ url: str,

+ headers: dict[str, str] | None = None,

+ ) -> str:

+ """Get the redirected URL, following a single redirect"""

+

+ headers = headers or COMMON_HEADER.copy()

+ async with self.client.get(url, headers=headers, allow_redirects=False) as resp:

+ if resp.status >= 400:

+ raise ClientError(f"redirect check {resp.status} {resp.reason}")

+ return resp.headers.get("Location", url)

+

+ async def get_final_url(

+ self,

+ url: str,

+ headers: dict[str, str] | None = None,

+ ) -> str:

+ """Get the redirected URL, following multiple redirects"""

+ headers = headers or COMMON_HEADER.copy()

+ async with self.client.get(

+ url, headers=headers, allow_redirects=True

+ ) as resp:

+ if resp.status >= 400:

+ raise ClientError(f"final url check {resp.status} {resp.reason}")

+ return str(resp.url)

+

+ def create_author(

+ self,

+ name: str,

+ avatar_url: str | None = None,

+ description: str | None = None,

+ ):

+ """Create an author object"""

+ from .data import Author

+

+ avatar_task = None

+ if avatar_url:

+ avatar_task = self.downloader.download_img(

+ avatar_url, ext_headers=self.headers

+ )

+ return Author(name=name, avatar=avatar_task, description=description)

+

+ def create_video_content(

+ self,

+ url_or_task: str | Task[Path],

+ cover_url: str | None = None,

+ duration: float = 0.0,

+ ):

+ """Create video content."""

+ from .data import VideoContent

+

+ cover_task = None

+ if cover_url:

+ cover_task = self.downloader.download_img(

+ cover_url, ext_headers=self.headers

+ )

+ if isinstance(url_or_task, str):

+ url_or_task = self.downloader.download_video(

+ url_or_task, ext_headers=self.headers

+ )

+

+ return VideoContent(url_or_task, cover_task, duration)

+

+ def create_image_contents(

+ self,

+ image_urls: list[str],

+ ):

+ """Create a list of image contents."""

+ from .data import ImageContent

+

+ contents: list[ImageContent] = []

+ for url in image_urls:

+ task = self.downloader.download_img(url, ext_headers=self.headers)

+ contents.append(ImageContent(task))

+ return contents

+

+ def create_dynamic_contents(

+ self,

+ dynamic_urls: list[str],

+ ):

+ """Create a list of dynamic (animated) image contents."""

+ from .data import DynamicContent

+

+ contents: list[DynamicContent] = []

+ for url in dynamic_urls:

+ task = self.downloader.download_video(url, ext_headers=self.headers)

+ contents.append(DynamicContent(task))

+ return contents

+

+ def create_audio_content(

+ self,

+ url_or_task: str | Task[Path],

+ duration: float = 0.0,

+ ):

+ """Create audio content."""

+ from .data import AudioContent

+

+ if isinstance(url_or_task, str):

+ url_or_task = self.downloader.download_audio(

+ url_or_task, ext_headers=self.headers

+ )

+

+ return AudioContent(url_or_task, duration)

+

+ def create_graphics_content(

+ self,

+ image_url: str,

+ text: str | None = None,

+ alt: str | None = None,

+ ):

+ """Create image-with-text content. The image is required and the text is optional; when rendered, the text comes before the image."""

+ from .data import GraphicsContent

+

+ image_task = self.downloader.download_img(image_url, ext_headers=self.headers)

+ return GraphicsContent(image_task, text, alt)

+

+
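The keyword/pattern lookup in `search_url` can be illustrated with a small standalone sketch. The `KEY_PATTERNS` list and the `ValueError` below are stand-ins chosen for this example (the diff uses `cls._key_patterns` and `ParseException`); only the two-step "substring check, then regex" idea is taken from the code above.

```python
import re
from re import Match, Pattern

# Hypothetical registry of (keyword, compiled pattern) pairs, checked in order.
KEY_PATTERNS: list[tuple[str, Pattern[str]]] = [
    ("b23.tv", re.compile(r"b23\.tv/[A-Za-z\d\._?%&+\-=/#]+")),
    ("/BV", re.compile(r"bilibili\.com(?:/video)?/(?P<bvid>BV[0-9a-zA-Z]{10})")),
]


def search_url(url: str) -> tuple[str, Match[str]]:
    """Return the first (keyword, match) pair whose pattern matches the URL."""
    for keyword, pattern in KEY_PATTERNS:
        # Cheap substring test first; the regex only runs when the keyword is present.
        if keyword not in url:
            continue
        if searched := pattern.search(url):
            return keyword, searched
    raise ValueError(f"no pattern matches {url}")


keyword, searched = search_url("https://www.bilibili.com/video/BV1xx411c7mD?p=2")
print(keyword, searched.group("bvid"))
```

The substring test keeps the common "not my platform" case cheap, and returning the `Match` object lets each handler read its own named groups.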

diff --git a/core/parsers/bilibili/__init__.py b/core/parsers/bilibili/__init__.py

new file mode 100644

index 0000000..44c55e0

--- /dev/null

+++ b/core/parsers/bilibili/__init__.py

@@ -0,0 +1,524 @@

+import asyncio

+import json

+from collections.abc import AsyncGenerator

+from pathlib import Path

+from re import Match

+from typing import ClassVar

+

+from bilibili_api import HEADERS, Credential, request_settings, select_client

+from bilibili_api.login_v2 import QrCodeLogin, QrCodeLoginEvents

+from bilibili_api.opus import Opus

+from bilibili_api.video import Video, VideoCodecs, VideoQuality

+from msgspec import convert

+

+from astrbot.api import logger

+from astrbot.core.config.astrbot_config import AstrBotConfig

+

+from ...utils import ck2dict

+from ..base import (

+ BaseParser,

+ Downloader,

+ DownloadException,

+ DurationLimitException,

+ ParseException,

+ PlatformEnum,

+ handle,

+)

+from ..data import ImageContent, MediaContent, Platform

+

+# Select the HTTP client backend

+select_client("curl_cffi")

+# Impersonate a browser; see the curl_cffi docs for valid values of the second argument

+# https://curl-cffi.readthedocs.io/en/latest/impersonate.html

+request_settings.set("impersonate", "chrome131")

+

+

+class BilibiliParser(BaseParser):

+ # Platform info

+ platform: ClassVar[Platform] = Platform(name=PlatformEnum.BILIBILI, display_name="B站")

+

+ def __init__(self, config: AstrBotConfig, downloader: Downloader):

+ super().__init__(config, downloader)

+ self.headers = HEADERS.copy()

+ self._credential: Credential | None = None

+ self.max_duration = config["max_duration"]

+ self.cache_dir = Path(config["cache_dir"])

+

+ self.video_quality = getattr(

+ VideoQuality, config["bili_video_quality"].upper(), VideoQuality._720P

+ )

+ self.codecs = getattr(

+ VideoCodecs, config["bili_video_codecs"].upper(), VideoCodecs.AVC

+ )

+ self.bili_ck = config["bili_ck"]

+ self._cookies_file = Path(config["data_dir"]) / "bilibili_cookies.json"

+

+ @handle("b23.tv", r"b23\.tv/[A-Za-z\d\._?%&+\-=/#]+")

+ @handle("bili2233", r"bili2233\.cn/[A-Za-z\d\._?%&+\-=/#]+")

+ async def _parse_short_link(self, searched: Match[str]):

+ """Parse a short link."""

+ url = f"https://{searched.group(0)}"

+ return await self.parse_with_redirect(url)

+

+ @handle("BV", r"^(?P<bvid>BV[0-9a-zA-Z]{10})(?:\s)?(?P<page_num>\d{1,3})?$")

+ @handle("/BV", r"bilibili\.com(?:/video)?/(?P<bvid>BV[0-9a-zA-Z]{10})(?:\?p=(?P<page_num>\d{1,3}))?")

+ async def _parse_bv(self, searched: Match[str]):

+ """Parse a video referenced by its BV id."""

+ bvid = str(searched.group("bvid"))

+ page_num = int(searched.group("page_num") or 1)

+

+ return await self.parse_video(bvid=bvid, page_num=page_num)

+

+ @handle("av", r"^av(?P<avid>\d{6,})(?:\s)?(?P<page_num>\d{1,3})?$")

+ @handle("/av", r"bilibili\.com(?:/video)?/av(?P<avid>\d{6,})(?:\?p=(?P<page_num>\d{1,3}))?")

+ async def _parse_av(self, searched: Match[str]):

+ """Parse a video referenced by its av id."""

+ avid = int(searched.group("avid"))

+ page_num = int(searched.group("page_num") or 1)

+

+ return await self.parse_video(avid=avid, page_num=page_num)

+

+ @handle("/dynamic/", r"bilibili\.com/dynamic/(?P<dynamic_id>\d+)")

+ @handle("t.bili", r"t\.bilibili\.com/(?P<dynamic_id>\d+)")

+ async def _parse_dynamic(self, searched: Match[str]):

+ """Parse a dynamic (feed post)."""

+ dynamic_id = int(searched.group("dynamic_id"))

+ return await self.parse_dynamic(dynamic_id)

+

+ @handle("live.bili", r"live\.bilibili\.com/(?P<room_id>\d+)")

+ async def _parse_live(self, searched: Match[str]):

+ """Parse a live room."""

+ room_id = int(searched.group("room_id"))

+ return await self.parse_live(room_id)

+

+ @handle("/favlist", r"favlist\?fid=(?P<fav_id>\d+)")

+ async def _parse_favlist(self, searched: Match[str]):

+ """Parse a favorites list."""

+ fav_id = int(searched.group("fav_id"))

+ return await self.parse_favlist(fav_id)

+

+ @handle("/read/", r"bilibili\.com/read/cv(?P<read_id>\d+)")

+ async def _parse_read(self, searched: Match[str]):

+ """Parse a column article."""

+ read_id = int(searched.group("read_id"))

+ return await self.parse_read(read_id)

+

+ @handle("/opus/", r"bilibili\.com/opus/(?P<opus_id>\d+)")

+ async def _parse_opus(self, searched: Match[str]):

+ """Parse an opus (image-and-text post)."""

+ opus_id = int(searched.group("opus_id"))

+ return await self.parse_opus(opus_id)

+

+ async def parse_video(

+ self,

+ *,

+ bvid: str | None = None,

+ avid: int | None = None,

+ page_num: int = 1,

+ ):

+ """Parse video info.

+

+ Args:

+ bvid (str | None): BV id of the video

+ avid (int | None): av id of the video

+ page_num (int): page (part) number, 1-based

+ """

+

+ from .video import AIConclusion, VideoInfo

+

+ video = await self._get_video(bvid=bvid, avid=avid)

+ # Convert to a msgspec struct

+ video_info = convert(await video.get_info(), VideoInfo)

+ # Description

+ text = f"简介: {video_info.desc}" if video_info.desc else None

+ # Uploader (UP)

+ author = self.create_author(video_info.owner.name, video_info.owner.face)

+ # Resolve the requested part of a multi-part video

+ page_info = video_info.extract_info_with_page(page_num)

+

+ # Fetch the AI summary

+ if self._credential:

+ cid = await video.get_cid(page_info.index)

+ ai_conclusion = await video.get_ai_conclusion(cid)

+ ai_conclusion = convert(ai_conclusion, AIConclusion)

+ ai_summary = ai_conclusion.summary

+ else:

+ ai_summary: str = "哔哩哔哩 cookie 未配置或失效, 无法使用 AI 总结"

+

+ url = f"https://bilibili.com/{video_info.bvid}"

+ url += f"?p={page_info.index + 1}" if page_info.index > 0 else ""

+

+ # Video download task

+ async def download_video():

+ output_path = self.cache_dir / f"{video_info.bvid}-{page_num}.mp4"

+ if output_path.exists():

+ return output_path

+ # Check the duration limit before requesting any download URLs

+ if page_info.duration > self.max_duration:

+ raise DurationLimitException

+ v_url, a_url = await self.extract_download_urls(video=video, page_index=page_info.index)

+ if a_url is not None:

+ return await self.downloader.download_av_and_merge(

+ v_url, a_url, output_path=output_path, ext_headers=self.headers

+ )

+ else:

+ return await self.downloader.streamd(

+ v_url, file_name=output_path.name, ext_headers=self.headers

+ )

+

+ video_task = asyncio.create_task(download_video())

+ video_content = self.create_video_content(

+ video_task,

+ page_info.cover,

+ page_info.duration,

+ )

+

+ return self.result(

+ url=url,

+ title=page_info.title,

+ timestamp=page_info.timestamp,

+ text=text,

+ author=author,

+ contents=[video_content],

+ extra={"info": ai_summary},

+ )

+

+ async def parse_dynamic(self, dynamic_id: int):

+ """Parse a dynamic (feed post).

+

+ Args:

+ dynamic_id (int): dynamic id

+ """

+ from bilibili_api.dynamic import Dynamic

+

+ from .dynamic import DynamicItem

+

+ dynamic = Dynamic(dynamic_id, await self.credential)

+

+ # Convert to a struct

+ dynamic_data = convert(await dynamic.get_info(), DynamicItem)

+ dynamic_info = dynamic_data.item

+ # Extract fields through the struct's properties

+ author = self.create_author(dynamic_info.name, dynamic_info.avatar)

+

+ # Download images

+ contents: list[MediaContent] = []

+ for image_url in dynamic_info.image_urls:

+ img_task = self.downloader.download_img(image_url, ext_headers=self.headers)

+ contents.append(ImageContent(img_task))

+

+ return self.result(

+ title=dynamic_info.title,

+ text=dynamic_info.text,

+ timestamp=dynamic_info.timestamp,

+ author=author,

+ contents=contents,

+ )

+

+ async def parse_opus(self, opus_id: int):

+ """Parse an opus (image-and-text post).

+

+ Args:

+ opus_id (int): opus id

+ """

+ opus = Opus(opus_id, await self.credential)

+ return await self._parse_opus_obj(opus)

+

+ async def parse_read_old(self, read_id: int):

+ """Parse a column article (deprecated).

+

+ Args:

+ read_id (int): column article id

+ """

+ from bilibili_api.article import Article

+

+ article = Article(read_id)

+ return await self._parse_opus_obj(await article.turn_to_opus())

+

+ async def _parse_opus_obj(self, bili_opus: Opus):

+ """Parse an opus (image-and-text post) from an Opus object.

+

+ Args:

+ bili_opus (Opus): the Opus object to parse

+

+ Returns:

+ ParseResult: the parse result

+ """

+

+ from .opus import ImageNode, OpusItem, TextNode

+

+ opus_info = await bili_opus.get_info()

+ if not isinstance(opus_info, dict):

+ raise ParseException("获取图文动态信息失败")

+ # Convert to a struct

+ opus_data = convert(opus_info, OpusItem)

+ logger.debug(f"opus_data: {opus_data}")

+ author = self.create_author(*opus_data.name_avatar)

+

+ # Process text and images in order (same logic as parse_read)

+ contents: list[MediaContent] = []

+ current_text = ""

+

+ for node in opus_data.gen_text_img():

+ if isinstance(node, ImageNode):

+ contents.append(self.create_graphics_content(node.url, current_text.strip(), node.alt))

+ current_text = ""

+ elif isinstance(node, TextNode):

+ current_text += node.text

+

+ return self.result(

+ title=opus_data.title,

+ author=author,

+ timestamp=opus_data.timestamp,

+ contents=contents,

+ text=current_text.strip(),

+ )

+

+ async def parse_live(self, room_id: int):

+ """Parse a live room.

+

+ Args:

+ room_id (int): live room id

+

+ Returns:

+ ParseResult: the parse result

+ """

+ from bilibili_api.live import LiveRoom

+

+ from .live import RoomData

+

+ room = LiveRoom(room_display_id=room_id, credential=await self.credential)

+ info_dict = await room.get_room_info()

+

+ room_data = convert(info_dict, RoomData)

+ contents: list[MediaContent] = []

+ # Download the cover

+ if cover := room_data.cover:

+ cover_task = self.downloader.download_img(cover, ext_headers=self.headers)

+ contents.append(ImageContent(cover_task))

+

+ # Download the keyframe

+ if keyframe := room_data.keyframe:

+ keyframe_task = self.downloader.download_img(

+ keyframe, ext_headers=self.headers

+ )

+ contents.append(ImageContent(keyframe_task))

+

+ author = self.create_author(room_data.name, room_data.avatar)

+

+ url = f"https://www.bilibili.com/blackboard/live/live-activity-player.html?enterTheRoom=0&cid={room_id}"

+ return self.result(

+ url=url,

+ title=room_data.title,

+ text=room_data.detail,

+ contents=contents,

+ author=author,

+ )

+

+ async def parse_read(self, read_id: int):

+ """Parse a column article.

+

+ Args:

+ read_id (int): column article id

+

+ Returns:

+ ParseResult: the parse result

+ """

+ from bilibili_api.article import Article

+

+ from .article import ArticleInfo, ImageNode, TextNode

+

+ ar = Article(read_id)

+ # Load the article content

+ await ar.fetch_content()

+ data = ar.json()

+ article_info = convert(data, ArticleInfo)

+ logger.debug(f"article_info: {article_info}")

+

+ contents: list[MediaContent] = []

+ current_text = ""

+ for child in article_info.gen_text_img():

+ if isinstance(child, ImageNode):

+ contents.append(self.create_graphics_content(child.url, current_text.strip(), child.alt))

+ current_text = ""

+ elif isinstance(child, TextNode):

+ current_text += child.text

+

+ author = self.create_author(*article_info.author_info)

+

+ return self.result(

+ title=article_info.title,

+ timestamp=article_info.timestamp,

+ text=current_text.strip(),

+ author=author,

+ contents=contents,

+ )

+

+ async def parse_favlist(self, fav_id: int):

+ """Parse a favorites list.

+

+ Args:

+ fav_id (int): favorites list id

+

+ Returns:

+ ParseResult: the parse result, with one GraphicsContent per favorited item

+ """

+ from bilibili_api.favorite_list import get_video_favorite_list_content

+

+ from .favlist import FavData

+

+ # Only the first page is fetched (20 items)

+ fav_dict = await get_video_favorite_list_content(fav_id)

+

+ if fav_dict["medias"] is None:

+ raise ParseException("收藏夹内容为空, 或被风控")

+

+ favdata = convert(fav_dict, FavData)

+

+ return self.result(

+ title=favdata.title,

+ timestamp=favdata.timestamp,

+ author=self.create_author(favdata.info.upper.name, favdata.info.upper.face),

+ contents=[self.create_graphics_content(fav.cover, fav.desc) for fav in favdata.medias],

+ )

+

+ async def _get_video(self, *, bvid: str | None = None, avid: int | None = None) -> Video:

+ """Build a Video object from an av id or a BV id.

+

+ Args:

+ bvid (str | None): BV id of the video

+ avid (int | None): av id of the video

+ """

+ if avid:

+ return Video(aid=avid, credential=await self.credential)

+ elif bvid:

+ return Video(bvid=bvid, credential=await self.credential)

+ else:

+ raise ParseException("avid 和 bvid 至少指定一项")

+

+ async def extract_download_urls(

+ self,

+ video: Video | None = None,

+ *,

+ bvid: str | None = None,

+ avid: int | None = None,

+ page_index: int = 0,

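+ ) -> tuple[str, str | None]:

+ """Resolve the download URLs of a video.

+

+ Args:

+ video (Video | None): an existing Video object; built from bvid/avid when omitted

+ bvid (str | None): BV id of the video

+ avid (int | None): av id of the video

+ page_index (int): page index = page number - 1

+ """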

+

+ from bilibili_api.video import (

+ AudioStreamDownloadURL,

+ VideoDownloadURLDataDetecter,

+ VideoStreamDownloadURL,

+ )

+

+ if video is None:

+ video = await self._get_video(bvid=bvid, avid=avid)

+

+ # Fetch the download data

+ download_url_data = await video.get_download_url(page_index=page_index)

+ detecter = VideoDownloadURLDataDetecter(download_url_data)

+ streams = detecter.detect_best_streams(

+ video_max_quality=self.video_quality,

+ codecs=[self.codecs],

+ no_dolby_video=True,

+ no_hdr=True,

+ )

+ video_stream = streams[0]

+ if not isinstance(video_stream, VideoStreamDownloadURL):

+ raise DownloadException("未找到可下载的视频流")

+ logger.debug(f"视频流质量: {video_stream.video_quality.name}, 编码: {video_stream.video_codecs}")

+

+ audio_stream = streams[1]

+ if not isinstance(audio_stream, AudioStreamDownloadURL):

+ return video_stream.url, None

+ logger.debug(f"音频流质量: {audio_stream.audio_quality.name}")

+ return video_stream.url, audio_stream.url

+

+ def _save_credential(self):

+ """Persist the Bilibili login credential."""

+ if self._credential is None:

+ return

+

+ self._cookies_file.write_text(json.dumps(self._credential.get_cookies()))

+

+ def _load_credential(self):

+ """Load the Bilibili login credential from file."""

+ if not self._cookies_file.exists():

+ return

+

+ self._credential = Credential.from_cookies(json.loads(self._cookies_file.read_text()))

+

+ async def login_with_qrcode(self) -> bytes:

+ """Obtain a Bilibili login credential through QR code login."""

+ self._qr_login = QrCodeLogin()

+ await self._qr_login.generate_qrcode()

+

+ qr_pic = self._qr_login.get_qrcode_picture()

+ return qr_pic.content

+

+ async def check_qr_state(self) -> AsyncGenerator[str, None]:

+ """Poll the QR code login state."""

+ scan_tip_pending = True

+

+ for _ in range(30):

+ state = await self._qr_login.check_state()

+ match state:

+ case QrCodeLoginEvents.DONE:

+ yield "登录成功"

+ self._credential = self._qr_login.get_credential()

+ self._save_credential()

+ break

+ case QrCodeLoginEvents.CONF:

+ if scan_tip_pending:

+ yield "二维码已扫描, 请确认登录"

+ scan_tip_pending = False

+ case QrCodeLoginEvents.TIMEOUT:

+ yield "二维码过期, 请重新生成"

+ break

+ await asyncio.sleep(2)

+ else:

+ yield "二维码登录超时, 请重新生成"

+

+ async def _init_credential(self):

+ """Initialize the Bilibili login credential."""

+ if not self.bili_ck:

+ self._load_credential()

+ return

+

+ credential = Credential.from_cookies(ck2dict(self.bili_ck))

+ if await credential.check_valid():

+ logger.info(f"`parser_bili_ck` 有效, 保存到 {self._cookies_file}")

+ self._credential = credential

+ self._save_credential()

+ else:

+ logger.info(f"`parser_bili_ck` 已过期, 尝试从 {self._cookies_file} 加载")

+ self._load_credential()

+

+ @property

+ async def credential(self) -> Credential | None:

+ """The Bilibili login credential."""

+

+ if self._credential is None:

+ await self._init_credential()

+ return self._credential

+

+ if not await self._credential.check_valid():

+ logger.warning("哔哩哔哩凭证已过期, 请重新配置")

+ return None

+

+ if await self._credential.check_refresh():

+ logger.info("哔哩哔哩凭证需要刷新")

+ if self._credential.has_ac_time_value() and self._credential.has_bili_jct():

+ await self._credential.refresh()

+ logger.info(f"哔哩哔哩凭证刷新成功, 保存到 {self._cookies_file}")

+ self._save_credential()

+ else:

+ logger.warning("哔哩哔哩凭证刷新需要包含 `bili_jct`, `ac_time_value` 项")

+

+ return self._credential
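The polling loop in `check_qr_state` relies on Python's `for ... else` inside an async generator: the `else` branch runs only if the loop finishes without a `break`. A minimal sketch of that control flow, assuming a hypothetical `State` enum and a scripted list of states in place of the real `QrCodeLogin.check_state()` call:

```python
import asyncio
from collections.abc import AsyncGenerator, Iterable
from enum import Enum, auto


class State(Enum):
    """Hypothetical stand-in for the QR login events used in the diff."""
    SCAN = auto()
    CONF = auto()
    DONE = auto()
    TIMEOUT = auto()


async def poll_login(
    states: Iterable[State], max_polls: int = 30, interval: float = 0.0
) -> AsyncGenerator[str, None]:
    """Yield status messages while polling; stop on DONE or TIMEOUT."""
    confirm_tip_pending = True
    it = iter(states)
    for _ in range(max_polls):
        state = next(it, State.SCAN)  # pretend nothing happened once the script runs out
        if state is State.DONE:
            yield "login succeeded"
            break
        if state is State.CONF and confirm_tip_pending:
            yield "scanned, please confirm"
            confirm_tip_pending = False  # emit the confirmation tip only once
        elif state is State.TIMEOUT:
            yield "QR code expired"
            break
        await asyncio.sleep(interval)
    else:
        # Reached only when the loop ran max_polls times without a break.
        yield "polling timed out"


async def collect(states: Iterable[State], **kwargs) -> list[str]:
    return [message async for message in poll_login(states, **kwargs)]


print(asyncio.run(collect([State.SCAN, State.CONF, State.CONF, State.DONE])))
```

With 30 polls at a 2 second interval, as in the diff, the generator gives up after roughly one minute.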

diff --git a/core/parsers/bilibili/article.py b/core/parsers/bilibili/article.py

new file mode 100644

index 0000000..3126763

--- /dev/null

+++ b/core/parsers/bilibili/article.py

@@ -0,0 +1,118 @@

+"""Bilibili column article parser"""

+

+from collections.abc import Generator

+from typing import Any

+

+from msgspec import Struct

+

+

+class TextNode(Struct):

+ """Text node"""

+

+ text: str

+

+

+class ImageNode(Struct):

+ """Image node"""

+

+ url: str

+ alt: str | None = None

+

+

+class Author(Struct):

+ """Author info"""

+

+ mid: int

+ name: str

+ face: str

+ fans: int

+ level: int

+

+

+class Stats(Struct):

+ """Statistics"""

+

+ view: int

+ favorite: int

+ like: int

+ reply: int

+ share: int

+ coin: int

+

+

+class Meta(Struct):

+ """Article metadata"""

+

+ id: int

+ title: str

+ summary: str

+ publish_time: int

+ author: Author

+ stats: Stats

+ tags: list[dict[str, Any]]

+ words: int

+

+

+class ArticleInfo(Struct):

+ """Article info"""

+

+ type: str

+ meta: Meta

+ children: list[dict[str, Any]]

+

+ def gen_text_img(self) -> Generator[TextNode | ImageNode, None, None]:

+ """Yield text and image nodes in document order."""

+ for child in self.children:

+ if child.get("type") == "ParagraphNode":

+ # Paragraph node: collect all of its text

+ text_content = self._extract_text_from_children(child.get("children", []))

+ text_content = text_content.strip()

+ if text_content:

+ yield TextNode(text="\n\n" + text_content)

+ elif child.get("type") == "ImageNode":

+ # Image node

+ yield ImageNode(url=child.get("url", ""), alt=child.get("alt"))

+ elif child.get("type") == "VideoCardNode":

+ # Video card node (rendered as a text description)

+ yield TextNode(text=f"\n [视频卡片: {child.get('aid', 0)}]")

+

+ def _extract_text_from_children(self, children: list[dict[str, Any]]) -> str:

+ """Extract the text from a list of child nodes."""

+ text_content = ""

+ for child in children:

+ if child.get("type") == "TextNode":

+ text_content += child.get("text", "")

+ elif child.get("type") in ["BoldNode", "FontSizeNode", "ColorNode"]:

+ # Recurse into nested nodes

+ text_content += self._extract_text_from_children(child.get("children", []))

+ return text_content

+

+ @property

+ def author_info(self) -> tuple[str, str]:

+ """Author name and avatar URL."""

+ return self.meta.author.name, self.meta.author.face

+

+ @property

+ def title(self) -> str:

+ """Title."""

+ return self.meta.title

+

+ @property

+ def timestamp(self) -> int:

+ """Publication timestamp."""

+ return self.meta.publish_time

+

+ @property

+ def summary(self) -> str:

+ """Summary."""

+ return self.meta.summary

+

+ @property

+ def stats(self) -> Stats:

+ """Statistics."""

+ return self.meta.stats

+

+ @property

+ def tags(self) -> list[str]:

+ """List of tag names."""

+ return [tag.get("name", "") for tag in self.meta.tags]
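Both `parse_read` and `_parse_opus_obj` consume the nodes from `gen_text_img` the same way: text accumulates until an image arrives, the image takes that text as its caption, and whatever text remains at the end becomes the result's body text. A standalone sketch of that grouping, using plain dataclasses instead of the msgspec structs and a hypothetical `group_nodes` helper:

```python
from dataclasses import dataclass
from typing import Optional, Union


@dataclass
class TextNode:
    text: str


@dataclass
class ImageNode:
    url: str
    alt: Optional[str] = None


def group_nodes(
    nodes: list[Union[TextNode, ImageNode]],
) -> tuple[list[tuple[str, str]], str]:
    """Return ([(image_url, preceding_text), ...], trailing_text)."""
    pairs: list[tuple[str, str]] = []
    current_text = ""
    for node in nodes:
        if isinstance(node, ImageNode):
            # The image takes all text accumulated since the previous image.
            pairs.append((node.url, current_text.strip()))
            current_text = ""
        elif isinstance(node, TextNode):
            current_text += node.text
    return pairs, current_text.strip()


nodes = [
    TextNode("\n\nintro"),
    ImageNode("a.png"),
    TextNode("\n\nmiddle"),
    TextNode("\n\nmore"),
    ImageNode("b.png"),
    TextNode("\n\noutro"),
]
pairs, trailing = group_nodes(nodes)
print(pairs, repr(trailing))
```

This matches the rendering order documented on `create_graphics_content`: text first, image after it.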

diff --git a/core/parsers/bilibili/common.py b/core/parsers/bilibili/common.py

new file mode 100644

index 0000000..3ab328b

--- /dev/null

+++ b/core/parsers/bilibili/common.py

@@ -0,0 +1,10 @@

+from msgspec import Struct

+

+

+class Upper(Struct):

+ mid: int

+ """User ID"""

+ name: str

+ """Author name"""

+ face: str

+ """Avatar URL"""

diff --git a/core/parsers/bilibili/dynamic.py b/core/parsers/bilibili/dynamic.py

new file mode 100644

index 0000000..40e2eef

--- /dev/null

+++ b/core/parsers/bilibili/dynamic.py

@@ -0,0 +1,197 @@

+from typing import Any

+

+from msgspec import Struct, convert

+

+

+class AuthorInfo(Struct):

+ """Author info"""

+

+ name: str

+ face: str

+ mid: int

+ pub_time: str

+ pub_ts: int

+ # jump_url: str

+ # following: bool = False

+ # official_verify: dict[str, Any] | None = None

+ # vip: dict[str, Any] | None = None

+ # pendant: dict[str, Any] | None = None

+

+

+class VideoArchive(Struct):

+ """Video info"""

+

+ aid: str

+ bvid: str

+ title: str

+ desc: str

+ cover: str

+ # duration_text: str

+ # jump_url: str

+ # stat: dict[str, str]

+ # badge: dict[str, Any] | None = None

+

+

+class OpusImage(Struct):

+ """Image info of an opus (image-and-text post)"""

+

+ url: str

+ # width: int

+ # height: int

+ # size: float

+ # aigc: dict[str, Any] | None = None

+ # live_url: str | None = None

+

+

+class OpusSummary(Struct):

+ """Opus summary"""

+

+ text: str

+ # rich_text_nodes: list[dict[str, Any]]

+

+

+class OpusContent(Struct):

+ """Opus content"""

+

+ jump_url: str

+ pics: list[OpusImage]

+ summary: OpusSummary

+ title: str | None = None

+ # fold_action: list[str] | None = None

+

+

+class DynamicMajor(Struct):

+ """Major (main) content of a dynamic"""

+

+ type: str

+ archive: VideoArchive | None = None

+ opus: OpusContent | None = None

+

+ @property

+ def title(self) -> str | None:

+ """Title."""

+ if self.type == "MAJOR_TYPE_ARCHIVE" and self.archive:

+ return self.archive.title

+ return None

+

+ @property

+ def text(self) -> str | None:

+ """Text content."""

+ if self.type == "MAJOR_TYPE_ARCHIVE" and self.archive:

+ return self.archive.desc

+ elif self.type == "MAJOR_TYPE_OPUS" and self.opus:

+ return self.opus.summary.text

+ return None

+

+ @property

+ def image_urls(self) -> list[str]:

+ """List of image URLs."""

+ if self.type == "MAJOR_TYPE_OPUS" and self.opus:

+ return [pic.url for pic in self.opus.pics]

+ elif self.type == "MAJOR_TYPE_ARCHIVE" and self.archive and self.archive.cover:

+ return [self.archive.cover]

+ return []

+

+ @property

+ def cover_url(self) -> str | None:

+ """Cover URL."""

+ if self.type == "MAJOR_TYPE_ARCHIVE" and self.archive:

+ return self.archive.cover

+ return None

+

+

+class DynamicModule(Struct):

+ """Dynamic modules"""

+

+ module_author: AuthorInfo

+ module_dynamic: dict[str, Any] | None = None

+ module_stat: dict[str, Any] | None = None

+

+ @property

+ def author_name(self) -> str:

+ """Author name."""

+ return self.module_author.name

+

+ @property

+ def author_face(self) -> str:

+ """Author avatar URL."""

+ return self.module_author.face

+

+ @property

+ def pub_ts(self) -> int:

+ """Publication timestamp."""

+ return self.module_author.pub_ts

+

+ @property

+ def major_info(self) -> dict[str, Any] | None:

+ """Raw major (main) content, if present."""

+ if self.module_dynamic:

+ return self.module_dynamic.get("major")

+ return None

+

+

+class DynamicInfo(Struct):

+ """Dynamic info"""

+

+ id_str: str

+ type: str

+ visible: bool

+ modules: DynamicModule

+ basic: dict[str, Any] | None = None

+

+ @property

+ def name(self) -> str:

+ """Author name."""

+ return self.modules.author_name

+

+ @property

+ def avatar(self) -> str:

+ """Author avatar URL."""

+ return self.modules.author_face

+

+ @property

+ def timestamp(self) -> int:

+ """Publication timestamp."""

+ return self.modules.pub_ts

+

+ @property

+ def title(self) -> str | None:

+ """Title."""

+ major_info = self.modules.major_info

+ if major_info:

+ major = convert(major_info, DynamicMajor)

+ return major.title

+ return None

+

+ @property

+ def text(self) -> str | None:

+ """Text content."""

+ major_info = self.modules.major_info

+ if major_info:

+ major = convert(major_info, DynamicMajor)

+ return major.text

+ return None

+

+ @property

+ def image_urls(self) -> list[str]:

+ """List of image URLs."""

+ major_info = self.modules.major_info

+ if major_info:

+ major = convert(major_info, DynamicMajor)

+ return major.image_urls

+ return []

+

+ @property

+ def cover_url(self) -> str | None:

+ """Cover URL."""

+ major_info = self.modules.major_info

+ if major_info:

+ major = convert(major_info, DynamicMajor)

+ return major.cover_url

+ return None

+

+

+class DynamicItem(Struct):

+ """Dynamic item"""

+

+ item: DynamicInfo

diff --git a/core/parsers/bilibili/favlist.py b/core/parsers/bilibili/favlist.py

new file mode 100644

index 0000000..823deca

--- /dev/null

+++ b/core/parsers/bilibili/favlist.py

@@ -0,0 +1,66 @@

+from msgspec import Struct

+

+from .common import Upper

+

+

+class FavItem(Struct):

+ title: str

+ cover: str

+ intro: str

+ link: str

+

+ @property

+ def url(self) -> str:

+ """Full web URL."""

+ return self.link.replace("bilibili://video/", "https://bilibili.com/video/av")

+

+ @property

+ def desc(self) -> str:

+ """Description."""

+ return f"标题: {self.title}\n简介: {self.intro}\n链接: {self.url}"

+

+ @property

+ def avid(self) -> int:

+ """avid"""

+ return int(self.link.split("/")[-1])

+

+

+class FavInfo(Struct):

+ # id: int

+ # fid: int

+ # mid: int

+ title: str

+ """Title"""

+ cover: str

+ """Cover"""

+ upper: Upper

+ """Uploader (UP) info"""

+ ctime: int

+ """Creation timestamp"""

+ mtime: int

+ """Modification timestamp"""

+ media_count: int

+ """Number of media items"""

+ intro: str

+ """Introduction"""

+

+

+class FavData(Struct):

+ info: FavInfo

+ medias: list[FavItem]

+

+ @property

+ def title(self) -> str:

+ return f"收藏夹 - {self.info.title}"

+

+ @property

+ def cover(self) -> str:

+ return self.info.cover

+

+ @property

+ def desc(self) -> str:

+ return f"简介: {self.info.intro}"

+

+ @property

+ def timestamp(self) -> int:

+ return self.info.ctime
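`FavItem` derives both the web URL and the av id from the app-scheme `link` field. A tiny sketch of those two conversions as free functions; the function names and the sample link value are made up for illustration:

```python
APP_PREFIX = "bilibili://video/"
WEB_PREFIX = "https://bilibili.com/video/av"


def to_web_url(link: str) -> str:
    """Rewrite an app-scheme link into a web URL with an av id."""
    return link.replace(APP_PREFIX, WEB_PREFIX)


def to_avid(link: str) -> int:
    """Take the numeric id after the last slash of the link."""
    return int(link.split("/")[-1])


sample = "bilibili://video/170001"  # hypothetical favorites entry
print(to_web_url(sample), to_avid(sample))
```

The resulting URL has the `/av<digits>` form that the `/av` handler in `BilibiliParser` is written to match.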

diff --git a/core/parsers/bilibili/live.py b/core/parsers/bilibili/live.py

new file mode 100644

index 0000000..25bbd81

--- /dev/null

+++ b/core/parsers/bilibili/live.py

@@ -0,0 +1,72 @@

+from msgspec import Struct

+

+

+class RoomInfo(Struct):

+ title: str

+ """Title"""

+ cover: str

+ """Cover"""

+ keyframe: str

+ """Keyframe"""

+ tags: str

+ """Tags"""

+ area_name: str

+ """Area name"""

+ parent_area_name: str

+ """Parent area name"""

+

+

+class BaseInfo(Struct):

+ uname: str

+ """User name"""

+ face: str

+ """Avatar URL"""

+ gender: str

+ """Gender"""

+

+

+class LiveInfo(Struct):

+ level: int

+ """Level"""

+ level_color: int

+ """Level color"""

+ score: int

+ """Score"""

+

+

+class AnchorInfo(Struct):

+ base_info: BaseInfo

+ """Basic info"""

+ live_info: LiveInfo

+ """Live info"""

+

+

+class RoomData(Struct):

+ room_info: RoomInfo

+ """Room info"""

+ anchor_info: AnchorInfo

+ """Anchor (streamer) info"""

+

+ @property

+ def title(self) -> str:

+ return f"直播 - {self.room_info.title}"

+

+ @property

+ def cover(self) -> str:

+ return self.room_info.cover

+

+ @property

+ def detail(self) -> str:

+ return f"分区: {self.room_info.area_name} | {self.room_info.parent_area_name}\n标签: {self.room_info.tags}"

+

+ @property

+ def keyframe(self) -> str:

+ return self.room_info.keyframe

+

+ @property

+ def name(self) -> str:

+ return self.anchor_info.base_info.uname

+

+ @property

+ def avatar(self) -> str:

+ return self.anchor_info.base_info.face

diff --git a/core/parsers/bilibili/opus.py b/core/parsers/bilibili/opus.py

new file mode 100644

index 0000000..6ffc695

--- /dev/null

+++ b/core/parsers/bilibili/opus.py

@@ -0,0 +1,153 @@

+from collections.abc import Generator

+from typing import Any

+

+from msgspec import Struct

+

+

+class TextNode(Struct, tag="TextNode"):

+ """Opus text node"""

+

+ text: str

+ """Text content"""

+

+

+class ImageNode(Struct, tag="ImageNode"):

+ """Opus image node"""

+

+ url: str

+ """Image URL"""

+ alt: str | None = None

+ """Image description"""

+

+

+class Author(Struct):

+ """Opus author info"""

+

+ name: str

+ face: str

+ mid: int

+ pub_time: str

+ pub_ts: int

+

+

+class Image(Struct):

+ """Opus image info"""

+

+ url: str

+ # width: int

+ # height: int

+ # size: float

+

+

+class Pic(Struct):

+ """Opus image group"""

+

+ pics: list[Image]

+ style: int

+

+

+class Text(Struct):

+ """Opus text"""

+

+ nodes: list[dict[str, Any]]

+

+

+class Paragraph(Struct):

+ """Opus paragraph"""

+

+ para_type: int

+ text: Text | None = None

+ pic: Pic | None = None

+ # align: int = 0

+ # format: dict[str, Any] | None = None

+

+

+class Content(Struct):

+ """Opus content"""

+

+ paragraphs: list[Paragraph]

+

+

+class Stat(Struct):

+ """Opus statistics"""

+

+ like: dict[str, Any] | None = None

+ comment: dict[str, Any] | None = None

+ forward: dict[str, Any] | None = None

+ favorite: dict[str, Any] | None = None

+ coin: dict[str, Any] | None = None

+

+

+class Module(Struct):

+ """Opus module"""

+

+ module_type: str

+ module_author: Author | None = None

+ module_content: Content | None = None

+ # module_stat: OpusStat | None = None

+

+

+class Basic(Struct):

+ """Opus basic info"""

+

+ title: str

+

+

+class Info(Struct):

+ """Opus info"""

+

+ id_str: str

+ type: int

+ modules: list[Module]

+ basic: Basic | None = None

+

+

+class OpusItem(Struct):

+ """Opus item"""

+

+ item: Info

+

+ @property

+ def title(self) -> str | None:

+ return self.item.basic.title if self.item.basic else None

+

+ @property

+ def name_avatar(self) -> tuple[str, str]:

+ author_module = next(module.module_author for module in self.item.modules if module.module_author)

+ return author_module.name, author_module.face

+

+ @property

+ def timestamp(self) -> int | None:

+ """Publication timestamp."""

+ for module in self.item.modules:

+ if module.module_type == "MODULE_TYPE_AUTHOR" and module.module_author:

+ return module.module_author.pub_ts

+ return None

+

+ def gen_text_img(self) -> Generator[TextNode | ImageNode, None, None]:

+ """Yield text and image nodes in document order."""

+ for module in self.item.modules:

+ if module.module_type == "MODULE_TYPE_CONTENT" and module.module_content:

+ for paragraph in module.module_content.paragraphs:

+ # Text paragraph

+ if paragraph.text and paragraph.text.nodes:

+ text_content = self._extract_text_from_nodes(paragraph.text.nodes)

+ text_content = text_content.strip()

+ if text_content:

+ yield TextNode(text="\n\n" + text_content)

+

+ # Image paragraph

+ if paragraph.pic and paragraph.pic.pics:

+ for pic in paragraph.pic.pics:

+ yield ImageNode(url=pic.url)

+

+ def _extract_text_from_nodes(self, nodes: list[dict[str, Any]]) -> str:

+ """Extract the text from a list of nodes."""

+ text_content = ""

+ for node in nodes:

+ if node.get("type") in [

+ "TEXT_NODE_TYPE_WORD",

+ "TEXT_NODE_TYPE_RICH",

+ ] and node.get("word"):

+ text_content += node["word"].get("words", "")

+ return text_content
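The node filter in `_extract_text_from_nodes` can be tried on its own. The sketch below mirrors that logic as a free function; the sample payload is invented for illustration and only follows the `type` / `word.words` shape that the method above reads, so real API responses may carry more fields.

```python
from typing import Any

# Node types whose text is kept, as listed in the method above.
TEXT_NODE_TYPES = ("TEXT_NODE_TYPE_WORD", "TEXT_NODE_TYPE_RICH")


def extract_text(nodes: list[dict[str, Any]]) -> str:
    """Concatenate the words of every recognized text node, in order."""
    parts: list[str] = []
    for node in nodes:
        # Skip unknown node types and nodes without a "word" payload.
        if node.get("type") in TEXT_NODE_TYPES and node.get("word"):
            parts.append(node["word"].get("words", ""))
    return "".join(parts)


sample_nodes = [
    {"type": "TEXT_NODE_TYPE_WORD", "word": {"words": "Hello, "}},
    {"type": "TEXT_NODE_TYPE_RICH", "word": {"words": "world"}},
    {"type": "SOME_OTHER_TYPE", "word": {"words": "!"}},  # ignored: unknown type
    {"type": "TEXT_NODE_TYPE_WORD"},  # ignored: no "word" payload
]
print(extract_text(sample_nodes))
```

Collecting the pieces in a list and joining once avoids repeated string concatenation, though for typical post lengths the difference is negligible.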

diff --git a/core/parsers/bilibili/video.py b/core/parsers/bilibili/video.py

new file mode 100644

index 0000000..3740a72

--- /dev/null

+++ b/core/parsers/bilibili/video.py

@@ -0,0 +1,140 @@

+from dataclasses import dataclass

+

+from msgspec import Struct

+

+from .common import Upper

+

+

+class Stats(Struct):

+ view: int

+ """View count"""

+ danmaku: int

+ """Danmaku (bullet comment) count"""

+ reply: int

+ """Reply count"""

+ favorite: int

+ """Favorite count"""

+ coin: int

+ """Coin count"""

+ share: int

+ """Share count"""

+ like: int

+ """Like count"""

+

+

+class Page(Struct):

+ part: str

+ """Part title"""

+ ctime: int

+ """Creation timestamp"""

+ duration: int

+ """Duration"""

+ first_frame: str | None = None

+ """First frame, used as the cover image"""

+

+

+@dataclass(frozen=True, slots=True)

+class PageInfo:

+ index: int

+ title: str

+ duration: int

+ timestamp: int

+ cover: str | None = None

+

+

+class VideoInfo(Struct):

+ bvid: str

+ """BV id"""

+ title: str

+ """Title"""

+ desc: str

+ """Description"""

+ duration: int

+ """Duration"""

+ owner: Upper

+ """Author info"""

+ stat: Stats

+ """Statistics"""

+ pubdate: int

+ """Publication timestamp"""

+ ctime: int

+ """Creation timestamp"""

+ pic: str | None = None

+ """Cover image"""

+ pages: list[Page] | None = None

+ """Part (page) info"""

+

+ @property

+ def title_with_part(self) -> str:

+ if self.pages and len(self.pages) > 1:

+ return f"{self.title} - {self.pages[0].part}"

+ return self.title

+

+ @property

+ def formatted_stats_info(self) -> str:

+ """

+ Format the video statistics.

+ """

+ # Values to display, each paired with its label

+ stats_mapping = [

+ ("👍", self.stat.like),

+ ("🪙", self.stat.coin),

+ ("⭐", self.stat.favorite),

+ ("↩️", self.stat.share),

+ ("💬", self.stat.reply),

+ ("👀", self.stat.view),

+ ("💭", self.stat.danmaku),

+ ]

+

+ # Build the result string

+ result_parts = []

+ for display_name, value in stats_mapping:

+ # Values above 10000 are shown in units of 万 (ten thousand)

+ formatted_value = f"{value / 10000:.1f}万" if value > 10000 else str(value)

+ result_parts.append(f"{display_name} {formatted_value}")

+

+ return " ".join(result_parts)

+

+ def extract_info_with_page(self, page_num: int = 1) -> PageInfo:

+ """Get the video info for a given page: page index, title, duration, timestamp and cover.

+ Args:

+ page_num (int): page number, 1-based. Defaults to 1.

+

+ Returns:

+ PageInfo: page index, title, duration, timestamp and cover

+ """

+ page_idx = page_num - 1

+ title = self.title

+ duration = self.duration

+ cover = self.pic

+ timestamp = self.pubdate

+

+ if self.pages and len(self.pages) > 1:

+ page_idx = page_idx % len(self.pages)

+ page = self.pages[page_idx]

+ title += f" | 分集 - {page.part}"

+ duration = page.duration

+ cover = page.first_frame

+ timestamp = page.ctime

+

+ return PageInfo(

+ index=page_idx,

+ title=title,

+ duration=duration,

+ timestamp=timestamp,

+ cover=cover,

+ )

+

+

+class ModelResult(Struct):

+ summary: str

+

+

+class AIConclusion(Struct):

+ model_result: ModelResult | None = None

+

+ @property

+ def summary(self) -> str: