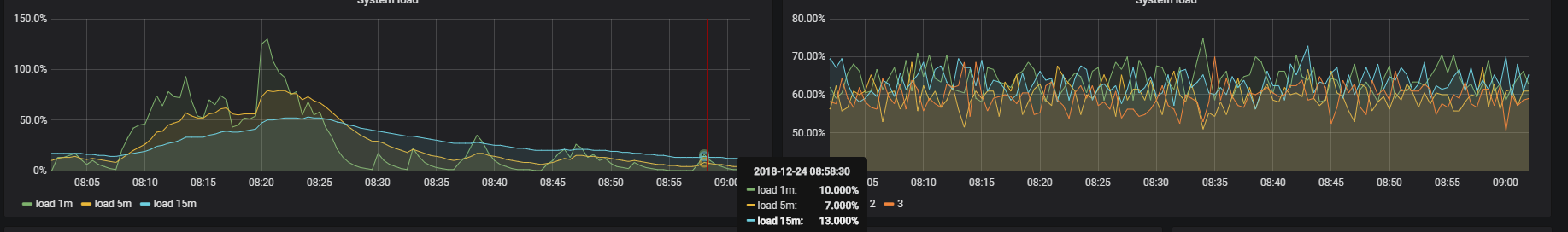

Grafana was timing out when reading data from Prometheus. Investigation showed that Prometheus had run out of disk space, so its pod crashed and kept restarting.

[root@a-docker-cluster01 ~]# kubectl -n monitoring get po -o wide

NAME READY STATUS RESTARTS AGE IP NODE

......

prometheus-wrinkled-gibbon-prometheus-prometheus-0 2/3 CrashLoopBackOff 108 132d 10.233.71.69 a-docker-cluster02

[root@a-docker-cluster01 ~]# kubectl -n monitoring logs prometheus-wrinkled-gibbon-prometheus-prometheus-0 prometheus

......

level=info ts=2018-12-23T13:28:30.866076641Z caller=main.go:608 msg="Notifier manager stopped"

level=error ts=2018-12-23T13:28:30.866158699Z caller=main.go:617 err="opening storage failed: zero-pad torn page: write /prometheus/wal/00000138: no space left on device"

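It turned out that only 5G of storage had been requested. That was fine until this week, when we added several new monitoring metrics and the volume of scraped data filled the disk.

We can only blame ourselves for not doing capacity planning up front; debts always have to be repaid eventually. Can the volume be expanded dynamically? Let's look at the Ceph RBD StorageClass we defined earlier.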

[root@a-docker-cluster01 ~]# kubectl get storageclass rbd -o yaml

    apiVersion: storage.k8s.io/v1
    kind: StorageClass
    metadata:
      name: rbd
    parameters:
      adminId: admin
      adminSecretName: ceph-admin-secret
      adminSecretNamespace: kube-system
      imageFeatures: layering
      imageFormat: "2"
      monitors: 170.0.0.1:6789
      pool: kube
      userId: admin
      userSecretName: ceph-admin-secret
    provisioner: ceph.com/rbd
    reclaimPolicy: Delete

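Unfortunately, for various reasons dynamic expansion was not configured at the time. So what can we do? There is still a way: expand the RBD image by hand, in three steps:

1. Find the RBD image

2. Expand the RBD image

3. Update the PV definition

First, let's find the RBD image.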

- List the PVCs

[root@a-docker-cluster01 ~]# kubectl -n monitoring get pvc

NAME STATUS VOLUME CAPACITY ACCESS MODES STORAGECLASS AGE

alertmanager-wrinkled-gibbon-prometheus-alertmanager-db-alertmanager-wrinkled-gibbon-prometheus-alertmanager-0 Bound pvc-18aaa76f-04f3-11e9-8438-00163e086b4d 5Gi RWO rbd 132d

prometheus-wrinkled-gibbon-prometheus-prometheus-db-prometheus-wrinkled-gibbon-prometheus-prometheus-0 Bound pvc-1cabd371-04f3-11e9-8438-00163e086b4d 5Gi RWO rbd 132d

- Get the PVC details

[root@a-docker-cluster01 ~]# kubectl -n monitoring get pvc prometheus-wrinkled-gibbon-prometheus-prometheus-db-prometheus-wrinkled-gibbon-prometheus-prometheus-0 -o yaml

    apiVersion: v1
    kind: PersistentVolumeClaim
    metadata:
      annotations:
        volume.beta.kubernetes.io/storage-provisioner: ceph.com/rbd
      finalizers:
      - kubernetes.io/pvc-protection
      labels:
        app: prometheus
        prometheus: wrinkled-gibbon-prometheus-prometheus
      name: prometheus-wrinkled-gibbon-prometheus-prometheus-db-prometheus-wrinkled-gibbon-prometheus-prometheus-0
      namespace: monitoring
    spec:
      accessModes:
      - ReadWriteOnce
      resources:
        requests:
          storage: 5Gi
      storageClassName: rbd
      volumeName: pvc-1cabd371-04f3-11e9-8438-00163e086b4d
    status:
      accessModes:
      - ReadWriteOnce
      capacity:
        storage: 5Gi
      phase: Bound

- Get the volume (PV) information

[root@a-docker-cluster01 ~]# kubectl get pv pvc-1cabd371-04f3-11e9-8438-00163e086b4d -o yaml

apiVersion: v1

kind: PersistentVolume

.....

image: kubernetes-dynamic-pvc-59e33f2a-04f3-11e9-8122-86bd83a719ac

The value of `image:` (kubernetes-dynamic-pvc-xxxx) is the name of the RBD image.
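The PVC to PV to RBD image lookup can also be scripted. The following is a minimal sketch, not the procedure used in this article: the function name `rbd_image_for_pvc` is made up for illustration, and it assumes the PV uses the in-tree RBD volume source, so the pool and image sit under `spec.rbd`.

```shell
# Resolve the "pool/image" behind a PVC (illustrative helper, not from the article).
# Usage: rbd_image_for_pvc <namespace> <pvc-name>
rbd_image_for_pvc() {
  local ns="$1" pvc="$2" pv
  # spec.volumeName on the PVC is the name of the bound PV.
  pv="$(kubectl -n "$ns" get pvc "$pvc" -o jsonpath='{.spec.volumeName}')" || return 1
  [ -n "$pv" ] || { echo "PVC $pvc is not bound to a PV" >&2; return 1; }
  # Assumption: an RBD-backed PV, so spec.rbd holds the pool and image name.
  kubectl get pv "$pv" -o jsonpath='{.spec.rbd.pool}/{.spec.rbd.image}'
}
```

Called with the `monitoring` namespace and the Prometheus PVC name, it should print a value of the form `kube/kubernetes-dynamic-pvc-...`, which is the argument `rbd resize` expects.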

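Next, expand the RBD image.

- From the Ansible control machine, run a remote shell command to check the device mounts on the host where the pod is running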

[root@a-docker-cluster01 ~]# ssh a-docker-cluster02 'df -h | grep 59e33f2a-04f3-11e9-8122-86bd83a719ac'

/dev/rbd0 4.8G 4.6G 0 100% /var/lib/kubelet/plugins/kubernetes.io/rbd/mounts/kube-image-kubernetes-dynamic-pvc-59e33f2a-04f3-11e9-8122-86bd83a719ac

Sure enough, the volume is full.

- Expand the RBD image, which is the storage behind the PVC

[root@a-docker-cluster01 ~]# rbd resize --size 20480 kube/kubernetes-dynamic-pvc-59e33f2a-04f3-11e9-8122-86bd83a719ac

Resizing image: 100% complete...done.

- Grow the filesystem on the mounted device

[root@a-docker-cluster01 ~]# ssh a-docker-cluster02 'resize2fs /dev/rbd0'

resize2fs 1.42.9 (28-Dec-2013)

Filesystem at /dev/rbd0 is mounted on /var/lib/kubelet/plugins/kubernetes.io/rbd/mounts/kube-image-kubernetes-dynamic-pvc-59e33f2a-04f3-11e9-8122-86bd83a719ac; on-line resizing required

old_desc_blocks = 1, new_desc_blocks = 2

The filesystem on /dev/rbd0 is now 5242880 blocks long.

- Verify the capacity again

[root@a-docker-cluster01 ~]# ssh a-docker-cluster02 'df -h | grep 59e33f2a-04f3-11e9-8122-86bd83a719ac'

/dev/rbd0 20G 5.3G 14.7G 27% /var/lib/kubelet/plugins/kubernetes.io/rbd/mounts/kube-image-kubernetes-dynamic-pvc-59e33f2a-04f3-11e9-8122-86bd83a719ac

The expansion succeeded: the capacity is now 20G.
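The numbers in this step can be checked with a little arithmetic. `rbd resize --size` takes megabytes by default, so 20 GiB is 20 × 1024 = 20480. The 5242880 blocks reported by resize2fs also come to 20 GiB if the filesystem uses 4 KiB blocks. That block size is an assumption, since the output above does not state it. The helper names below are invented for illustration, and `plan_rbd_expand` only prints the commands rather than running them.

```shell
# Convert GiB to the megabyte count that `rbd resize --size` expects by default.
gib_to_mib() {
  echo $(( $1 * 1024 ))
}

# Convert a filesystem block count to GiB for a given block size in bytes.
blocks_to_gib() {
  echo $(( $1 * $2 / 1073741824 ))
}

# Print (do not execute) the two commands used in this step.
# Usage: plan_rbd_expand <pool/image> <size-in-GiB> <node> <device>
plan_rbd_expand() {
  local image="$1" gib="$2" node="$3" dev="$4"
  echo "rbd resize --size $(gib_to_mib "$gib") $image"
  echo "ssh $node 'resize2fs $dev'"
}
```

For example, `gib_to_mib 20` gives 20480, and `blocks_to_gib 5242880 4096` gives 20.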

Finally, update the definition.

Kubernetes is not aware of the change to the RBD image, so the displayed size has to be corrected by hand: set the PV's capacity to 20Gi.

[root@a-docker-cluster01 prometheus]# kubectl get pv -n monitoring

NAME CAPACITY ACCESS MODES RECLAIM POLICY STATUS CLAIM STORAGECLASS REASON AGE

pvc-18aaa76f-04f3-11e9-8438-00163e086b4d 5Gi RWO Delete Bound monitoring/alertmanager-wrinkled-gibbon-prometheus-alertmanager-db-alertmanager-wrinkled-gibbon-prometheus-alertmanager-0 rbd 132d

pvc-1cabd371-04f3-11e9-8438-00163e086b4d 5Gi RWO Delete Bound monitoring/prometheus-wrinkled-gibbon-prometheus-prometheus-db-prometheus-wrinkled-gibbon-prometheus-prometheus-0 rbd 132d

[root@a-docker-cluster01 prometheus]# kubectl -n monitoring edit pv pvc-1cabd371-04f3-11e9-8438-00163e086b4d

persistentvolume "pvc-1cabd371-04f3-11e9-8438-00163e086b4d" edited

[root@a-docker-cluster01 prometheus]# kubectl get pv -n monitoring

NAME CAPACITY ACCESS MODES RECLAIM POLICY STATUS CLAIM STORAGECLASS REASON AGE

pvc-18aaa76f-04f3-11e9-8438-00163e086b4d 5Gi RWO Delete Bound monitoring/alertmanager-wrinkled-gibbon-prometheus-alertmanager-db-alertmanager-wrinkled-gibbon-prometheus-alertmanager-0 rbd 132d

pvc-1cabd371-04f3-11e9-8438-00163e086b4d 20Gi RWO Delete Bound monitoring/prometheus-wrinkled-gibbon-prometheus-prometheus-db-prometheus-wrinkled-gibbon-prometheus-prometheus-0 rbd 132d
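The interactive `kubectl edit` above changes `spec.capacity.storage` on the PV. A non-interactive alternative is `kubectl patch`. This is a sketch of that alternative rather than what was actually run here, and the two function names are made up for illustration.

```shell
# Build the patch document that sets a PV's capacity, e.g. 20Gi.
pv_capacity_patch() {
  printf '{"spec":{"capacity":{"storage":"%s"}}}' "$1"
}

# Apply the patch to a PV without opening an editor.
# Usage: set_pv_capacity <pv-name> <size>
set_pv_capacity() {
  local pv="$1" size="$2"
  kubectl patch pv "$pv" -p "$(pv_capacity_patch "$size")"
}

# Example (not executed here):
# set_pv_capacity pvc-1cabd371-04f3-11e9-8438-00163e086b4d 20Gi
```

As with the manual edit, this only changes what Kubernetes reports. The real capacity comes from the RBD image and the filesystem resized in the previous step.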

We also lowered Prometheus's retention from the default 15d to 3d and upgraded the Helm release.

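Notes:

1. In v1.9.2 a PVC's claim cannot be changed dynamically; only the PV definition can be edited.

2. In Kubernetes v1.11 and later, PVC expansion is already supported and the feature is enabled by default, although the StorageClass still has to set `allowVolumeExpansion: true`.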
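On a cluster at v1.11 or later, the manual procedure could in principle be replaced by two patches: allow expansion on the StorageClass, then raise the PVC's request. The sketch below was not tested against this cluster. The function names are illustrative, and whether expansion works for volumes created by the external `ceph.com/rbd` provisioner is an assumption that needs checking.

```shell
# Allow expansion for PVCs of a StorageClass (Kubernetes v1.11+).
# Usage: enable_expansion <storageclass>
enable_expansion() {
  kubectl patch storageclass "$1" -p '{"allowVolumeExpansion": true}'
}

# Raise the storage request on a PVC; Kubernetes then drives the resize.
# Usage: request_pvc_size <namespace> <pvc-name> <size>
request_pvc_size() {
  kubectl -n "$1" patch pvc "$2" \
    -p "{\"spec\":{\"resources\":{\"requests\":{\"storage\":\"$3\"}}}}"
}

# Example (not executed here):
# enable_expansion rbd
# request_pvc_size monitoring <pvc-name> 20Gi
```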
