
System information

- OS Platform and Distribution: macOS Catalina 10.15.7
- Tensorflow version: 1.14.0
- Python version: 3.7
- No CUDA since I'm using a MacBook

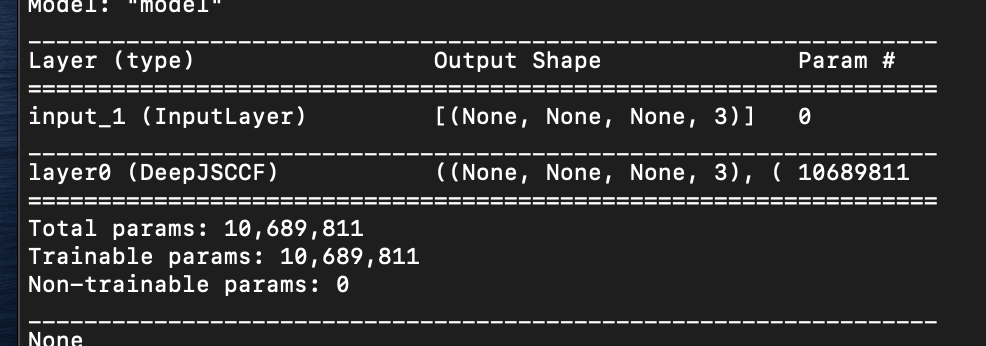

Hi, I am a college student trying to run and study your jscc-f code.

I tried to run jscc.py with python jscc.py --conv_depth=16 --n_layers, but the following error occurred in model.fit():

ValueError: Variable <tf.Variable 'layer0/encoder/layer_0/kernel_rdft:0' shape=(81, 768) dtype=float32> has None for gradient. Please make sure that all of your ops have a gradient defined (i.e. are differentiable). Common ops without gradient: K.argmax, K.round, K.eval.
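To narrow this down, here is a minimal sketch of how one might list every variable that ends up with no gradient, instead of stopping at the first one Keras reports. The helper name `names_without_gradient` and the variable name `model` are my own assumptions for illustration and are not taken from jscc.py. The commented usage relies on `tf.gradients`, which returns `None` for any variable that is not connected to the loss.

```python
def names_without_gradient(grads, variables):
    """Return the names of variables whose gradient entry is None.

    grads and variables are parallel sequences, as produced by
    tf.gradients(loss, variables). Hypothetical helper, for diagnosis only.
    """
    missing = []
    for grad, var in zip(grads, variables):
        if grad is None:
            # Fall back to str(var) if the object has no .name attribute.
            missing.append(getattr(var, "name", str(var)))
    return missing


# Assumed usage with TensorFlow 1.x, after model.compile(...):
#   import tensorflow as tf
#   grads = tf.gradients(model.total_loss, model.trainable_weights)
#   print(names_without_gradient(grads, model.trainable_weights))
```

If only kernel_rdft shows up in that list, the problem is probably specific to the op that consumes it; if many variables show up, the loss is likely disconnected from the network more broadly.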

FULL LOG

WARNING:tensorflow:From /Users/parkbumsu/opt/anaconda3/lib/python3.7/site-packages/tensorflow/python/ops/math_grad.py:1250: add_dispatch_support.<locals>.wrapper (from tensorflow.python.ops.array_ops) is deprecated and will be removed in a future version.

Instructions for updating:

Use tf.where in 2.0, which has the same broadcast rule as np.where

Traceback (most recent call last):

File "jscc.py", line 925, in <module>

main(args)

File "jscc.py", line 690, in main

DATASETS[args.dataset_train]._NUM_IMAGES["validation"]

File "/Users/parkbumsu/opt/anaconda3/lib/python3.7/site-packages/tensorflow/python/keras/engine/training.py", line 694, in fit

initial_epoch=initial_epoch)

File "/Users/parkbumsu/opt/anaconda3/lib/python3.7/site-packages/tensorflow/python/keras/engine/training.py", line 1433, in fit_generator

steps_name='steps_per_epoch')

File "/Users/parkbumsu/opt/anaconda3/lib/python3.7/site-packages/tensorflow/python/keras/engine/training_generator.py", line 264, in model_iteration

batch_outs = batch_function(*batch_data)

File "/Users/parkbumsu/opt/anaconda3/lib/python3.7/site-packages/tensorflow/python/keras/engine/training.py", line 1174, in train_on_batch

self._make_train_function()

File "/Users/parkbumsu/opt/anaconda3/lib/python3.7/site-packages/tensorflow/python/keras/engine/training.py", line 2219, in _make_train_function

params=self._collected_trainable_weights, loss=self.total_loss)

File "/Users/parkbumsu/opt/anaconda3/lib/python3.7/site-packages/tensorflow/python/keras/optimizer_v2/optimizer_v2.py", line 491, in get_updates

grads = self.get_gradients(loss, params)

File "/Users/parkbumsu/opt/anaconda3/lib/python3.7/site-packages/tensorflow/python/keras/optimizer_v2/optimizer_v2.py", line 398, in get_gradients

"K.argmax, K.round, K.eval.".format(param))

ValueError: Variable <tf.Variable 'layer0/encoder/layer_0/kernel_rdft:0' shape=(81, 768) dtype=float32> has None for gradient. Please make sure that all of your ops have a gradient defined (i.e. are differentiable). Common ops without gradient: K.argmax, K.round, K.eval.

Also, the autotune option in the dataset options kept raising errors, so I deleted it. Could that be the cause of this error? Thank you.