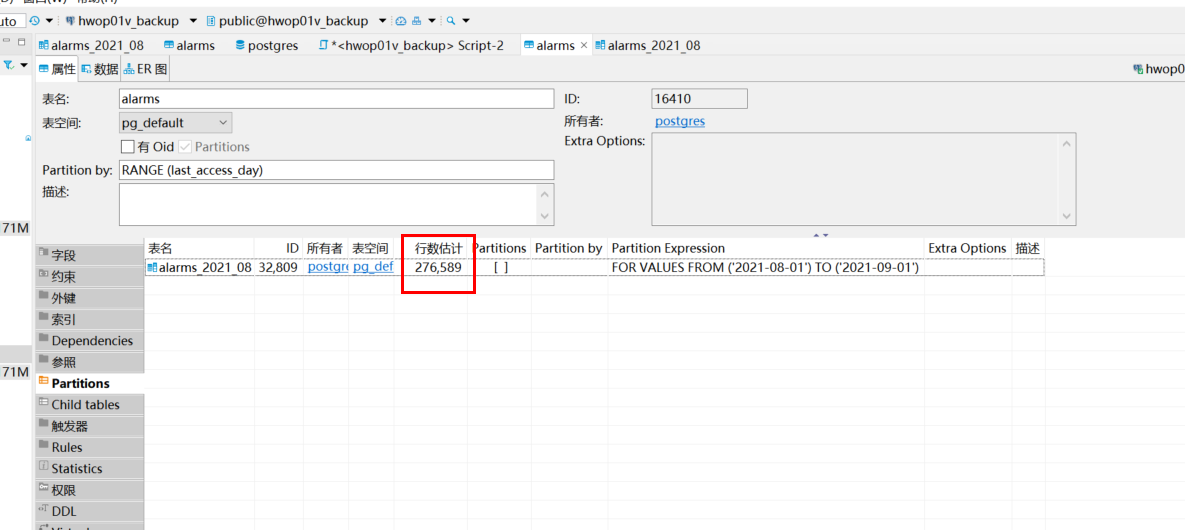

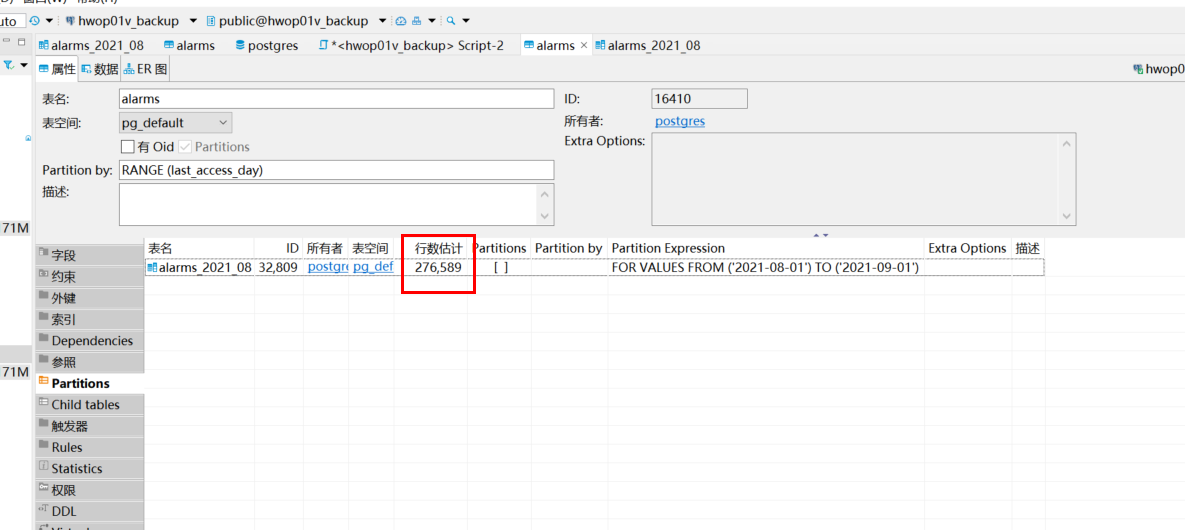

pg全量同步测试时,发现当数据大小超过一定大小。目前在20w+时,

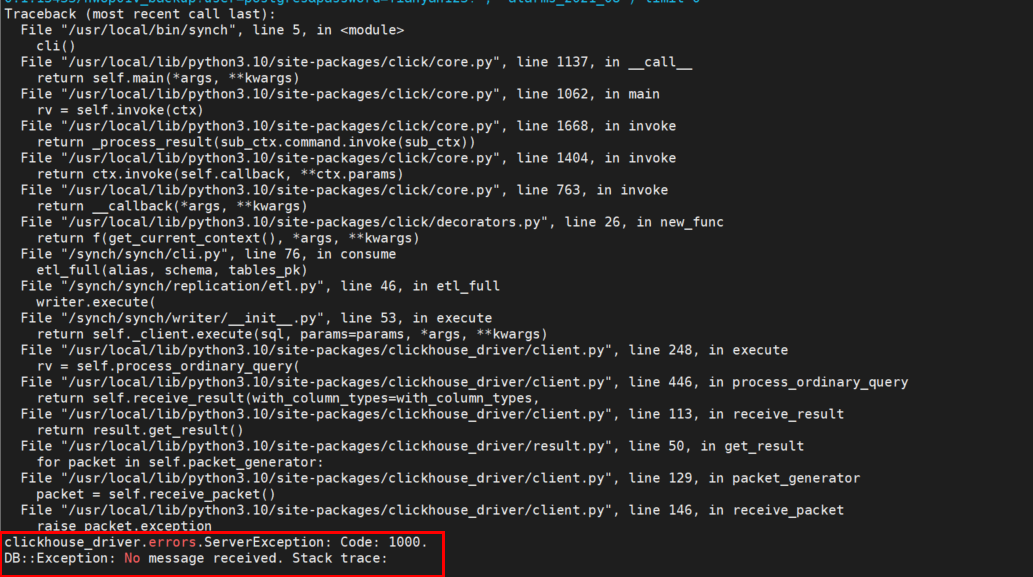

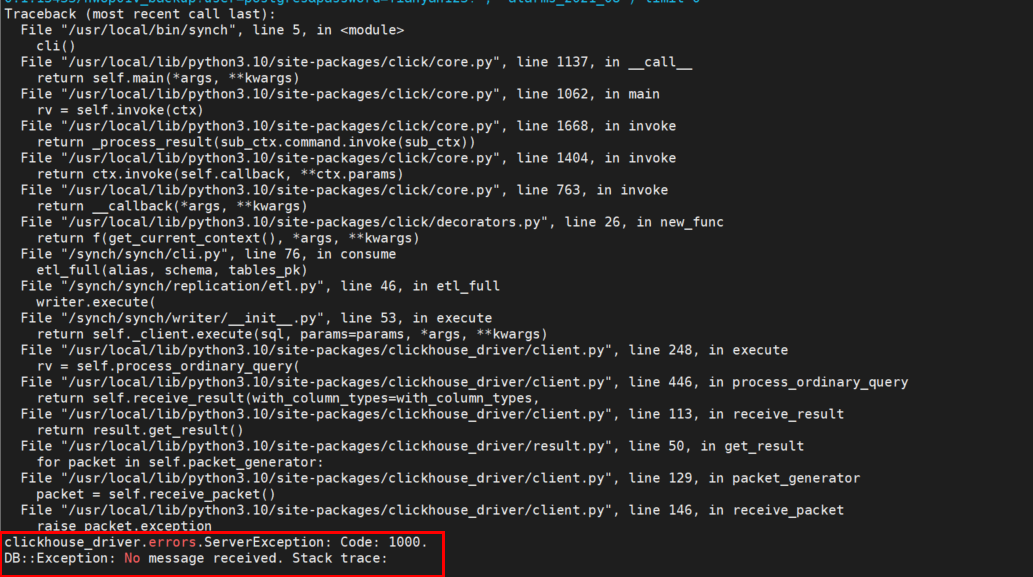

生产者会有如下报错

The configuration file is as follows:

    core:
      debug: False # when set True, will display sql information.
      insert_num: 2000 # how many num to submit,recommend set 20000 when production
      insert_interval: 3 # how many seconds to submit,recommend set 60 when production
      monitoring: false

    redis:
      host: redis
      port: 16379
      db: 0
      password:
      prefix: synch
      sentinel: false # enable redis sentinel
      sentinel_hosts: # redis sentinel hosts
        - 127.0.0.1:5000
      sentinel_master: master
      queue_max_len: 200000 # stream max len, will delete redundant ones with FIFO

    source_dbs:
      - db_type: postgres
        alias: postgres_db
        broker_type: kafka # current support redis and kafka
        host: 127.0.0.1
        port: 15433
        user: postgres
        password: Tianyan123!
        databases:
          - database: hwop01v_backup
            auto_create: true
            tables:
              - table: alarms_2021_08
                auto_full_etl: true
                clickhouse_engine: ReplacingMergeTree
                sign_column: sign
                version_column:
                partition_by:
                settings:

    clickhouse:
      # shard hosts when cluster, will insert by random
      hosts:
        - 127.0.0.1:19000
      user: default
      password: 'Tianyan123!'
      cluster_name: # enable cluster mode when not empty, and hosts must be more than one if enable.
      distributed_suffix: _all # distributed tables suffix, available in cluster

    kafka:
      servers:
        - 127.0.0.1:9092
      topic_prefix: synch

Could you please take a look? Thanks a lot!
