
Description

When we do participant-level model fitting, I recommend using a softmax choice rule whose sensitivity (inverse temperature) parameter changes over trials rather than staying fixed. This allows near-random choice at the beginning of the task (reflecting exploration) and increasingly impression-weighted choice toward the end (reflecting exploitation).
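To make the role of the parameter concrete, here is a minimal sketch of the softmax rule with a sensitivity parameter `theta`, assuming choice probabilities of the form P_j = exp(theta * E_j) / sum_k exp(theta * E_k), where E_j is the current impression (expectancy) of option j. The function name and the expectancy values are hypothetical, chosen only for illustration.

```python
import numpy as np

def softmax_choice_probs(expectancies, theta):
    """Choice probabilities P_j = exp(theta * E_j) / sum_k exp(theta * E_k).

    theta acts as a sensitivity (inverse temperature): theta = 0 gives
    uniformly random choice, and larger theta concentrates choice on the
    option with the highest expectancy.
    """
    z = theta * np.asarray(expectancies, dtype=float)
    z -= z.max()  # subtract the max for numerical stability; probabilities are unchanged
    w = np.exp(z)
    return w / w.sum()

expectancies = [0.2, 0.5, 1.0]  # hypothetical learned impressions for 3 options
for theta in (0.0, 1.0, 10.0):
    print(theta, softmax_choice_probs(expectancies, theta).round(3))
```

Letting `theta` grow with the trial number moves the model from the first regime (uniform, exploratory choice) toward the last (near-deterministic, exploitative choice).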

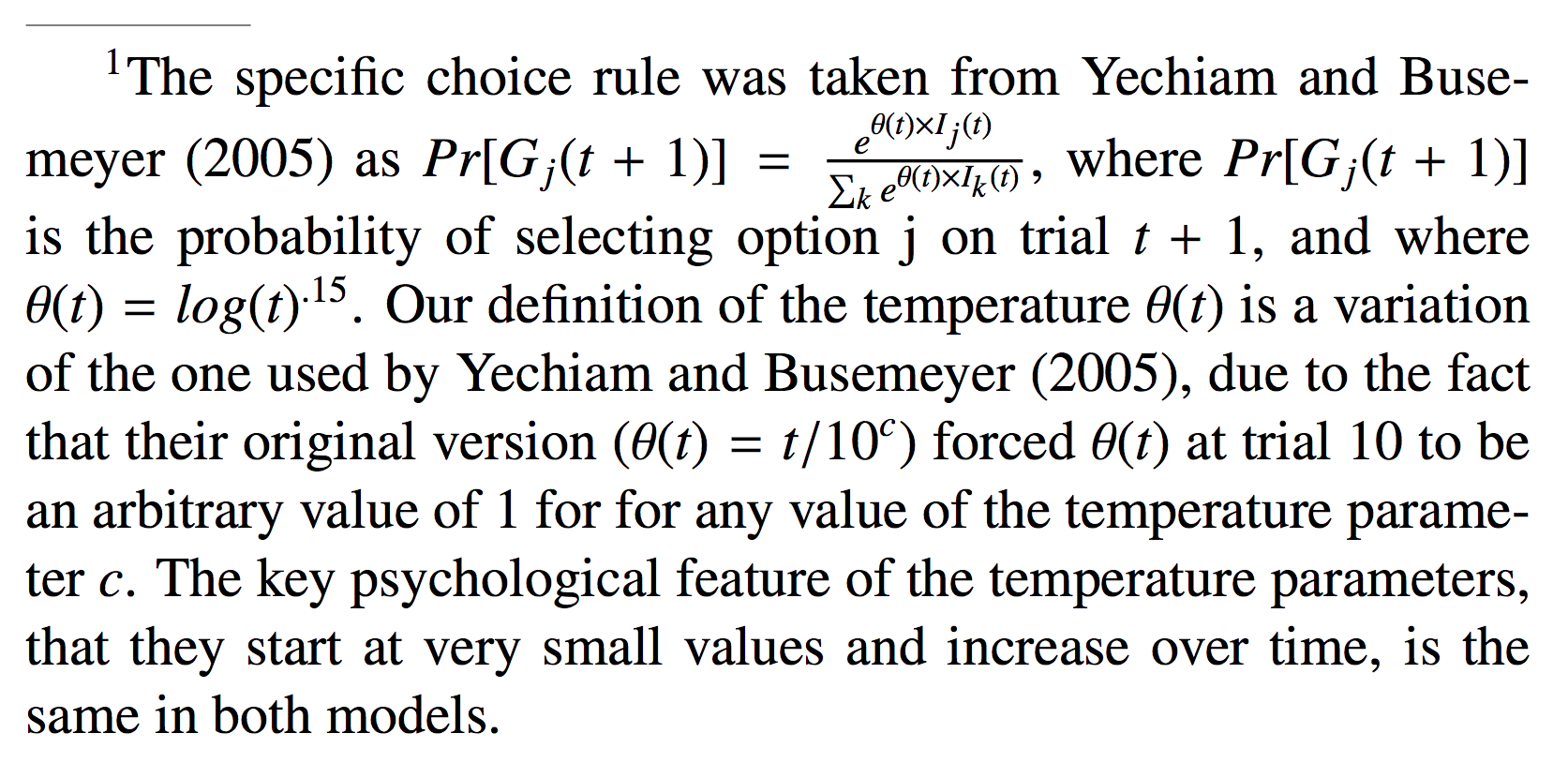

A common rule used by Yechiam and Busemeyer is to take the typical softmax but, instead of using a fixed sensitivity Theta, set Theta to be a function of trial. In Yechiam and Busemeyer (2005), they use Theta(t) = (t / 10) ^ c, where c is an individual-level choice sensitivity parameter. However, what I don't like about this rule (see attached image) is that it forces Theta(t = 10) = 1 for every value of c. Instead, I suggest the rule Theta(t) = log(t) ^ c.
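The two schedules can be compared numerically with a short sketch like the one below. It assumes the natural log for the proposed rule, trials numbered from t = 1, and c > 0 (with c ≤ 0 the log rule is degenerate at t = 1, since log(1) = 0). The function names and the expectancy values are hypothetical, and the softmax form is the standard one with Theta as a sensitivity multiplier.

```python
import numpy as np

def theta_power(t, c):
    """Yechiam & Busemeyer (2005) schedule: theta(t) = (t / 10) ** c."""
    return (np.asarray(t, dtype=float) / 10.0) ** c

def theta_log(t, c):
    """Proposed schedule: theta(t) = log(t) ** c, natural log assumed.

    Assumes trials are numbered from t = 1 and c > 0, so theta(1) = 0.
    """
    return np.log(np.asarray(t, dtype=float)) ** c

def softmax_choice_probs(expectancies, theta):
    """P_j = exp(theta * E_j) / sum_k exp(theta * E_k), computed stably."""
    z = theta * np.asarray(expectancies, dtype=float)
    w = np.exp(z - z.max())
    return w / w.sum()

trials = np.array([1, 2, 5, 10, 50, 100])
for c in (0.5, 1.0, 2.0):
    print(f"c={c}")
    print("  (t/10)^c :", theta_power(trials, c).round(3))
    print("  log(t)^c :", theta_log(trials, c).round(3))

# Choice probabilities for fixed, hypothetical expectancies under the log rule.
expectancies = [0.2, 0.5, 1.0]
for t in (1, 10, 100):
    theta = theta_log(t, c=1.0)
    print(t, softmax_choice_probs(expectancies, theta).round(3))
```

In the printed table, the (t/10)^c column equals 1 at t = 10 for every c, which is the pinning described above. Under the log rule Theta(1) = 0, so the first choice is uniformly random. Note that the log rule also has a point shared by all values of c: Theta(e) = 1, at t ≈ 2.72, which lies between the first and third trials.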