opensmith

The open-source, local-first alternative to LangSmith.

opensmith is to LangSmith what Ollama is to OpenAI — the local-first, privacy-first alternative.

| | LangSmith | opensmith |
|---|---|---|
| Setup | Cloud account required | `pip install opensmith` |
| Data privacy | Sends traces to cloud | 100% local, SQLite only |
| Framework | Best with LangChain | Works with any Python code |
| Cost | Free tier then paid | Free forever, open source |
| Offline | No | Yes |
| Docker required | No | No |
| Dashboard | Hosted | localhost:7823 |

LangSmith is powerful, but it is built around cloud-hosted tracing and is most natural inside the LangChain ecosystem. opensmith is a local-first alternative: install it with pip, use it with any Python LLM pipeline, and inspect traces on your machine without accounts, hosted services, Docker, or configuration. No trace data leaves your machine.

    pip install opensmith

Optional integrations:

    pip install "opensmith[otel]"
    pip install "opensmith[postgres]"
    pip install "opensmith[all]"

Use `opensmith[all]` to install both OpenTelemetry and Postgres support.
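After installing, it can help to confirm which version ended up in the active environment. The following is a minimal sketch using only the standard library; the helper name `installed_version` is hypothetical and is not part of opensmith.

```python
from importlib.metadata import PackageNotFoundError, version
from typing import Optional


def installed_version(distribution: str) -> Optional[str]:
    """Return the installed version of a distribution, or None if it is absent."""
    try:
        return version(distribution)
    except PackageNotFoundError:
        return None


if __name__ == "__main__":
    found = installed_version("opensmith")
    if found is None:
        print("opensmith is not installed; run: pip install opensmith")
    else:
        print(f"opensmith {found} is installed")
```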

    import openai
    from opensmith import trace

    @trace
    def call_llm(prompt: str):
        return openai.chat.completions.create(
            model="gpt-4o-mini",
            messages=[{"role": "user", "content": prompt}],
        )

    @trace
    def my_pipeline(question: str):
        # search_docs is your own retrieval function; here it returns a string
        docs = search_docs(question)
        return call_llm(docs + question)

Async functions are supported:

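    from openai import AsyncOpenAI
    from opensmith import trace

    # Awaitable calls need the async client; the module-level client is synchronous.
    client = AsyncOpenAI()

    @trace(tags=["production", "rag"])
    async def call_llm(prompt: str):
        return await client.chat.completions.create(
            model="gpt-4o-mini",
            messages=[{"role": "user", "content": prompt}],
        )

Warn when a trace exceeds a token budget: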

    from opensmith import trace

    @trace(token_budget=1000)
    def my_pipeline():
        return call_llm("summarize this document")

`trace` can also be used as a context manager:

    import openai
    from opensmith import trace

    query = "your question here"  # placeholder input

    with trace("my_pipeline", tags=["debug"]) as t:
        t.log("query", query)
        response = openai.chat.completions.create(
            model="gpt-4o-mini",
            messages=[{"role": "user", "content": query}],
        )
        t.log("response", response)

Call `autopatch()` to patch all supported backends:

    from opensmith import autopatch

    autopatch()

Patch only selected backends:

    from opensmith import autopatch

    autopatch(only=["openai"])

Patch everything except selected backends:

    from opensmith import autopatch

    autopatch(exclude=["chromadb"])

Print trace results to the terminal as they complete:

from opensmith import set_console_mode, trace

set_console_mode(True)

@trace

def my_func():

return "ok"opensmith reads opensmith.json from the current working directory on import:

Create a starter config:

opensmith init{

"db_path": "./my_traces.db",

"console_mode": false,

"autopatch": ["openai", "qdrant"]

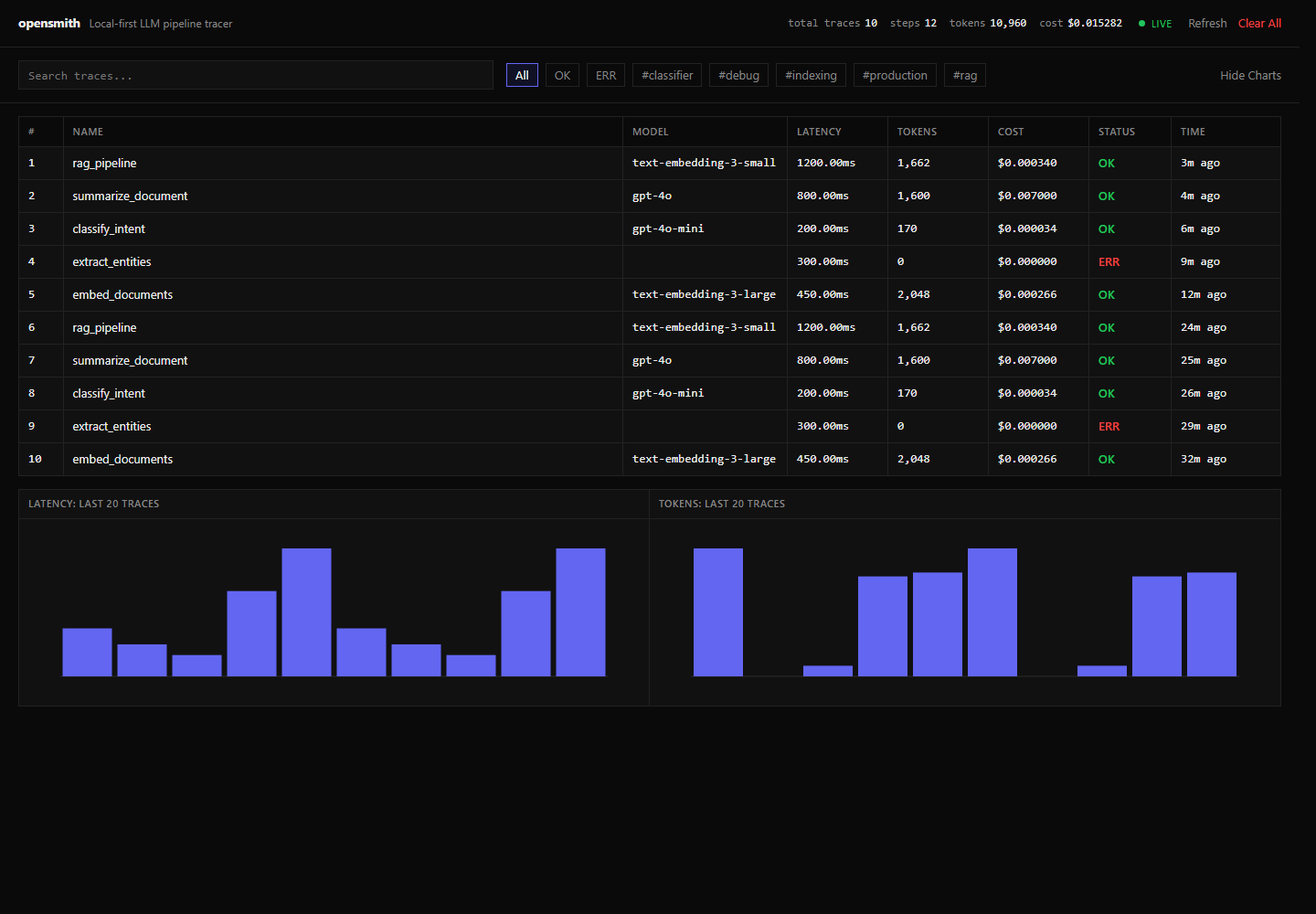

}opensmith uiOpen http://localhost:7823. If the port is already in use, opensmith automatically tries the next available port. Use --no-auto-port to disable this behavior.
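The "next available port" behavior can be pictured as a simple probe loop. The sketch below is an illustration of the general technique, not opensmith's actual implementation; the function name `next_available_port` and the `attempts` limit are assumptions made for the example.

```python
import socket


def next_available_port(start: int = 7823, host: str = "127.0.0.1", attempts: int = 50) -> int:
    """Return the first port at or above `start` that can be bound on `host`."""
    # Stay inside the valid TCP port range while probing upward.
    for port in range(start, min(start + attempts, 65536)):
        with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as probe:
            try:
                probe.bind((host, port))
            except OSError:
                continue  # port is taken or not bindable; try the next one
            return port  # the probe socket is closed when the with-block exits
    raise RuntimeError(f"no free port found starting at {start}")


if __name__ == "__main__":
    print(f"dashboard could listen on port {next_available_port()}")
```

A probe like this is inherently racy: another process may claim the port between the check and the real server start, so a server usually retries the bind itself rather than trusting the probe.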

| Command | Description |
|---|---|
| `opensmith init` | Create a starter opensmith.json config. |
| `opensmith ui` | Start the local dashboard with automatic port selection. |
| `opensmith traces --q rag --status err --tags production` | List and filter traces in the terminal. |
| `opensmith stats` | Show aggregate trace, step, token, and cost statistics. |
| `opensmith export` | Export traces to JSON or CSV. |
| `opensmith clear` | Delete all locally stored traces after confirmation. |
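The `traces` command can also be driven from a script. The sketch below builds the argument list from the three filters shown in the table and runs it only if the `opensmith` executable is on `PATH`. The helper names are hypothetical, and passing a single string to `--tags` is an assumption; how multiple tags are supplied is not covered here.

```python
import shutil
import subprocess
from typing import List, Optional


def traces_command(q: Optional[str] = None, status: Optional[str] = None,
                   tags: Optional[str] = None) -> List[str]:
    """Build the argv for `opensmith traces` from optional filters."""
    argv = ["opensmith", "traces"]
    for flag, value in (("--q", q), ("--status", status), ("--tags", tags)):
        if value:
            argv += [flag, value]
    return argv


def run_traces(**filters: Optional[str]) -> Optional[str]:
    """Run the command if the CLI is installed; return its stdout, else None."""
    if shutil.which("opensmith") is None:
        return None
    result = subprocess.run(traces_command(**filters),
                            capture_output=True, text=True, check=False)
    return result.stdout


if __name__ == "__main__":
    print(traces_command(q="rag", status="err", tags="production"))
```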

| Backend | Package | Status |
|---|---|---|
| openai | openai | ✅ |
| anthropic | anthropic | ✅ |
| litellm | litellm | ✅ |
| qdrant | qdrant-client | ✅ |
| chromadb | chromadb | ✅ |
| pinecone | pinecone-client | ✅ |
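Before choosing which backends to patch, it may be useful to see which of the packages listed above are importable in the current environment. This is a small standard-library sketch; the mapping from backend name to import name (for example `qdrant-client` installing the `qdrant_client` module) reflects those packages' usual top-level modules, and the helper `available_backends` is hypothetical.

```python
from importlib.util import find_spec
from typing import Dict

# Backend name (as in the table above) -> top-level module the package installs.
BACKEND_MODULES = {
    "openai": "openai",
    "anthropic": "anthropic",
    "litellm": "litellm",
    "qdrant": "qdrant_client",
    "chromadb": "chromadb",
    "pinecone": "pinecone",
}


def available_backends() -> Dict[str, bool]:
    """Report, per backend, whether its module can be found without importing it."""
    return {name: find_spec(module) is not None
            for name, module in BACKEND_MODULES.items()}


if __name__ == "__main__":
    for name, present in available_backends().items():
        print(f"{name}: {'installed' if present else 'missing'}")
```

The names that come back as installed are candidates for `autopatch(only=[...])`.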

Traces are stored locally at ~/.opensmith/traces.db unless overridden with opensmith.json or set_default_db_path().
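Because the store is a plain SQLite file, it can be inspected with Python's built-in `sqlite3` module. The sketch below only lists table names and deliberately assumes nothing about opensmith's schema; the helper `list_tables` is hypothetical. The file is opened read-only so that inspection cannot modify or create the database.

```python
import sqlite3
from pathlib import Path
from typing import List

# Default location stated above; override it if you changed db_path.
DEFAULT_DB = Path.home() / ".opensmith" / "traces.db"


def list_tables(db_path: Path = DEFAULT_DB) -> List[str]:
    """Return the table names in a SQLite file, opened in read-only mode."""
    uri = f"{Path(db_path).resolve().as_uri()}?mode=ro"
    conn = sqlite3.connect(uri, uri=True)
    try:
        rows = conn.execute(
            "SELECT name FROM sqlite_master WHERE type = 'table' ORDER BY name"
        ).fetchall()
    finally:
        conn.close()
    return [name for (name,) in rows]


if __name__ == "__main__":
    if DEFAULT_DB.exists():
        print(list_tables())
    else:
        print(f"no database found at {DEFAULT_DB}")
```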

Use Postgres instead by installing `opensmith[postgres]` and setting `OPENSMITH_DB_URL`:

    export OPENSMITH_DB_URL="postgresql://user:pass@localhost:5432/opensmith"

Install both Postgres and OpenTelemetry support with `pip install "opensmith[all]"`.
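A malformed connection URL is an easy mistake to make, so it can be worth checking the value of `OPENSMITH_DB_URL` before starting a pipeline. The sketch below splits the URL with the standard library and is independent of how opensmith itself parses it; `describe_db_url` is a hypothetical helper.

```python
import os
from urllib.parse import urlsplit


def describe_db_url(url: str) -> dict:
    """Split a database URL into its parts, omitting the password."""
    parts = urlsplit(url)
    database = parts.path.lstrip("/")
    if not parts.hostname or not database:
        raise ValueError("URL must include a host and a database name")
    return {
        "scheme": parts.scheme,
        "user": parts.username,
        "host": parts.hostname,
        "port": parts.port,
        "database": database,
    }


if __name__ == "__main__":
    url = os.environ.get("OPENSMITH_DB_URL")
    print(describe_db_url(url) if url else "OPENSMITH_DB_URL is not set")
```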

Export traces with nested steps as JSON:

    opensmith export --format json --output traces.json

Export a flat trace list as CSV:

    opensmith export --format csv --output traces.csv

OpenTelemetry export is disabled by default. Install the optional dependencies and set `OPENSMITH_OTEL_ENDPOINT` to enable OTLP HTTP export:

    pip install "opensmith[otel]"
    export OPENSMITH_OTEL_ENDPOINT="http://localhost:4318"

opensmith sends traces to `/v1/traces` and metrics to `/v1/metrics` under that endpoint.
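To see which URLs a collector needs to accept, the two paths can be joined to the configured endpoint. This is a minimal sketch of that arithmetic; stripping a trailing slash is an assumption of the example rather than documented opensmith behavior, and `otlp_urls` is a hypothetical helper.

```python
import os
from typing import Dict


def otlp_urls(endpoint: str) -> Dict[str, str]:
    """Derive the traces and metrics URLs from a base OTLP HTTP endpoint."""
    base = endpoint.rstrip("/")  # assumption: avoid a double slash in the result
    return {
        "traces": f"{base}/v1/traces",
        "metrics": f"{base}/v1/metrics",
    }


if __name__ == "__main__":
    endpoint = os.environ.get("OPENSMITH_OTEL_ENDPOINT", "http://localhost:4318")
    for signal, url in otlp_urls(endpoint).items():
        print(f"{signal}: {url}")
```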

MIT