
Testing the Project

Like any great project, this one includes a number of built-in methods to ensure the code is functioning as expected. This section describes how to use these testing features.

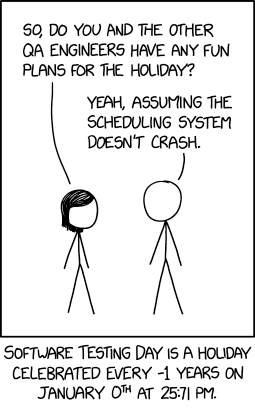

Image Source: xkcd #2928 by Randall Munroe CC-BY-NC 2.5

If you want to diagnose the program's behavior, you can run it in debug mode using the command `make debug`. This will run the simulation and generate additional logging output to help trace the program's execution, including values such as the generated Hamiltonian blocks, eigenvalues and eigenvectors, and observables. The program's console output will be copied to `output/debug.log`.

Tip

If you are running the simulation manually (i.e., not using the Makefile), you can enable debug mode by passing the `--debug` flag to the script.

Caution

This mode generates a LOT of output. It is recommended to use it only for small systems.

Caution

When running in debug mode, it is recommended to disable multi-threading, as it may interleave the output of different threads in the log, making statements that depend on their surrounding context hard to follow. If there is demand, we can investigate adding additional identifiers to the log messages to alleviate this issue. (See Multi-Threading)

In addition to running your own tests, you can use the pre-built tests included in the `tests/` directory.

Currently, the grids test is the only included test. It runs the simulation on a 2x2 grid (4 sites) with periodic boundary conditions under a number of different parameters. The results are then compared against known-correct results, and the resulting differences are reported. This test can be run using the command `make test_grids`.

The sample data for this test is contained in a number of zip files residing in the test directory. These contain folders which correspond to different parameter sets. Each folder contains CSV files generated by other trusted implementations of the Hubbard model.
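To illustrate the layout described above, here is a minimal sketch of how CSV files grouped by folder inside a zip archive could be read. It is written in Python for illustration only and is not the project's own loading code. The folder names (`params_a`, `params_b`), the file name `energy.csv`, and the column names are hypothetical placeholders, not the contents of the real sample data.

```python
import csv
import io
import zipfile
from collections import defaultdict


def read_zip_csvs(zip_source):
    """Return {folder: {csv_name: rows}} for every CSV file in a zip archive."""
    grouped = defaultdict(dict)
    with zipfile.ZipFile(zip_source) as archive:
        for name in archive.namelist():
            if not name.lower().endswith(".csv"):
                continue  # skip directory entries and non-CSV files
            # Split "folder/file.csv" into its folder and file name.
            folder, _, filename = name.rpartition("/")
            with archive.open(name) as raw:
                text = io.TextIOWrapper(raw, encoding="utf-8", newline="")
                grouped[folder][filename] = list(csv.reader(text))
    return dict(grouped)


# Build a tiny in-memory archive so the sketch runs without any real test data.
# All names and values below are hypothetical.
buffer = io.BytesIO()
with zipfile.ZipFile(buffer, "w") as archive:
    archive.writestr("params_a/energy.csv", "x,y\n0.0,1.5\n1.0,2.5\n")
    archive.writestr("params_b/energy.csv", "x,y\n0.0,3.0\n")
buffer.seek(0)

data = read_zip_csvs(buffer)
print(sorted(data))
```

Each top-level key corresponds to one folder (one parameter set), and each inner key to one CSV file within it.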

Tip

These files can also be overlaid onto plots generated by the main code. (See Overlaying CSV Data)

Rather than returning a simple pass/fail result, this test reports the average and maximum differences between the computed results and the sample data for each observable (merged across all parameter sets). This allows you to get a good idea of the accuracy at a glance. (Good results should be on the order of [...].) The results are also written to `output/grids_test_results.csv`. This can be useful for identifying specific test cases when investigating diverging results.
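The kind of summary described here can be sketched as follows. This is a Python illustration under stated assumptions, not the code in the test script: it assumes the "difference" is the absolute difference between paired values, and that any pair containing a NaN is skipped. The function name `summarize_differences` and the sample numbers are made up for the example.

```python
import math


def summarize_differences(computed, reference):
    """Return (mean, max) absolute difference, skipping pairs that contain NaN."""
    diffs = [
        abs(c - r)
        for c, r in zip(computed, reference)
        if not (math.isnan(c) or math.isnan(r))  # assumption: NaN pairs are ignored
    ]
    if not diffs:
        return math.nan, math.nan  # nothing comparable was left
    return sum(diffs) / len(diffs), max(diffs)


# Hypothetical values for one observable; the third pair is skipped due to NaN.
computed = [1.0, 2.5, float("nan"), 4.0]
reference = [1.0, 2.0, 3.0, 4.25]

mean_diff, max_diff = summarize_differences(computed, reference)
print(f"mean = {mean_diff}, max = {max_diff}")
```

Reporting both numbers is useful because the mean shows overall agreement while the maximum exposes a single badly diverging case that the mean could hide.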

Note

Due to numerical limitations, the computed results tend to diverge to NaN for extreme values. By default, this test ignores any NaN values it encounters (because they do not provide useful information and would cause all the results to become NaN). If you are concerned about this behavior, you can open the test script located at `tests/grids/TestGrids.jl` and change the `warn_on_nan` variable at the top of `@main()` (line 21 as of writing) to `true`. This will cause the test to print out a list of all values containing NaNs.

[!CAUTION] Note to myself: This behavior was not correctly updated when we implemented multi-temperature support. The current logging behavior is not well-defined (hence why the last sentence above kind-of trails off) and should be fixed. TODO.